C-A2Meet: The CHI Poster That Asked Why Meeting Interfaces Stay So Rigid

My CHI 2026 poster, C-A2Meet, asks a different question: instead of bolting AI onto meetings, what if the interface itself could reshape around people?

Deep dives into my research papers, philosophical explorations, and thoughts on the intersection of human cognition and artificial intelligence.

My CHI 2026 full paper, Cognitive Bridge, started with one stubborn question: can AI catch designer-developer misunderstandings before they turn into rework?

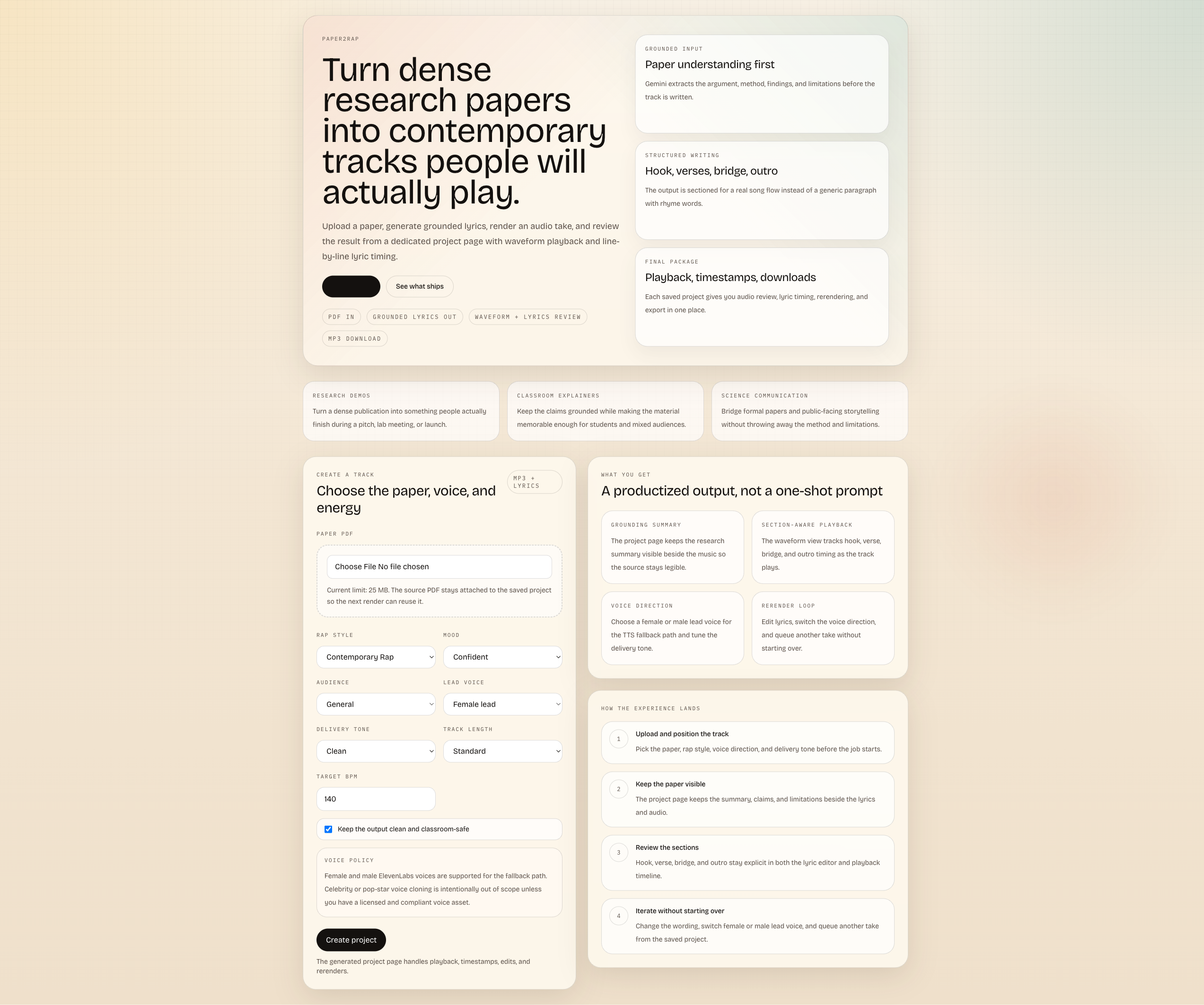

I built Paper2Rap during a flight: a research-to-rap studio with a working local path, but the online Gemini + ElevenLabs stack still gives the best results.

A full technical blueprint for prompt-to-campaign automation using coordinated agents that generate visual, copy, and video assets at production speed.

I participated in a Human Food Interaction workshop run by the Inclusive Reality Lab and used the experience to build three working prototypes: a digital sensory table, a bio-adaptive dining system, and an AR food perception app.

A candid engineering journal from an intense internship: shipping production integrations, solving hard edge cases, and learning how real AI systems succeed.

An HCI perspective on agent platforms: why optimizing for machine metrics alone increases cognitive load, weakens trust, and harms human decision quality.

A practical blueprint for evaluating LLM agents with algorithmic metrics, LLM-as-a-judge methods, and human review to reach robust real-world reliability.

A production playbook for monitoring agent quality over time, from operational telemetry to task-level diagnostics and continuous self-optimization loops.

A deep dive into how Autohive coordinates AI agents, tools, and real business outcomes, from the inside of a startup building production-ready agent systems.

A retro terminal life-sim that helps newcomers navigate housing, health, work, and belonging in Aotearoa, built with Vite, Zustand, and optional LLM scenarios.

It was 3 AM on a Tuesday. I was staring at my laptop, exhausted, wondering where my day went. That night, I decided to build something to find out.

My supervisor YunSuen Pai brought me a painful question: how do we search thousands of CHI PDFs without losing trust, privacy, or rigor? I built a fully-local RAG assistant to answer that.

The full story of the boy who loved food, the student who forgot his body, and the hospital bed that changed everything.

How I applied my researcher mindset to my own biology. The data, the PRs, the injuries, and the transformation from 100kg+ to 70kg.

The exact daily routine, diet, training split, and tech stack I use to optimize my biology. Backed by science and battle-tested.

A system for real-time visualization of flow state to enhance workplace productivity.

What if your virtual meeting had an AI facilitator that could sense when the team is struggling and step in to help? That's exactly what I built with CLARA.

What if you could send a hug through your phone? We studied how people create and interpret emotional touch sensations, and discovered that everyone speaks a different haptic language.

Exploring the synergy between human creativity and AI through interactive particle systems.

A comprehensive overview of foundational theories in Human-Computer Interaction, Human-AI Interaction, and behavioral science.

What if your AI assistant could sense when you're frustrated before you even say it? Exploring empathetic conversational agents.

I created one of the largest datasets for understanding how stressed and emotional people get during video calls. Here's why that matters for the future of remote work.

Exploring James Allen's timeless wisdom on the power of thought and its implications for researchers and creators.

A personal reflection on Viktor Frankl's work and finding purpose through suffering.

Reflections on Paulo Coelho's masterpiece and how it relates to the journey of research and self-discovery.

I built a VR system that lets you not just see your cherished memories, but actually touch and feel them, while it adapts to your emotional state in real time.

What if everyone in your video call was represented by an adorable seal that moves based on the group's collective behavior? We built it, and it actually worked.

What if your smartwatch could detect exactly where you touch your own hand, giving you an invisible keyboard always at your fingertips? That's RadarHand.

What if VR experiences could sense when you're stressed, scared, or engaged, and adapt in real time? That's exactly what we built with CAEVR.

What if your VR collaborator could see when you're stressed or confused through subtle visual cues? We tested whether sharing physiological signals helps or hurts teamwork.

Can VR help people recover from traumatic brain injuries? We built an adaptive system that adjusts difficulty based on the patient's cognitive state.

Can VR build cultural bridges? Exploring how immersive experiences can foster cultural empathy and understanding.

Two strangers enter VR. Their biosignals start changing the world around them. As their rhythms sync, the environment transforms. Can technology create emotional connection between people who've never met?

What if your desk could identify any object you place on it, without cameras or barcodes? Using radar and tiny reflectors, I built exactly that.

Current VR controllers need big gestures. But what if the tiniest finger twitch could trigger actions? Using radar, we made VR respond to micro-movements.

Would you listen to reminders better if they came from someone you love? We explored the surprisingly emotional world of voice assistant personalization, with all its promise and peril.

Close your eyes and touch your nose. How did you know where it was? That's proprioception, and I figured out how to measure it for the hand to design better wearables.