What If Siri Sounded Like Your Mom?

In simple terms, KinVoices explores what happens when voice assistants use synthesized voices of people you know and love: your mom, your partner, your best friend. We found that familiar voices can make reminders more effective and create emotional connections, but they also raise thorny questions about identity, consent, and the uncanny valley of voice.

🎯 Key Takeaways

- More engaging - users paid more attention to reminders from familiar voices

- More persuasive - people were more likely to act on reminders from "kin" voices

- Emotionally complex - familiar voices triggered both comfort and unease

- Privacy matters - users wanted control over when and how kin voices were used

- Design implications - "almost real" can be more unsettling than "clearly synthetic"

The Question We Couldn't Stop Thinking About

Voice assistants are everywhere now. Siri. Alexa. Google Assistant. They've become remarkably capable: answering questions, controlling smart homes, even having conversations.

But they all share something in common: they sound like strangers.

With speech synthesis technology maturing rapidly, we found ourselves asking: What if your smart assistant sounded like someone you know and love?

Would a reminder in your mom's voice be more effective than one from Alexa? Would an alarm in your partner's voice feel different from a generic beep? Would a voice you love make technology feel more human, or more unsettling?

These questions led to KinVoices.

What We Did

We conducted a two-phase study to understand both attitudes and actual behaviors around familiar voices in technology:

Phase 1: Understanding Attitudes (25 Users)

We surveyed and interviewed 25 people about their reactions to the concept of kin voices in voice user interfaces (VUIs). Key questions:

- Would you want your assistant to sound like someone you know?

- Who would you choose?

- What tasks would be appropriate for familiar voices?

- What concerns do you have?

What we found:

- VUIs with kin voices were perceived as more engaging and personal

- Higher persuasiveness for reminders, especially health-related ones

- Increased sense of safety and familiarity

- But also significant eeriness when voices weren't quite right

- Strong opinions about appropriate contexts (reminders yes, information lookup no)

Phase 2: Living With Kin Voices (3 Households, 2 Weeks)

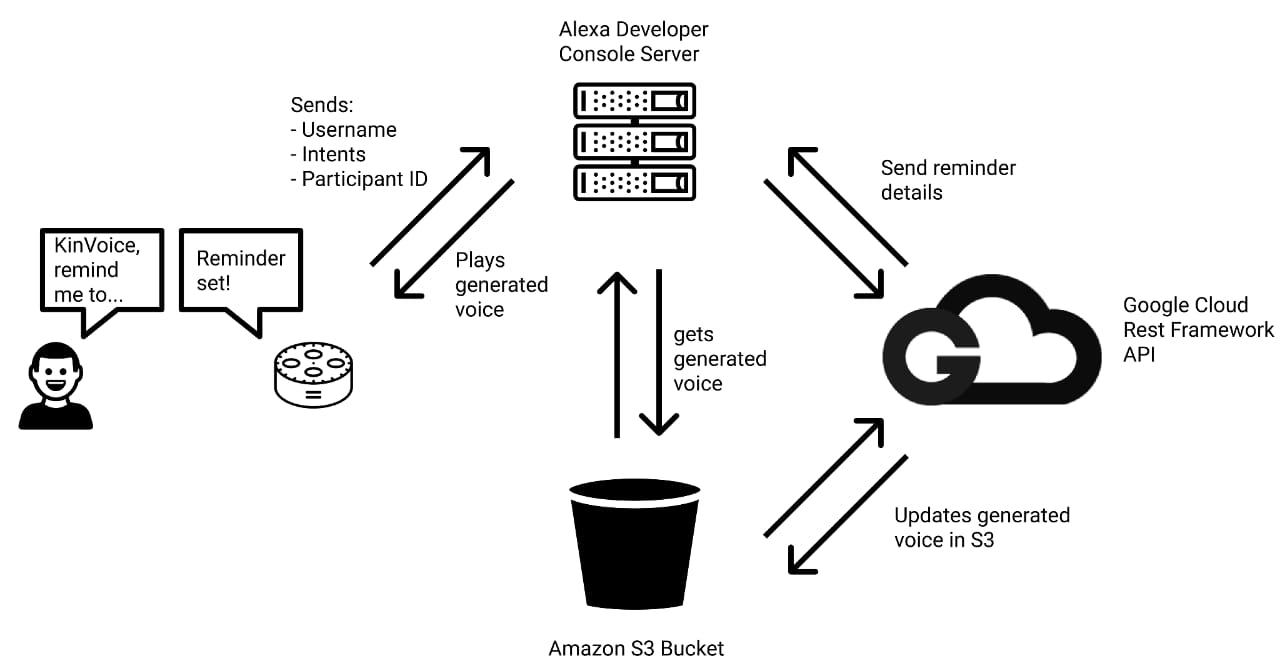

We deployed KinVoice, a modified Alexa-based VUI, in three households for two weeks. The system:

- Let users record voice samples and set reminders in their own voice

- Synthesized voices using state-of-the-art voice cloning technology

- Tracked usage patterns, reminder effectiveness, and emotional responses

- Conducted daily check-ins and exit interviews

What We Discovered

Finding 1: Familiar Voices Are More Effective

Participants responded to reminders from familiar voices more quickly and more consistently than standard Alexa reminders. One participant reported:

"When I hear my wife's voice telling me to take my medicine, it's harder to ignore. It feels like she actually cares, even when she's not home."

The emotional connection translated to behavioral change.

Finding 2: The Uncanny Valley of Voice

Here's where it gets complicated. The closer the synthesized voice got to the real person, the more unsettling minor imperfections became.

When the voice was obviously synthetic (like standard Alexa), users didn't have strong reactions. When it was a perfect replica, users found it natural. But when it was almost right but not quite, users reported feelings of unease, confusion, and even distress.

This suggests a design space where:

- Clearly synthetic kin voices might work better than almost-realistic ones

- Intentional "tells" that mark a voice as synthetic could reduce discomfort

- Expectations need to be set about what users are hearing

Finding 3: Context Matters Enormously

Users had strong opinions about when kin voices were appropriate:

Good contexts:

- Reminders (especially medication, appointments)

- Morning greetings/routines

- Emotional support ("you've got this")

Inappropriate contexts:

- Information lookup ("what's the weather?")

- Transactions ("ordering now...")

- System errors ("I didn't understand that")

The common thread: familiar voices worked for relational interactions, not transactional ones.
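One way a system could encode this split is a small routing rule that uses a kin voice only for relational interaction types. The sketch below is purely illustrative and is not the KinVoices implementation: the `Interaction` categories, the `choose_voice` function, and the voice identifiers are all assumptions invented for this example, loosely following the contexts participants described.

```python
from enum import Enum
from typing import Optional


class Interaction(Enum):
    REMINDER = "reminder"
    GREETING = "greeting"
    ENCOURAGEMENT = "encouragement"
    INFO_LOOKUP = "info_lookup"
    TRANSACTION = "transaction"
    SYSTEM_ERROR = "system_error"


# Hypothetical grouping: the contexts participants called appropriate.
RELATIONAL = {
    Interaction.REMINDER,
    Interaction.GREETING,
    Interaction.ENCOURAGEMENT,
}

DEFAULT_VOICE = "default-assistant"  # placeholder identifier, not a real voice ID


def choose_voice(interaction: Interaction, kin_voice: Optional[str]) -> str:
    """Use the kin voice for relational interactions, the default otherwise."""
    if kin_voice is not None and interaction in RELATIONAL:
        return kin_voice
    return DEFAULT_VOICE


print(choose_voice(Interaction.REMINDER, "mom"))     # prints "mom"
print(choose_voice(Interaction.INFO_LOOKUP, "mom"))  # prints "default-assistant"
```

Keeping the relational set as data rather than logic would make it easy for each household to adjust which contexts qualify.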

Finding 4: Privacy and Consent Are Non-Negotiable

Users had serious concerns:

- Who can use my voice? (Requires explicit consent)

- Can others synthesize my voice without permission? (Scary)

- What happens if someone dies? Can their voice still be used? (Complicated)

- Could this enable manipulation or deception? (Yes, absolutely)

These aren't hypothetical concerns. They're real design constraints.

Design Guidelines We Developed

Based on our findings, we propose principles for anyone building kin voice features:

1. Transparency First

Always indicate when synthetic voices are being used. Users should never be deceived about whether they're hearing a real person or a synthesized voice.

2. Explicit Consent

Both the person being synthesized AND the person hearing the voice should explicitly consent. Voice is deeply personal, and using someone's voice without permission is a violation.
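As a rough illustration of what two-sided consent could look like in code, the sketch below allows synthesis only when both the speaker and the listener have opted in for that specific pairing. The `ConsentRegistry` class and its method names are hypothetical, made up for this example; a real system would also need identity verification, persistence, and audit logging.

```python
from dataclasses import dataclass, field
from typing import Set, Tuple

Pair = Tuple[str, str]  # (speaker, listener)


@dataclass
class ConsentRegistry:
    # Pairs where the speaker agreed to have their voice synthesized.
    speaker_grants: Set[Pair] = field(default_factory=set)
    # Pairs where the listener agreed to hear that synthesized voice.
    listener_grants: Set[Pair] = field(default_factory=set)

    def grant_as_speaker(self, speaker: str, listener: str) -> None:
        self.speaker_grants.add((speaker, listener))

    def grant_as_listener(self, speaker: str, listener: str) -> None:
        self.listener_grants.add((speaker, listener))

    def may_synthesize(self, speaker: str, listener: str) -> bool:
        """True only if BOTH parties consented for this exact pairing."""
        pair = (speaker, listener)
        return pair in self.speaker_grants and pair in self.listener_grants


registry = ConsentRegistry()
registry.grant_as_speaker("wife", "husband")
print(registry.may_synthesize("wife", "husband"))  # prints False (listener has not agreed)
registry.grant_as_listener("wife", "husband")
print(registry.may_synthesize("wife", "husband"))  # prints True
```

Consent here is scoped to a pair, so agreeing to be heard by one person does not imply agreeing to be heard by anyone else.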

3. Granular Control

Let users specify exactly when and how kin voices are used. Not everyone wants their partner's voice for every interaction.

4. Consider Intentional Imperfection

Perfect voice cloning might not be the goal. Small markers that signal "this is synthetic" can prevent uncanny valley effects and maintain trust.

5. Plan for Difficult Cases

What happens when relationships end? When people die? When consent is withdrawn? Systems need graceful handling of these scenarios.
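A minimal sketch of "graceful handling", under the assumption that the safest default is to fall back to a neutral voice and tell the listener why: the `VoiceStatus` values, the notice wording, and `resolve_voice` are hypothetical names chosen for illustration, and the right policy for each case (especially a deceased speaker) is a design decision this sketch does not settle.

```python
from enum import Enum
from typing import Tuple


class VoiceStatus(Enum):
    ACTIVE = "active"
    CONSENT_WITHDRAWN = "consent_withdrawn"
    RELATIONSHIP_ENDED = "relationship_ended"
    SPEAKER_DECEASED = "speaker_deceased"


DEFAULT_VOICE = "default-assistant"  # placeholder identifier

# Hypothetical listener-facing explanations for each fallback case.
NOTICES = {
    VoiceStatus.CONSENT_WITHDRAWN: "This voice is no longer available.",
    VoiceStatus.RELATIONSHIP_ENDED: "This voice has been turned off.",
    VoiceStatus.SPEAKER_DECEASED: "This voice is paused pending your review.",
}


def resolve_voice(kin_voice: str, status: VoiceStatus) -> Tuple[str, str]:
    """Return (voice to use, notice for the listener); notice is empty if none."""
    if status is VoiceStatus.ACTIVE:
        return kin_voice, ""
    # Any non-active status falls back to the default voice with an explanation.
    return DEFAULT_VOICE, NOTICES[status]


voice, notice = resolve_voice("partner", VoiceStatus.CONSENT_WITHDRAWN)
print(voice)   # prints "default-assistant"
print(notice)  # prints "This voice is no longer available."
```

Failing closed means a reminder still gets delivered, just not in a voice whose use is no longer clearly permitted.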

The Ethical Landscape

KinVoices sits at the intersection of powerful technology and deep human emotion. As voice synthesis improves, the questions get harder:

Who owns a voice?

Your voice is part of your identity. But is it property? Can it be copied, sold, or inherited?

How do we prevent misuse?

Voice cloning enables compelling deepfakes. How do we ensure this technology is used for connection, not manipulation?

What about the deceased?

Several companies now offer services to "bring back" deceased relatives' voices. Is this healing or harmful? Who decides?

These questions don't have easy answers. But they're crucial to address as the technology matures and becomes widely available.

📚 Personal Reflections: What I Learned

Voice Is Identity

Before this project, I thought of voice as just another interface modality, like touch or gesture. I was wrong.

Voice is deeply entwined with identity, memory, and relationship. Hearing a loved one's voice triggers emotional responses that no other interface can match. This makes voice a powerful tool, and a potentially dangerous one.

Technology Amplifies Everything

The same technology that enables a grandfather's voice to remind his grandkids to do homework also enables manipulative scam calls that sound like trusted family members.

This taught me that powerful technology is never neutral. Every design decision either makes positive uses easier or negative uses harder. We have to be deliberate about which.

Users Know What They Want (And What They Don't)

The users in our study had incredibly nuanced views on when familiar voices were appropriate. They weren't anti-technology; they were pro-thoughtfulness.

This reinforced my belief that the best design comes from deeply understanding user perspectives, not just implementing what's technically possible.

Research Can Surface Hard Questions

KinVoices didn't solve the ethical challenges of voice synthesis. But it surfaced them clearly and gave designers a framework for thinking about them.

Sometimes the most valuable research doesn't provide answers. It helps us ask better questions.

Connection to My Broader Work

KinVoices connects directly to my interest in technology that supports human relationships:

- CLARA uses AI to facilitate human connection in meetings

- Re-Touch uses technology to preserve and reconnect with memories

- Haptic Empathy explores how touch can convey emotion remotely

In all these projects, the core question is the same: How can technology support human connection without replacing it?

KinVoices taught me that voice is one of the most powerful, and most sensitive, channels for connection. Use it wisely.