Cognitive Bridge: The CHI Paper That Grew Out of a Collaboration Frustration

By Tamil Selvan Gunasekaran

When the CHI 2026 acceptance came through, I did not immediately think about metrics or contribution statements. I thought about how long this idea had been sitting in my head.

Cognitive Bridge came out of a frustration I kept running into in collaborative work. Two people can be competent, sincere, and fully engaged, and still walk away from the same conversation carrying two different versions of what was decided.

That kind of misalignment sounds small when you say it casually. In practice, it burns time, confidence, and momentum. It creates that horrible moment a few days later when you realize nobody was actually talking about the same thing. I have seen versions of that happen often enough that I stopped treating it as a communication mistake and started treating it as a design problem.

Somewhere in that frustration, the question became impossible for me to ignore: what if AI could help surface the misunderstanding while it was still small, before it hardened into rework?

The Problem Behind the Paper

I have become increasingly obsessed with moments when collaboration looks smooth from the outside but is actually misaligned underneath.

In cross-functional teams, this happens constantly:

- A designer says "keep it intuitive" and a developer hears "there will be hidden technical complexity."

- A developer says "we can do that later" and a designer hears "that detail is not important."

- Everyone agrees in the meeting, but the agreement lives in different mental models.

What makes this difficult, and honestly fascinating, is that the failure is rarely dramatic. Nobody is shouting. Nobody is obviously confused. The breakdown hides inside ordinary language and polite agreement.

That is why this project centered on boundary objects such as diagrams, flowcharts, and shared visual artifacts. I was less interested in AI as a chatbot and more interested in AI as a collaborator that helps teams externalize what they mean.

In the paper, that framing is grounded in work on boundary objects, semantic boundaries, and distributed cognition. The basic intuition is simple but powerful: teams think better when they have shared external representations to reason through together, especially when the people in the room come from different disciplines and carry different assumptions.

What I Actually Built

At the center of Cognitive Bridge is the idea I kept returning to whenever the project became too complicated:

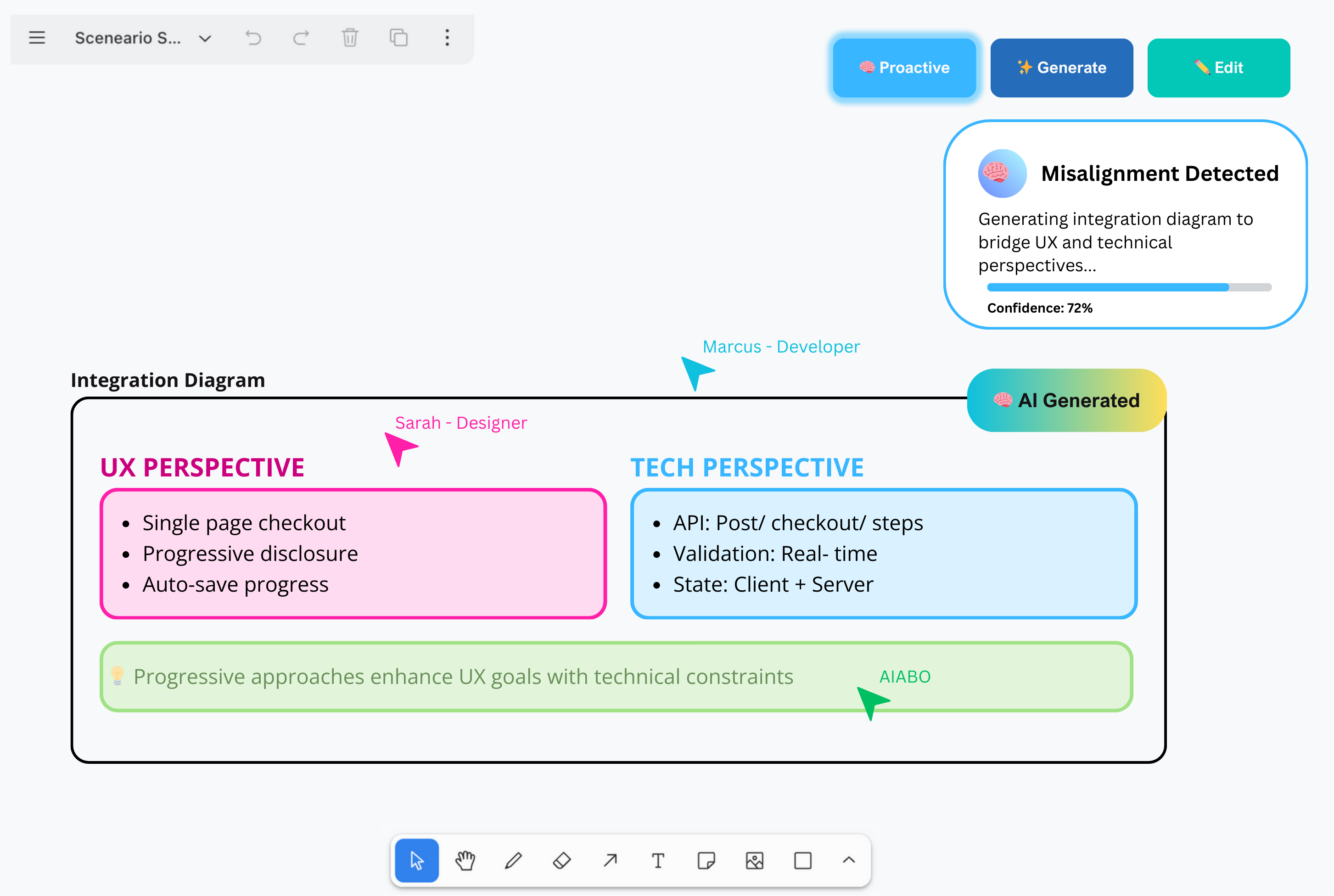

When a designer and developer begin drifting apart semantically, the system should generate a shared artifact that gives them something concrete to negotiate together.

Instead of treating AI as the final decision-maker, I wanted it to act more like a mediator. It detects friction, creates a visual bridge, and then steps back so people can edit, challenge, and improve what appears.

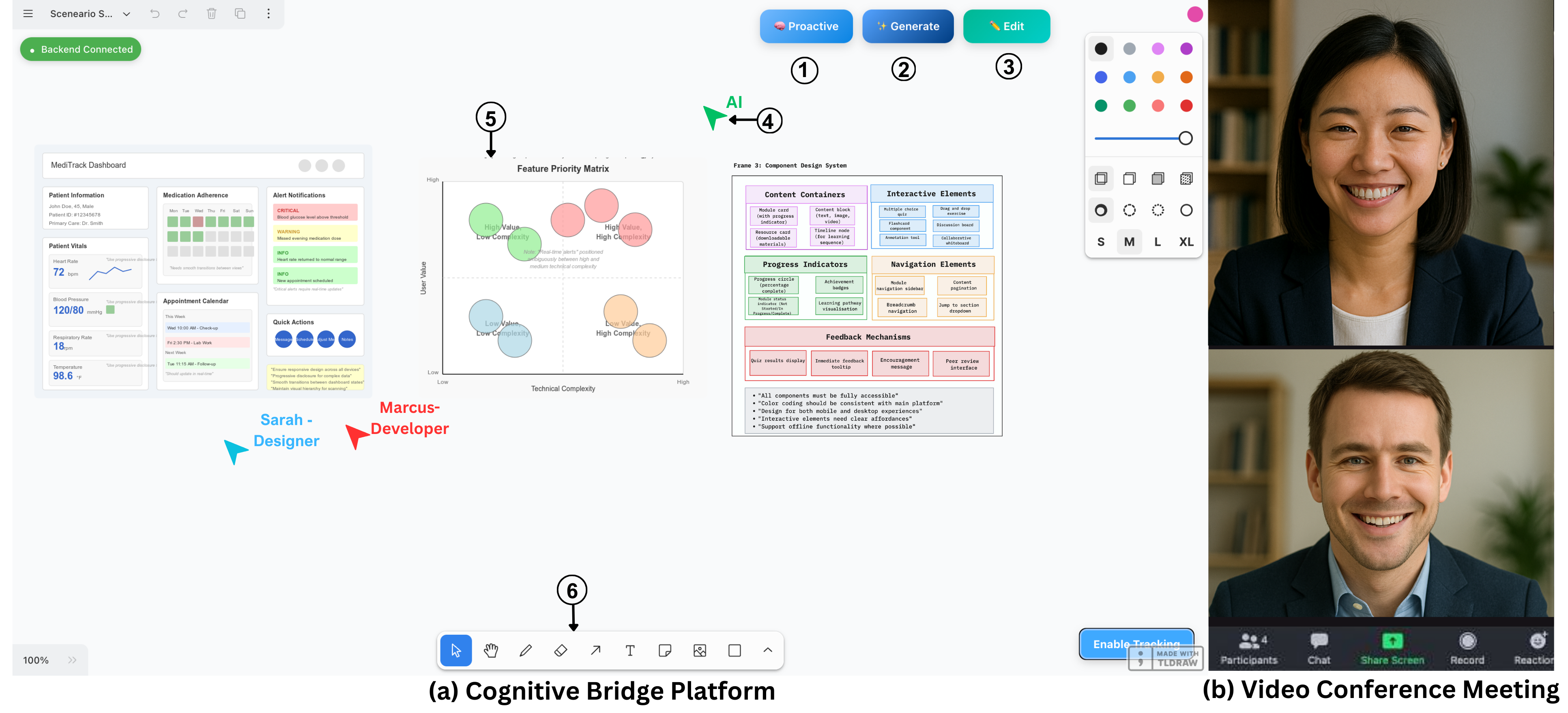

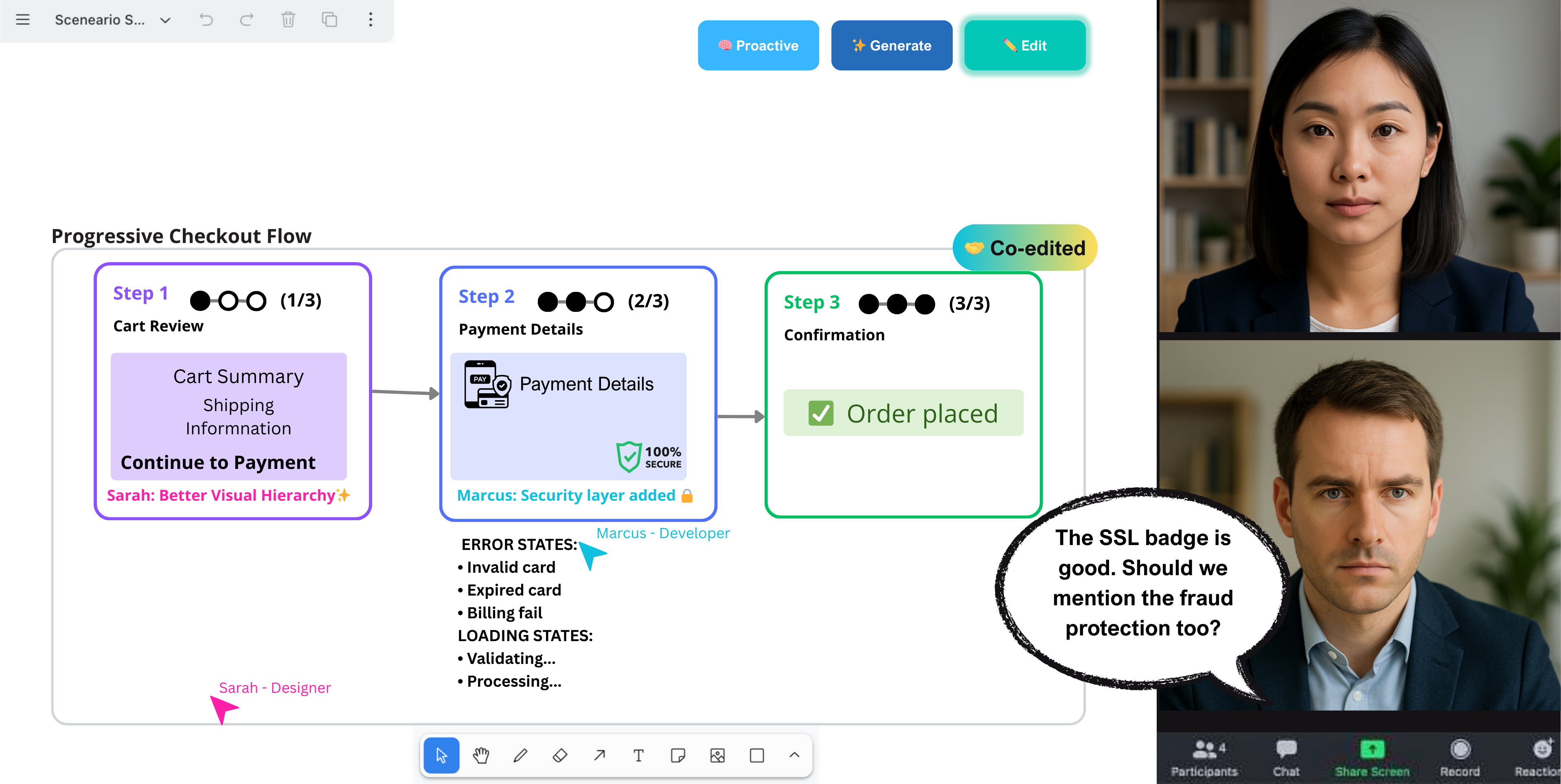

In practice, the system works through three modes:

- Proactive: the system detects misalignment and suggests a bridge automatically

- Generate: users intentionally ask for a comparison, diagram, or flow

- Edit: both collaborators modify the result until it reflects real shared understanding

That last part mattered to me more than I expected. If the artifact arrives and stays untouched, then the AI is quietly taking over the conversation. If it arrives and gets edited, pushed back on, and reshaped, then the humans are still doing the real collaborative work.
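To make the difference between "arrives and stays untouched" and "arrives and gets reshaped" more concrete, here is a minimal sketch of how edits to generated artifacts could be logged. It is hypothetical: `Artifact`, `record_edit`, and `edit_rate` are illustrative names rather than the paper's implementation, and it assumes an artifact counts as "edited" once any collaborator changes it.

```python
from dataclasses import dataclass, field


@dataclass
class Artifact:
    """A hypothetical AI-generated bridge artifact and who has edited it."""
    artifact_id: str
    kind: str  # e.g. "comparison", "diagram", "flow"
    editors: set[str] = field(default_factory=set)  # roles that modified it

    def record_edit(self, role: str) -> None:
        # Remember which collaborator (e.g. "designer" or "developer") changed it.
        self.editors.add(role)

    @property
    def edited(self) -> bool:
        # Edited at least once by anyone.
        return bool(self.editors)

    @property
    def co_edited(self) -> bool:
        # True only when both roles have reshaped the artifact.
        return {"designer", "developer"} <= self.editors


def edit_rate(artifacts: list[Artifact]) -> float:
    """Fraction of artifacts edited at least once (0.0 for an empty list)."""
    if not artifacts:
        return 0.0
    return sum(a.edited for a in artifacts) / len(artifacts)


flow = Artifact("a1", "flow")
comparison = Artifact("a2", "comparison")
flow.record_edit("designer")
flow.record_edit("developer")
print(edit_rate([flow, comparison]))  # 0.5
print(flow.co_edited)                 # True
```

Tracking *who* edited, not just *whether* something was edited, is a deliberate choice in this sketch: an artifact reshaped by only one role may still be one person's view imposed on the other.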

Technically, the system monitors a combination of:

- conversation transcripts

- facial expressions

- whiteboard interactions

- project artifacts already present in the workspace

It then decides whether it should intervene, what kind of visual bridge would help, and how that artifact should be shaped so both roles can work on it.
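The decision described above, whether to intervene and with what, can be sketched as a small policy over the monitored signals. This is an illustration under stated assumptions, not the system's actual detector. The names `Signals`, `WEIGHTS`, `THRESHOLD`, `choose_bridge`, and `decide` are hypothetical, the weights and threshold are invented, and a simple weighted sum stands in for whatever multimodal detection the real system uses. The `Mode` values mirror the three modes listed earlier.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Mode(Enum):
    PROACTIVE = "proactive"  # system-initiated bridge
    GENERATE = "generate"    # a user explicitly asked for one
    EDIT = "edit"            # collaborators refine an existing bridge


@dataclass
class Signals:
    """Hypothetical per-window cues, each assumed already normalized to [0, 1]."""
    transcript_divergence: float  # from conversation transcripts
    confusion_expression: float   # from facial expressions
    whiteboard_idle: float        # from whiteboard interactions
    artifact_conflict: float      # from existing project artifacts


# Illustrative values only; not taken from the paper.
WEIGHTS = {"transcript_divergence": 0.4, "confusion_expression": 0.2,
           "whiteboard_idle": 0.1, "artifact_conflict": 0.3}
THRESHOLD = 0.6


def misalignment_score(s: Signals) -> float:
    # Weighted sum; the weights sum to 1, so the score lies in [0, 1]
    # up to floating-point rounding.
    return sum(w * getattr(s, name) for name, w in WEIGHTS.items())


def choose_bridge(s: Signals) -> str:
    # Hypothetical mapping from the dominant cue to an artifact type.
    if s.artifact_conflict >= s.transcript_divergence:
        return "integration view"  # UX and technical perspectives side by side
    return "comparison"            # two readings of the same phrase


def decide(s: Signals, user_request: Optional[str] = None) -> Optional[tuple[Mode, str]]:
    """Return (mode, bridge kind), or None to stay quiet."""
    if user_request is not None:
        return Mode.GENERATE, user_request
    if misalignment_score(s) >= THRESHOLD:
        return Mode.PROACTIVE, choose_bridge(s)
    return None


result = decide(Signals(0.9, 0.9, 0.5, 0.2))
print(result[0].value, result[1])  # proactive comparison
print(decide(Signals(0.1, 0.1, 0.1, 0.1)))  # None
```

Returning `None` as an explicit outcome reflects the restraint question discussed later in this post: staying quiet is treated as a decision the policy has to make, not a fallback.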

The Moment the Idea Became Real

One of my favorite parts of this project is that the system does not just summarize discussion. It makes discussion visible.

When the system detects that the two sides are pulling in different directions, it can generate an integration view that places the UX perspective and the technical perspective side by side. That sounds simple, but it changes the quality of the conversation. People stop arguing over vague phrases and start reacting to something inspectable.

That was the emotional center of the work for me. I was never trying to build a system that "wins" the meeting by being cleverer than the people in it. I was trying to build a system that gives the meeting a better object to think with.

That distinction also shaped the design of the study. Before building the final system, we ran a formative study with seven professionals to understand where current designer-developer collaboration breaks down. That helped us identify recurring issues around semantic translation, temporal coordination, and the limits of static artifacts.

Why the Architecture Matters

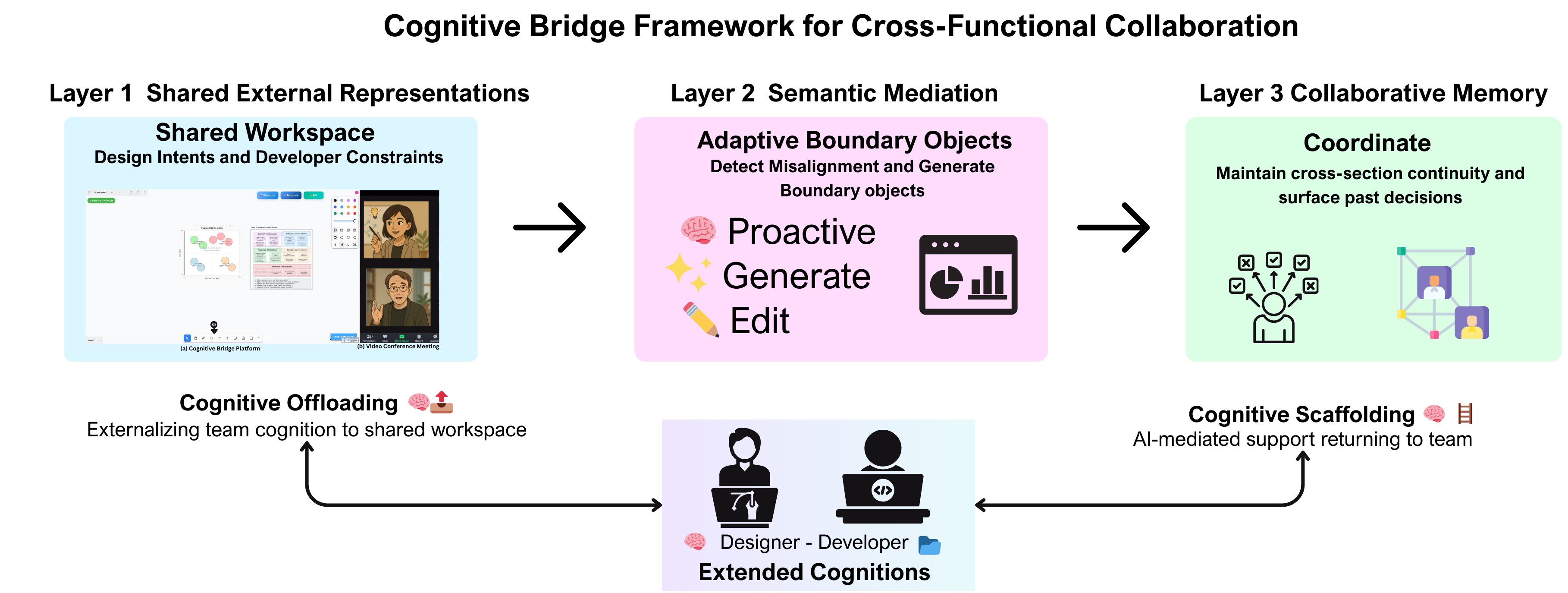

What made this more than a design sketch was the attempt to connect three things at once:

- A shared workspace where design intent and technical constraints live together.

- A mediation layer that detects confusion from multimodal signals.

- A collaborative memory layer that helps teams carry decisions forward rather than rediscover them every time.

That structure helped me think about the difference between cognitive offloading and cognitive scaffolding.

- Offloading means the team gets ideas out of their heads and into a shared workspace.

- Scaffolding means the AI helps them reason through the hard part without replacing their judgment.

That distinction became one of the most important intellectual anchors in the project.

Another part that mattered was collaborative memory. Meetings do not happen in isolation. Teams revisit the same product areas, the same trade-offs, and often the same misunderstandings across sessions. So the system was designed not just to generate an artifact in the moment, but to preserve context that can be revisited later.
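One way to picture the collaborative memory layer is as a log of decisions grouped by product area, so that a team returning to the same area sees what it agreed last time. The sketch below is hypothetical: `Decision`, `CollaborativeMemory`, `remember`, and `recall` are illustrative names, and it assumes a simple in-memory store rather than whatever persistence the real system uses.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Decision:
    """A hypothetical record of what a dyad agreed on in one session."""
    session: date
    area: str         # product area, e.g. "onboarding"
    summary: str      # the agreed interpretation
    artifact_id: str  # the bridge artifact the decision was reached on


class CollaborativeMemory:
    """Minimal in-memory store: decisions grouped by product area."""

    def __init__(self) -> None:
        self._by_area: dict[str, list[Decision]] = defaultdict(list)

    def remember(self, decision: Decision) -> None:
        self._by_area[decision.area].append(decision)

    def recall(self, area: str) -> list[Decision]:
        # Most recent first, so a returning team sees the latest agreement.
        # .get() avoids creating empty entries for areas never discussed.
        return sorted(self._by_area.get(area, []),
                      key=lambda d: d.session, reverse=True)


memory = CollaborativeMemory()
memory.remember(Decision(date(2025, 3, 1), "onboarding",
                         "skip email verification for MVP", "a1"))
memory.remember(Decision(date(2025, 3, 8), "onboarding",
                         "verify email after first project", "a7"))
print(memory.recall("onboarding")[0].summary)  # verify email after first project
print(memory.recall("billing"))                # []
```

Keeping earlier decisions rather than overwriting them lets a team see how an agreement changed across sessions, which is often where a recurring misunderstanding shows up.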

What the Study Showed

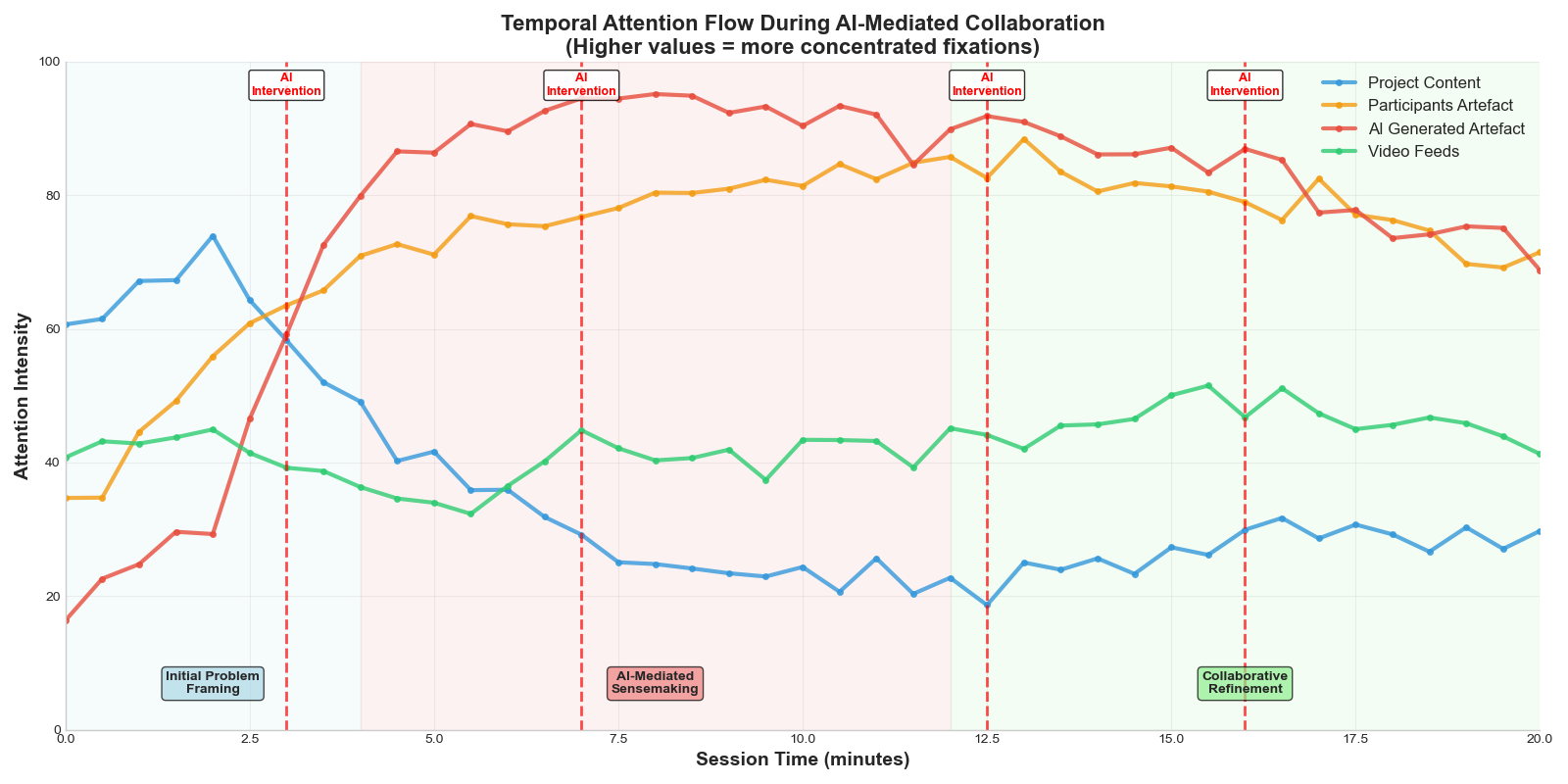

This became a full CHI paper because the idea did not stay at the level of taste or intuition. We evaluated it with designer-developer dyads and got results that pushed it from "this feels promising" into "this is doing something measurable."

| Outcome | Result |
|---|---|
| Communication conflicts | 47% lower than the baseline |
| Implementable solutions | 34% higher than the baseline |
| Joint visual attention during interventions | 34% increase |
| AI-generated artifacts edited by teams | 85% |

The 85% number is one of my favorites because it says something deeper than raw performance. It suggests people were not passively consuming AI output. They were correcting it, arguing with it, and making it theirs.

That said, the paper also surfaced a tension I take seriously: AI can accelerate alignment so much that it risks narrowing exploration too early.

That trade-off matters. Faster agreement is not always better thinking.

This is probably the most important nuance in the whole project. A system can improve efficiency and still damage the deeper quality of collaborative thinking. Some of the participants made it clear that when the AI stepped in too quickly with something polished, it sometimes reduced the productive messiness that real design work needs.

What the Paper Does Not Fully Capture

The published paper gives the formal story. What it cannot fully hold is what the project did to my own thinking while I was building it.

The hardest design problem was not generation quality. It was restraint.

I kept asking myself:

- When should AI intervene, and when should it stay quiet?

- How do you support a team without making them dependent on suggestions?

- How do you make the artifact helpful enough to move discussion forward, but unfinished enough that people still feel ownership?

That is the part of Human-AI Interaction I care about most. I am not interested in AI that dazzles people for two minutes and then leaves them less engaged with each other. I am interested in AI that helps people think together better without quietly taking authorship away from them.

The co-editing mode became important to me for exactly that reason.

There is also a broader academic reason this project matters to me. A lot of AI tooling today is optimized around individual productivity. This paper pushed me to think much more seriously about team cognition and the question of what it means to design AI for negotiation, translation, and shared sensemaking rather than solo acceleration.

Why This CHI Acceptance Means a Lot to Me

Getting a full paper into CHI with this project means a lot to me because it validates a direction I genuinely want to spend years pushing on:

AI should not only generate content. It should help people build shared understanding.

There is also something deeply satisfying about the shape of this contribution. It sits at the intersection of CSCW, HAI, generative interfaces, and very real collaborative pain points. That is exactly the kind of research space I want to inhabit.

For me, Cognitive Bridge is not just a single accepted paper. It is a statement about the kind of systems I want to build:

- systems that are socially aware

- systems that respect expertise on both sides

- systems that improve coordination without flattening human creativity

CHI 2026 is the milestone, but it is not the whole story. The deeper value is that this paper gave me a sharper vocabulary for the kind of questions I want my research life to keep circling:

- How should AI enter collaboration without flattening it?

- How do we design support that helps people coordinate without making them intellectually lazy?

- What does it mean for a system to genuinely protect shared understanding?

That is why this acceptance feels bigger to me than a line on a CV. It feels like one of those rare moments when the paper, the question, and the researcher I am trying to become all line up.

Selected Academic References

Gunasekaran, T. S., Lim, S., Gupta, K., Bai, H., Pai, Y. S., & Billinghurst, M. (2026). Cognitive Bridge: AI-generated boundary objects for cross-functional collaboration. In Proceedings of the 2026 CHI Conference on Human Factors in Computing Systems. Association for Computing Machinery. https://doi.org/10.1145/3772318.3791399

Bowker, G. C., Timmermans, S., Clarke, A. E., & Balka, E. (2016). Boundary objects and beyond: Working with Leigh Star. MIT Press.

Carlile, P. R. (2002). A pragmatic view of knowledge and boundaries: Boundary objects in new product development. Organization Science, 13(4), 442-455.

Lee, C. P. (2007). Boundary negotiating artifacts: Unbinding the routine of boundary objects and embracing chaos in collaborative work. Computer Supported Cooperative Work, 16(3), 307-339.

Caccamo, M., Pittino, D., & Tell, F. (2023). Boundary objects, knowledge integration, and innovation management: A systematic review of the literature. Technovation, 122, 102645.

Park, G. W. W., Panda, P., Tankelevitch, L., & Rintel, S. (2024). The CoExplorer technology probe: A generative AI-powered adaptive interface to support intentionality in planning and running video meetings [arXiv preprint].

Simkute, A., Tankelevitch, L., Kewenig, V., Scott, A. E., Sellen, A., & Rintel, S. (2024). Ironies of generative AI: Understanding and mitigating productivity loss in human-AI interactions [arXiv preprint].