Monitoring AI Agents and Self-Optimization: How Production Agent Systems Get Smarter Over Time

Part 2 of 6 | By Tamil Selvan Gunasekaran, AI Agent Developer Intern at Autohive

The Problem With AI Agents Today

One thing hit me early in my internship at Autohive: a lot of teams are deploying agents without really knowing whether those agents are any good.

The pattern is familiar. You wire up a model, add some tools, get a few nice responses in testing, push it out, and then mostly rely on vibes.

Traditional software gives you mature observability. If an API slows down, you know. If a queue backs up, you know. But agents break in a much more annoying way. They can be fully "healthy" from an infrastructure perspective and still be quietly useless. They can respond fast, stay up all day, and still misunderstand the user's intent, call the wrong tool, or produce something no one should trust.

Traditional monitoring tells you if the engine is running. Agent monitoring tells you if the car is driving in the right direction.

Most agent platforms still do not answer the question I actually care about: is this agent improving, or is it just running? For me, that became the difference between a toy and a production system.

What Agent Monitoring Actually Looks Like

I ended up thinking about agent monitoring as four stacked layers. Not because it sounded elegant, but because every time one layer was missing, debugging became painful.

Layer 1: Operational Monitoring

This is the foundation. StatsD, Datadog, CloudWatch — whatever your infrastructure team already uses. You are tracking the basics:

- Timing: How long does each LLM call take? How long does tool execution take?

- Counters: How many requests per minute? How many errors?

- Gauges: Current queue depth. Active connections. Memory usage.

# Pseudocode: operational metrics
metrics.timer("llm.completion.duration", elapsed_ms)
metrics.counter("agent.task.completed")
metrics.counter("agent.task.failed")
metrics.gauge("agent.queue.depth", pending_tasks)
metrics.counter("llm.tokens.used", token_count, tags=["model:gpt-4o"])

This layer tells you "is the system healthy?" It is necessary but not sufficient.
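For readers who want something they can run, here is a minimal sketch of the same calls against an in-memory recorder. `InMemoryMetrics` is a hypothetical stand-in for whatever StatsD or Datadog client you already have, not a real library class, and the `time.sleep` is only a placeholder for an LLM call.

```python
import time
from collections import defaultdict
from contextlib import contextmanager


class InMemoryMetrics:
    """Toy stand-in for a metrics client; everything stays in process."""

    def __init__(self):
        self.counters = defaultdict(int)
        self.gauges = {}
        self.timings = defaultdict(list)

    def counter(self, name, value=1, tags=()):
        # Tags are part of the key so per-model counts stay separate.
        self.counters[(name, tuple(sorted(tags)))] += value

    def gauge(self, name, value):
        # A gauge keeps only the latest observed value.
        self.gauges[name] = value

    @contextmanager
    def timer(self, name):
        start = time.perf_counter()
        try:
            yield
        finally:
            # Record elapsed milliseconds even if the timed block raises.
            self.timings[name].append((time.perf_counter() - start) * 1000)


metrics = InMemoryMetrics()

with metrics.timer("llm.completion.duration"):
    time.sleep(0.01)  # placeholder for the real LLM call

metrics.counter("agent.task.completed")
metrics.counter("llm.tokens.used", 850, tags=["model:gpt-4o"])
metrics.gauge("agent.queue.depth", 3)
```

Wrapping the timer in a context manager means a failed call still records its duration, which tends to be exactly the call you want timing data for.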

Layer 2: Task Monitoring

This is where agent monitoring really starts to break away from traditional APM. You have to track task executions, not just HTTP requests. One conversation can trigger five tool calls, three completions, and two background jobs. If you cannot see that chain, you are mostly guessing.

A well-designed agent platform should expose an admin dashboard that shows:

- Execution status: Running, completed, failed, waiting

- Success rates: What percentage of tasks actually achieve the user's goal?

- Wait times: How long do users wait before the agent responds?

- Retry patterns: Which tasks are failing and being retried?

Datadog will not hand you this. You have to build it yourself.
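As a rough illustration of what sits behind such a dashboard, the sketch below aggregates a batch of task records into the four views listed above. The `TaskExecution` fields and the `goal_achieved` flag are assumptions made for this example; how you decide whether a task met the user's goal is a separate problem.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class TaskExecution:
    task_id: str
    status: str          # "running", "completed", "failed", or "waiting"
    goal_achieved: bool  # judged separately, e.g. by a reviewer or an eval
    wait_seconds: float  # time until the agent's first response
    retries: int = 0


def summarize(executions):
    """Aggregate dashboard numbers for one batch of task executions."""
    finished = [e for e in executions if e.status in ("completed", "failed")]
    achieved = sum(1 for e in finished if e.goal_achieved)
    return {
        "status_counts": dict(Counter(e.status for e in executions)),
        # Success means the goal was met, not merely that the task finished.
        "success_rate": achieved / len(finished) if finished else None,
        "avg_wait_seconds": (
            sum(e.wait_seconds for e in executions) / len(executions)
            if executions else None
        ),
        "retried_tasks": [e.task_id for e in executions if e.retries > 0],
    }


executions = [
    TaskExecution("t1", "completed", True, 1.2),
    TaskExecution("t2", "completed", False, 0.8),  # finished, but missed the goal
    TaskExecution("t3", "failed", False, 2.0, retries=2),
    TaskExecution("t4", "running", False, 0.4),
]
summary = summarize(executions)
```

Note that `t2` counts as "completed" in the status view but still lowers the success rate. Keeping those two numbers separate is the point of this layer.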

Layer 3: Background Job Health

Agents do not just respond to users. In any serious agent platform, there are scheduled jobs running constantly — syncing data, processing queues, cleaning up stale sessions, running evaluations. Each of these is a potential point of failure.

You need a dedicated view into:

- Job schedules: When did each job last run? When is the next run?

- Job duration: Is a job that usually takes 10 seconds suddenly taking 5 minutes?

- Job failures: Which jobs are failing silently?

The silent failure problem is especially dangerous with agents. A background job that syncs user context might fail, and the agent keeps running — but now it is missing critical context and giving worse answers. The user sees degraded quality but has no idea why.
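One way to catch the silent case is to alert on what did not happen. The sketch below flags a job as stale when it has missed two expected runs and as slow when its last run took several times longer than usual. The `JobRecord` shape, the job names, and both thresholds are assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class JobRecord:
    name: str
    interval: timedelta               # how often the job is expected to run
    last_success: Optional[datetime]  # None if it has never succeeded
    typical_seconds: float
    last_duration_seconds: float


def job_alerts(jobs, now, slow_factor=5.0):
    """Return (job_name, reason) pairs for jobs that look unhealthy."""
    alerts = []
    for job in jobs:
        # Missing two expected runs is treated as a silent failure.
        if job.last_success is None or now - job.last_success > 2 * job.interval:
            alerts.append((job.name, "stale"))
        if job.last_duration_seconds > slow_factor * job.typical_seconds:
            alerts.append((job.name, "slow"))
    return alerts


now = datetime(2026, 2, 9, 12, 0, tzinfo=timezone.utc)
jobs = [
    JobRecord("context-sync", timedelta(minutes=15), now - timedelta(hours=3), 10, 12),
    JobRecord("session-cleanup", timedelta(hours=1), now - timedelta(minutes=20), 10, 300),
    JobRecord("eval-runner", timedelta(hours=24), now - timedelta(hours=5), 600, 640),
]
alerts = job_alerts(jobs, now)
```

Here `context-sync` raises no error anywhere, yet it is flagged because its last success is three hours old on a fifteen-minute schedule.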

Layer 4: Real-time Communication

When you are running agent evaluations or watching an agent handle a complex multi-step task, you need live updates. HTTP polling is not good enough.

Modern agent platforms use WebSockets or SignalR to push real-time progress updates to admin dashboards:

# Pseudocode: real-time eval progress
hub.send("evaluation-progress", {
    eval_id: "eval-2026-02-09",
    model: "claude-sonnet-4",
    test_case: 14,
    total_cases: 50,
    status: "running",
    current_score: 0.87
})

This matters especially during evaluation runs, where you might be testing dozens of models against hundreds of test cases. Without real-time updates, you are staring at a loading spinner for twenty minutes.
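The push model can be sketched without any network code. Below, a hypothetical `ProgressHub` fans events out to subscriber queues, with each `asyncio.Queue` standing in for one WebSocket or SignalR connection. This is an in-process illustration under those assumptions, not how any particular hub library works.

```python
import asyncio


class ProgressHub:
    """In-process stand-in for a WebSocket or SignalR hub."""

    def __init__(self):
        self._subscribers = []

    def subscribe(self):
        queue = asyncio.Queue()
        self._subscribers.append(queue)
        return queue

    async def send(self, event, payload):
        # Push to every subscriber instead of waiting to be polled.
        for queue in self._subscribers:
            await queue.put((event, payload))


async def run_eval(hub, total_cases):
    for case in range(1, total_cases + 1):
        await asyncio.sleep(0)  # placeholder for running one test case
        await hub.send("evaluation-progress",
                       {"test_case": case, "total_cases": total_cases})
    await hub.send("evaluation-complete", {"total_cases": total_cases})


async def dashboard(queue):
    """Collect events until the run reports completion."""
    seen = []
    while True:
        event, payload = await queue.get()
        seen.append((event, payload))
        if event == "evaluation-complete":
            return seen


async def main():
    hub = ProgressHub()
    queue = hub.subscribe()
    viewer = asyncio.create_task(dashboard(queue))
    await run_eval(hub, total_cases=3)
    return await viewer


received = asyncio.run(main())
```

The dashboard coroutine never asks "is it done yet?". It simply reacts as each event arrives, which is the behavior you want from the real transport too.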

Core Primitives: Events, Tracing, and Replay

Agent monitoring requires three foundational primitives that most teams overlook.

Structured Event Logs — Every agent action should emit a typed event: ConversationStarted, ToolCallExecuted, LlmCompletionReceived, TaskCompleted. Each event carries a correlation chain: conversation_id → task_execution_id → tool_call_id. This lets you reconstruct exactly what happened during any interaction.

Distributed Tracing — Borrow from OpenTelemetry. Each agent interaction creates a trace with nested spans:

Trace: conversation-9f3a
└─ Span: task-execution (agent: support-bot, prompt_version: v7)
   ├─ Span: llm-completion (model: claude-sonnet-4, tokens: 850, latency: 1.2s)
   ├─ Span: tool-call (tool: kb_search, success: true, latency: 340ms)
   └─ Span: llm-completion (model: claude-sonnet-4, tokens: 420, latency: 0.8s)

Replay — The ability to re-run a conversation with a frozen prompt version and recorded tool outputs. This is invaluable for debugging. When an agent misbehaves, you capture the exact inputs, tool responses, and configuration — then replay it locally to reproduce the issue. Without replay, debugging agents is like debugging a production server without logs.

The difference between traditional APM and agent monitoring: APM tracks request-response cycles. Agent monitoring tracks reasoning chains.
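To make the link between event logs and replay concrete, here is a minimal sketch. It assumes each `ToolCallExecuted` event stores the tool name, arguments, and output in its payload. `AgentEvent`, `recorded_tool_outputs`, and `ReplayTools` are hypothetical names invented for this example.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentEvent:
    event_type: str          # e.g. "ToolCallExecuted"
    conversation_id: str
    task_execution_id: str
    tool_call_id: str = ""   # empty for events that are not tool calls
    payload: dict = field(default_factory=dict)


def recorded_tool_outputs(events, conversation_id):
    """Collect (tool, args, output) for one conversation, in call order."""
    return [
        (e.payload["tool"], e.payload["args"], e.payload["output"])
        for e in events
        if e.conversation_id == conversation_id
        and e.event_type == "ToolCallExecuted"
    ]


class ReplayTools:
    """Serves recorded outputs instead of calling the live tools."""

    def __init__(self, recordings):
        self._recordings = list(recordings)

    def call(self, tool, args):
        if not self._recordings:
            raise RuntimeError(f"replay diverged: unexpected call to {tool}")
        expected_tool, expected_args, output = self._recordings.pop(0)
        if (tool, args) != (expected_tool, expected_args):
            raise RuntimeError(f"replay diverged: expected {expected_tool}, got {tool}")
        return output


log = [
    AgentEvent("ConversationStarted", "conv-9f3a", "task-1"),
    AgentEvent("ToolCallExecuted", "conv-9f3a", "task-1", "call-1",
               {"tool": "kb_search", "args": {"query": "reset password"},
                "output": ["doc-42"]}),
    AgentEvent("ToolCallExecuted", "conv-0000", "task-9", "call-7",
               {"tool": "kb_search", "args": {"query": "billing"},
                "output": ["doc-7"]}),
    AgentEvent("TaskCompleted", "conv-9f3a", "task-1"),
]

tools = ReplayTools(recorded_tool_outputs(log, "conv-9f3a"))
replayed = tools.call("kb_search", {"query": "reset password"})
```

Raising on divergence is a deliberate choice in this sketch: if the replayed agent asks for a different tool than the recording shows, that mismatch is usually the bug you were looking for.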

Agent Performance Metrics That Matter

Not all metrics are created equal. When you are running agents in production, there are five categories of metrics that actually matter. Everything else is vanity.

1. Business Impact: Hours Saved

This is the north star metric. Everything else is a supporting detail.

How much human time does the agent save per conversation?

This is not a technical metric. It is the business reason the system exists. If an agent handles a support ticket in 30 seconds that used to take a human 15 minutes, the 14.5 minutes saved on that ticket is the number that matters.

# Pseudocode: hours saved calculation
hours_saved = (estimated_human_time - actual_agent_time) * conversation_count
hours_saved_per_agent = group_by(agent_id).sum(hours_saved)

A good agent platform tracks this at the workspace level, the team level, and the individual agent level. You want to know which agents are delivering the most value — and which ones are not pulling their weight.
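A runnable version of that calculation might look like the sketch below. It assumes each conversation record carries an estimated human time and the agent's actual time, both in minutes; the field names are made up for the example.

```python
from collections import defaultdict


def hours_saved_by_agent(conversations):
    """Sum (human estimate - agent time) per agent, converted to hours."""
    totals = defaultdict(float)
    for conv in conversations:
        saved_minutes = conv["estimated_human_minutes"] - conv["agent_minutes"]
        totals[conv["agent_id"]] += saved_minutes / 60
    return dict(totals)


conversations = [
    # The support-ticket example: 15 minutes by hand, 30 seconds by agent.
    {"agent_id": "support-bot", "estimated_human_minutes": 15, "agent_minutes": 0.5},
    {"agent_id": "support-bot", "estimated_human_minutes": 15, "agent_minutes": 0.5},
    {"agent_id": "report-bot", "estimated_human_minutes": 60, "agent_minutes": 3},
]
saved = hours_saved_by_agent(conversations)
```

The result is only as good as the human-time estimate, so it is worth storing that estimate alongside each conversation rather than hard-coding one number per agent.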

2. Conversation Volume

Simple but important. How many conversations is each agent handling? Across which teams? During which hours?

Volume trends tell you adoption stories. If an agent's conversation count drops 40% in a week, something is wrong — maybe the agent's quality degraded, maybe the team found a better workflow, maybe there is a bug. Without tracking volume, you would never notice.
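A week-over-week check is enough to surface that kind of drop. The sketch below assumes you already have conversation counts per agent for two consecutive weeks; the 40% threshold mirrors the example above and is not a recommendation.

```python
def volume_drops(this_week, last_week, threshold=0.40):
    """Return agents whose weekly conversation count fell by at least `threshold`."""
    flagged = {}
    for agent_id, previous in last_week.items():
        if previous == 0:
            continue  # no baseline to compare against
        # An agent missing from this week's counts is treated as zero volume.
        current = this_week.get(agent_id, 0)
        drop = (previous - current) / previous
        if drop >= threshold:
            flagged[agent_id] = round(drop, 2)
    return flagged


last_week = {"support-bot": 12000, "report-bot": 800, "hr-bot": 500}
this_week = {"support-bot": 7000, "report-bot": 780}
flagged = volume_drops(this_week, last_week)
```

An agent that disappears from the counts entirely shows up as a 100% drop, which is usually the case you most want to hear about.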

3. Token Usage

LLM tokens are the raw material of agent systems, and they are not free. You need granular tracking:

- Per-model: Each model family has its own tokenizer, so the same text produces different token counts on GPT-4o, Claude, or Gemini

- Per-agent: Some agents are chatty, some are concise

- Per-modality: Text tokens vs. image tokens have very different costs

- Input vs. output: Output tokens are typically priced higher than input tokens

# Pseudocode: token tracking
track_usage({
    agent_id: "support-bot-v3",
    model: "claude-sonnet-4",
    input_tokens: 2400,
    output_tokens: 850,
    modality: "text",
    conversation_id: "conv-9f3a"
})

4. Cost Tracking

Token usage feeds directly into cost. But cost tracking is trickier than it sounds because LLM pricing is not uniform — it varies by model, by modality, by tier, and it changes frequently.

A production agent system needs a pricing lookup layer that maps token counts to actual dollar costs:

- Per-conversation cost: What did this single interaction cost?

- Per-agent cost: What does this agent cost to run per day/week/month?

- Cost trends: Is an agent getting more expensive over time? (This usually means the prompts are getting bloated.)
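A pricing lookup layer can be as small as a dictionary keyed by model and modality. In the sketch below, the model names and per-million-token prices are entirely hypothetical placeholders, since real prices differ by provider and change often. `Decimal` is used so that summing many tiny amounts does not accumulate floating-point error.

```python
from decimal import Decimal

# Hypothetical prices in USD per million tokens. Replace with real, dated values.
PRICING = {
    ("example-model-large", "text"): {"input": Decimal("3.00"), "output": Decimal("15.00")},
    ("example-model-small", "text"): {"input": Decimal("0.30"), "output": Decimal("1.20")},
}


def conversation_cost(usage_records):
    """Sum the dollar cost of every LLM call recorded for one conversation."""
    total = Decimal("0")
    for record in usage_records:
        price = PRICING[(record["model"], record["modality"])]
        total += Decimal(record["input_tokens"]) * price["input"] / 1_000_000
        total += Decimal(record["output_tokens"]) * price["output"] / 1_000_000
    return total


usage = [
    {"model": "example-model-large", "modality": "text",
     "input_tokens": 2400, "output_tokens": 850},
]
cost = conversation_cost(usage)
```

Per-agent and trend views are then just this function grouped by agent and by day. Because prices change, it helps to store the price that was in effect with each usage record rather than recomputing history from today's table.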

5. Top Performing Agents

Once you have business impact and cost data, you can rank agents. This is where it gets interesting.

Think of it like a sports league table. You want to see:

- Which agents save the most hours?

- Which agents have the best cost-to-value ratio?

- Which agents are improving over time vs. degrading?

Filter by time period — daily, weekly, monthly — and you start to see patterns. Maybe an agent that works great on weekdays falls apart on weekends when it encounters different types of requests.

The metric that matters most is not accuracy — it is business impact. An agent that is 95% accurate but saves zero time is worse than an agent that is 85% accurate but saves 100 hours per week.

Agent Failure Taxonomy

Before you monitor well, you need to get specific about failure. Below is the failure taxonomy I found most useful, with the signal I would watch and the fix I would try first.

| Failure Type | Detection Signal | Metric | Remediation |

|---|---|---|---|

| Hallucinated tool call | Tool name not in available set | tool_error.not_found counter | Tighten system prompt; reduce temperature |

| Wrong tool / wrong args | Tool returns validation error | tool_error.invalid_args rate | Add few-shot examples to prompt; improve tool descriptions |

| Tool timeout / error | HTTP 5xx or timeout from external API | tool_error.timeout rate | Add retry logic; implement circuit breakers |

| Looping / thrashing | Same tool called 3+ times with identical args | agent.loop_detected counter | Add loop detection; cap max tool calls per turn |

| Context loss | Agent asks for information already provided | User re-sends same info; escalation rate spikes | Check context window limits; improve retrieval |

| Safety refusal | Agent refuses action the user needs | agent.safety_refusal counter | Review guardrail thresholds; add approved action allowlists |

Track these failure types as first-class metrics, not just generic error counts. The difference between "5% error rate" and "3% tool timeouts + 2% hallucinated calls" is the difference between knowing something is wrong and knowing how to fix it.
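Two of these rows are easy to sketch in code. The example below detects the "same tool, identical args, 3+ times" loop signal and maps a failed tool call onto the metric names from the table. The function names and the `tool_error.unclassified` fallback are assumptions added for illustration.

```python
import json
from collections import Counter


def detect_loop(tool_calls, max_repeats=3):
    """Return the first (tool, args) seen `max_repeats` times in a turn, else None."""
    seen = Counter()
    for tool, args in tool_calls:
        # Serialize with sorted keys so equal dicts produce the same key.
        key = (tool, json.dumps(args, sort_keys=True))
        seen[key] += 1
        if seen[key] >= max_repeats:
            return tool, args
    return None


def classify_tool_failure(tool, available_tools, validation_error=False,
                          timed_out=False, status_code=None):
    """Map one failed tool call onto a metric name from the taxonomy."""
    if tool not in available_tools:
        return "tool_error.not_found"        # hallucinated tool call
    if validation_error:
        return "tool_error.invalid_args"     # wrong tool or wrong args
    if timed_out or (status_code is not None and status_code >= 500):
        return "tool_error.timeout"          # timeout or 5xx from the API
    return "tool_error.unclassified"         # hypothetical catch-all bucket


calls = [
    ("kb_search", {"q": "refund", "top_k": 3}),
    ("kb_search", {"top_k": 3, "q": "refund"}),  # same args, different key order
    ("ticket_create", {"title": "Refund request"}),
    ("kb_search", {"q": "refund", "top_k": 3}),
]
loop = detect_loop(calls)
available = {"kb_search", "ticket_create", "escalate"}
```

When `detect_loop` returns a value, increment `agent.loop_detected` and stop the turn rather than letting the agent spend more tokens on the same call.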

Case Study: Diagnosing a Silent Quality Degradation

This is the kind of scenario that made all of this feel necessary to me:

The setup: A support agent (support-bot-v3) handles 12,000 conversations per week. It uses a calendar integration to schedule meetings and a knowledge base tool to look up product documentation.

The incident: On a Monday morning, the team notices that the "hours saved" metric dropped 35% over the weekend. No alerts fired — uptime was 100%, latency was normal, error rates looked flat.

The investigation: Drilling into the task monitoring layer reveals the problem. The calendar API changed its authentication scheme over the weekend. Tool calls to calendar.create_event were returning 401 errors — but the agent was catching those errors and responding with "I was unable to schedule the meeting, please try again later" instead of escalating. From the operational monitoring layer, everything looked healthy.

| Metric | Before (Friday) | After (Monday) |

|---|---|---|

| ToolSuccessRate | 92% | 71% |

| Avg Cost/Conversation | $0.03 | $0.04 |

| Avg Turns/Conversation | 3.2 | 5.1 |

| Escalation Rate | 8% | 31% |

| Hours Saved/Week | 480 | 312 |

The fix: Rolling back to the previous prompt version (which included explicit instructions to escalate on auth failures) restored the escalation behavior within minutes. Tool success rates recovered once the calendar API integration was updated separately.

The lesson: Without task-level monitoring that tracks tool success rates by tool name, this would have been invisible. The agent was "working" — it just was not working well.
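Breaking the rate down by tool is a small amount of code. The sketch below assumes each tool call is logged as a (tool name, succeeded) pair and compares a baseline window against the current one. The sample data is invented and does not reproduce the numbers in the table above.

```python
from collections import defaultdict


def success_rate_by_tool(tool_calls):
    """tool_calls: iterable of (tool_name, succeeded) pairs."""
    totals = defaultdict(lambda: [0, 0])  # tool -> [successes, calls]
    for tool, succeeded in tool_calls:
        totals[tool][1] += 1
        if succeeded:
            totals[tool][0] += 1
    return {tool: ok / n for tool, (ok, n) in totals.items()}


def degraded_tools(baseline, current, max_drop=0.10):
    """Tools whose success rate fell by more than `max_drop` versus baseline."""
    return sorted(
        tool for tool, rate in current.items()
        if baseline.get(tool, rate) - rate > max_drop
    )


friday = ([("kb_search", True)] * 9 + [("kb_search", False)]
          + [("calendar.create_event", True)] * 9
          + [("calendar.create_event", False)])
monday = ([("kb_search", True)] * 9 + [("kb_search", False)]
          + [("calendar.create_event", True)] * 2
          + [("calendar.create_event", False)] * 8)

baseline = success_rate_by_tool(friday)
current = success_rate_by_tool(monday)
degraded = degraded_tools(baseline, current)
```

The aggregate rate blends a healthy tool with a broken one. The per-tool view points straight at `calendar.create_event`.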

The Evaluation Arena: Where Models Compete

One idea that changed how I think about agent development was treating model selection like a competition.

Inspired by the LMSYS Chatbot Arena (Zheng et al., 2023), the concept of an evaluation arena brings the same competitive benchmarking approach to production agent systems. But where LMSYS focuses on general chatbot quality, an agent evaluation arena tests what actually matters for your specific use cases.

How It Works

The evaluation arena is a structured competition space where multiple LLMs are tested against the same set of test cases. No favoritism, no cherry-picking. Same inputs, same evaluation criteria, different models.

Each test case includes:

- User prompt: The actual input the agent would receive

- Expected output: What a correct response looks like (or key criteria it must satisfy)

- System prompt override: Optionally test with different system prompts

- Tool definitions: For agents that use tools, include the tool schemas

Three Evaluation Modes

Not all evaluations are the same. A well-designed arena supports multiple modes:

Pointwise Evaluation: Rate a single model's output on a scale. "How good is this response from 1 to 10?" Simple, fast, good for initial screening.

Pairwise Evaluation: Show outputs from two models side by side for the same prompt. "Which response is better?" This is the format that LMSYS made famous, and it is remarkably effective at revealing subtle quality differences.

Agentic Evaluation: This is where it gets specific to agent systems. You are not just testing text output — you are testing behaviors. Did the agent call the right tool? Did it pass the correct parameters? Did it handle the multi-step workflow correctly?

# Pseudocode: agentic evaluation test case
test_case = {
    category: "ToolUse",
    prompt: "Schedule a meeting with John for next Tuesday at 2pm",
    expected_tool_calls: [
        { tool: "calendar.check_availability", args: { date: "next_tuesday", time: "14:00" } },
        { tool: "calendar.create_event", args: { attendee: "john", ... } }
    ],
    scoring: {
        correct_tool_selection: 0.4,
        correct_parameters: 0.3,
        correct_sequence: 0.2,
        response_quality: 0.1
    }
}

Test Categories

Organize test cases into categories that reflect how agents are actually used:

| Category | What It Tests |

|---|---|

| General | Basic conversational quality, helpfulness |

| Reasoning | Multi-step logic, deduction, problem-solving |

| Coding | Code generation, debugging, explanation |

| ToolUse | Correct tool selection and parameter passing |

| Creative | Writing, brainstorming, content generation |

| DataExtraction | Pulling structured data from unstructured text |

Batch Evaluation

The real power comes from running all models against all test cases in a batch. In a single evaluation run, you might test 8 models across 50 test cases — that is 400 individual evaluations. With real-time progress updates streaming to your dashboard, you can watch the leaderboard shift as results come in.

The output is a leaderboard: models ranked by aggregate score, broken down by category. You might discover that Model A dominates at reasoning but struggles with tool use, while Model B is mediocre at everything but exceptionally good at data extraction. This information is gold when you are deciding which model to assign to which agent.
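Building that leaderboard from raw results is mostly grouping and averaging. The sketch below assumes each evaluation produces a (model, category, score) triple with scores between 0 and 1. The model names are placeholders, and averaging category means (rather than all scores) is one design choice among several.

```python
from collections import defaultdict
from statistics import mean


def leaderboard(results):
    """results: iterable of (model, category, score) with scores in [0, 1]."""
    by_model = defaultdict(lambda: defaultdict(list))
    for model, category, score in results:
        by_model[model][category].append(score)

    rows = []
    for model, categories in by_model.items():
        per_category = {c: mean(scores) for c, scores in categories.items()}
        rows.append({
            "model": model,
            # Average the category means so a large category does not dominate.
            "overall": mean(list(per_category.values())),
            "by_category": per_category,
        })
    return sorted(rows, key=lambda r: r["overall"], reverse=True)


results = [
    ("model-a", "Reasoning", 0.9), ("model-a", "Reasoning", 0.8),
    ("model-a", "ToolUse", 0.5),
    ("model-b", "Reasoning", 0.6),
    ("model-b", "ToolUse", 0.9), ("model-b", "ToolUse", 0.7),
]
board = leaderboard(results)
```

In this toy data `model-a` wins on reasoning while `model-b` ranks first overall on the strength of tool use, which is the kind of split the category breakdown is meant to expose.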

Agent Self-Optimization: The Feedback Loop

Monitoring tells you how your agents are doing. Evaluation tells you how your models compare. But self-optimization is where the system starts to improve itself.

Think of it like a sports coach reviewing game tapes. You watch the footage (monitoring), you compare players (evaluation), and then you adjust the game plan (optimization). The best teams do this continuously — not once a quarter.

Prompt Versioning

The system prompt is the DNA of an agent. Change it, and you change everything about how the agent behaves. Which means you need to treat prompt changes with the same rigor that you treat code changes.

Prompt versioning means creating an immutable snapshot every time an agent's configuration changes. Not just the system prompt text — the entire configuration:

- System prompt content (hashed with SHA256 for integrity)

- Selected model

- Temperature, topP, topK parameters

- Available tools and their schemas

- Any guardrails or constraints

# Pseudocode: prompt version snapshot
version = {
    version_id: "v7",
    agent_id: "support-bot",
    created_at: "2026-02-09T14:30:00Z",
    prompt_hash: "a3f2b8c1...",  # SHA256
    prompt_text: "You are a helpful support agent...",
    model: "claude-sonnet-4",
    temperature: 0.7,
    tools: ["kb_search", "ticket_create", "escalate"],
    performance_score: null  # filled in after evaluation
}

Every version is stored. Every version can be compared. Every version can be reverted. Without that, the rest of the self-optimization story gets very shaky.
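Here is one way the snapshot could be made immutable in practice, using a frozen dataclass and `hashlib.sha256`. The `prompt_hash` follows the description above. The additional `config_hash` over the whole configuration is my own assumption, added so that a tool or temperature change is detectable even when the prompt text is untouched.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)  # frozen: fields cannot be reassigned after creation
class PromptVersion:
    version_id: str
    agent_id: str
    prompt_text: str
    model: str
    temperature: float
    tools: tuple
    created_at: str

    @property
    def prompt_hash(self):
        return hashlib.sha256(self.prompt_text.encode("utf-8")).hexdigest()

    @property
    def config_hash(self):
        # Hash every behavior-affecting field, not only the prompt text.
        config = asdict(self)
        del config["version_id"], config["created_at"]
        canonical = json.dumps(config, sort_keys=True)
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def snapshot(version_id, agent_id, prompt_text, model, temperature, tools):
    return PromptVersion(version_id, agent_id, prompt_text, model, temperature,
                         tuple(tools), datetime.now(timezone.utc).isoformat())


prompt = "You are a helpful support agent."
v6 = snapshot("v6", "support-bot", prompt, "claude-sonnet-4", 0.7,
              ["kb_search", "ticket_create"])
v7 = snapshot("v7", "support-bot", prompt, "claude-sonnet-4", 0.7,
              ["kb_search", "ticket_create", "escalate"])
```

In this example `v6` and `v7` share a `prompt_hash` but differ in `config_hash`, because only the tool list changed.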

Optimization Runs

An optimization run is a developer-initiated session where you deliberately test an agent under controlled conditions. You pick a set of test inputs, run them against the current configuration, then tweak the configuration and run them again.

The workflow looks like this:

- Select an agent

- Choose or create a set of test inputs (real user conversations work best)

- Run the agent against those inputs with the current configuration

- Review the results — where did it do well? Where did it fail?

- Adjust the prompt, model, or parameters

- Run again

- Compare results side by side

This is manual, deliberate optimization. It is not automated because the judgment calls ("is this response actually better?") still require human expertise. But the infrastructure — versioning, comparison, scoring — should make each iteration take minutes, not hours.

Model Recommendations

Once you have enough evaluation data, patterns emerge. The system can start making recommendations:

- "Based on evaluation results, claude-sonnet-4 scores 23% higher than gpt-4o for your ToolUse test cases."

- "Your support agent's cost would drop 40% by switching to gemini-2.5-flash with only a 3% decrease in quality scores."

- "For your data extraction agent, gpt-4o outperforms all other models by a significant margin."

These recommendations come from the evaluation arena data. No guesswork — just evidence.

Regression Gates and Safe Rollout

Optimization without guardrails is just gambling. Every prompt change needs a release process:

- Candidate prompt — Make your change, version it

- Eval suite — Run the full test suite against the candidate

- Regression gates — The candidate must pass hard thresholds:

  - ToolSuccessRate must not drop below 85%

  - Cost per conversation must not increase by more than 20%

  - No new safety refusals on the existing test suite

- Canary rollout (5%) — Route 5% of traffic to the new version, monitor for 24 hours

- Full rollout — If canary metrics hold, promote to 100%
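The gates and the canary split above can be sketched in a few lines. The metric field names are assumptions, the thresholds are the ones listed in the steps, and hashing the conversation ID is just one common way to get a stable 5% bucket.

```python
import hashlib


def check_gates(baseline, candidate):
    """Return gate violations; an empty list means the candidate may proceed."""
    violations = []
    if candidate["tool_success_rate"] < 0.85:
        violations.append("tool success rate below 85%")
    if candidate["cost_per_conversation"] > baseline["cost_per_conversation"] * 1.20:
        violations.append("cost per conversation up more than 20%")
    new_refusals = set(candidate["refused_test_ids"]) - set(baseline["refused_test_ids"])
    if new_refusals:
        violations.append(f"new safety refusals: {sorted(new_refusals)}")
    return violations


def in_canary(conversation_id, percent=5):
    """Deterministically route roughly `percent`% of conversations to the candidate."""
    digest = hashlib.sha256(conversation_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % 100 < percent


baseline = {"tool_success_rate": 0.92, "cost_per_conversation": 0.030,
            "refused_test_ids": ["t17"]}
good = {"tool_success_rate": 0.90, "cost_per_conversation": 0.033,
        "refused_test_ids": ["t17"]}
bad = {"tool_success_rate": 0.81, "cost_per_conversation": 0.040,
       "refused_test_ids": ["t17", "t23"]}

good_result = check_gates(baseline, good)
bad_result = check_gates(baseline, bad)
```

Because the bucket comes from a hash of the ID rather than a random draw, a given conversation stays on the same prompt version for its whole lifetime, which keeps canary metrics clean.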

Prompt bloat control is a related concern. As you iterate, prompts tend to grow — each fix adds a new instruction, a new edge case, a new example. Set a token budget for system prompts and enforce it. Diff your prompts between versions. If a prompt grows 40% in a month, something is wrong.

A/B Testing

Sometimes you want to compare two specific prompt versions on the same test cases. A/B testing for agents works like A/B testing for web pages, but instead of measuring click-through rates, you are measuring response quality, tool calling accuracy, and business impact.

# Pseudocode: A/B test setup

ab_test = {

agent: "support-bot",

variant_a: "prompt-v6", # current production prompt

variant_b: "prompt-v7", # candidate with refined instructions

test_cases: load("support-bot-test-suite"),

metrics: ["quality_score", "tool_accuracy", "response_length", "cost"]

}

results = run_ab_test(ab_test)

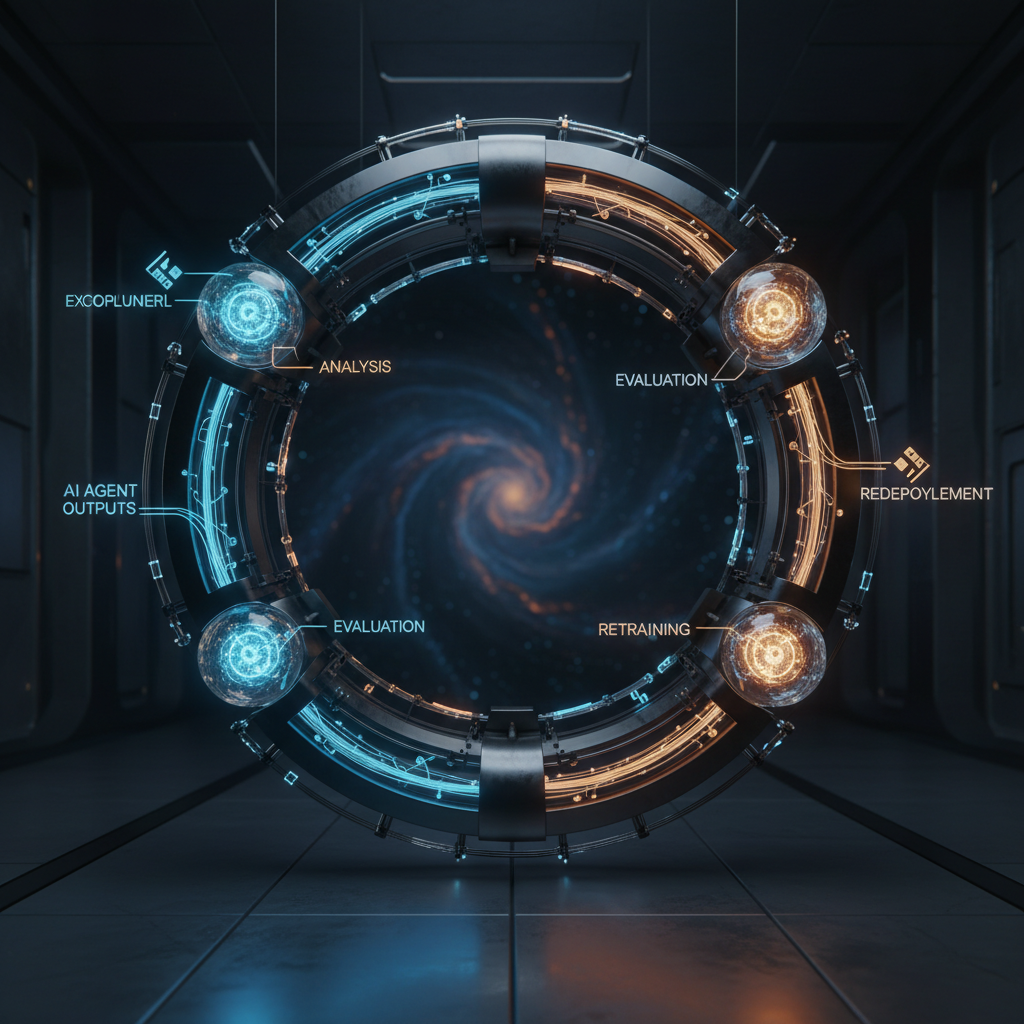

# Output: side-by-side comparison with statistical significanceThe Optimization Loop

Put it all together and you get the optimization loop — a continuous cycle that makes agents better over time:

- Deploy — Ship the agent with a versioned configuration

- Monitor — Track performance in production (hours saved, error rates, tool success)

- Evaluate — Run structured tests against new models and configurations

- Optimize — Improve prompt, model, or parameters based on evidence

- Deploy again — Close the loop

I stopped caring about a "perfect" day-one agent. I cared about whether day ninety was visibly better than day one.

The loop speed matters. If a full optimization cycle takes a week, you improve monthly. If it takes an hour, you improve daily. The infrastructure you build around monitoring and evaluation directly determines how fast your agents can learn.

Academic Context: What Research Says

The ideas behind agent monitoring and self-optimization are not just engineering intuitions — there is a growing body of research that supports and extends them.

AgentMonitor: Proactive Monitoring for Multi-Agent Systems

Chan et al. (2024) introduced AgentMonitor, a plug-and-play framework for monitoring multi-agent systems (arxiv.org/abs/2408.14972). The key contribution is the concept of personal agent scores and collective agent scores: the idea that you need to monitor both individual agent performance and how agents perform as a team.

Their framework proactively detects anomalous agent behavior before it causes downstream failures. This is a step beyond reactive monitoring (catching errors after they happen) toward predictive monitoring (catching problems before users notice them).

Monitoring Teams of AI Agents

Research published in the Journal of AI Research (2025) examines the problem of optimal team size and incentive design for teams of AI agents. The findings suggest that larger teams of agents are not always better — there is a sweet spot where adding more agents actually degrades collective performance due to coordination overhead.

This has practical implications for anyone building multi-agent systems. More agents is not the answer. Better-monitored, better-optimized agents are.

Self-Optimizing Agents

Research from teams working on LLM observability platforms (including work from Comet/Opik) has explored the concept of self-optimizing agents — agents that can systematically improve their own performance through three levers:

- Prompt optimization: Iteratively refining the system prompt based on evaluation results

- Tool optimization: Adjusting which tools are available and how they are described

- Model parameter optimization: Tuning temperature, sampling parameters, and context window usage

The idea I keep coming back to from all this work is simple: you cannot optimize what you do not measure well. Once the evaluation layer is solid, the path toward optimization stops feeling magical and starts feeling mechanical.

Key Takeaways

- Agent monitoring is not traditional APM. You need four layers: operational, task, background job, and real-time. Missing any one of them leaves a blind spot.

- Hours saved is your north star metric. Token usage, latency, and accuracy all matter — but they are means to an end. The end is measurable business impact.

- Treat model selection as a competition. Build an evaluation arena, run structured tests, and let the data tell you which model works best for each use case.

- Version everything. Prompt text, model selection, parameters, tool configs — all of it. If you cannot revert to last Tuesday's configuration in thirty seconds, your versioning is not good enough.

- Optimization is a loop, not a one-time event. Deploy, monitor, evaluate, improve, repeat. The speed of this loop determines how fast your agents get better.

- Research backs this up. Proactive monitoring, team-level scoring, and systematic prompt optimization are all active areas of academic research — and they are all practical today.

The teams that win at AI agents will not be the ones with the best models. They will be the ones with the best feedback loops.

Implementation Checklist

If you are building agent monitoring next week, start here:

- [ ] Add correlation IDs to every agent interaction (conversation_id, task_id, tool_call_id)

- [ ] Track tool success rates by tool name, not just aggregate error rates

- [ ] Implement prompt version snapshots — every config change creates an immutable version

- [ ] Build a task execution dashboard showing status, duration, and retry patterns

- [ ] Set up alerts on the failure taxonomy: hallucinated calls, loops, context loss

- [ ] Measure hours saved per agent per week — this is your north star

- [ ] Create a regression gate checklist for prompt changes before rollout

This is Part 2 of the AI Agent Systems series.

- Part 1: Autohive — The AI Hub of Agents

- Part 3: How to Build an LLM Evaluation System

- Part 4: The Human Side of Agentic Systems

- Part 5: My Experience as an AI Agent Developer Intern

- Part 6: Building Multi-Agent Creative Systems
