A Smart Desk That Knows What You Put On It

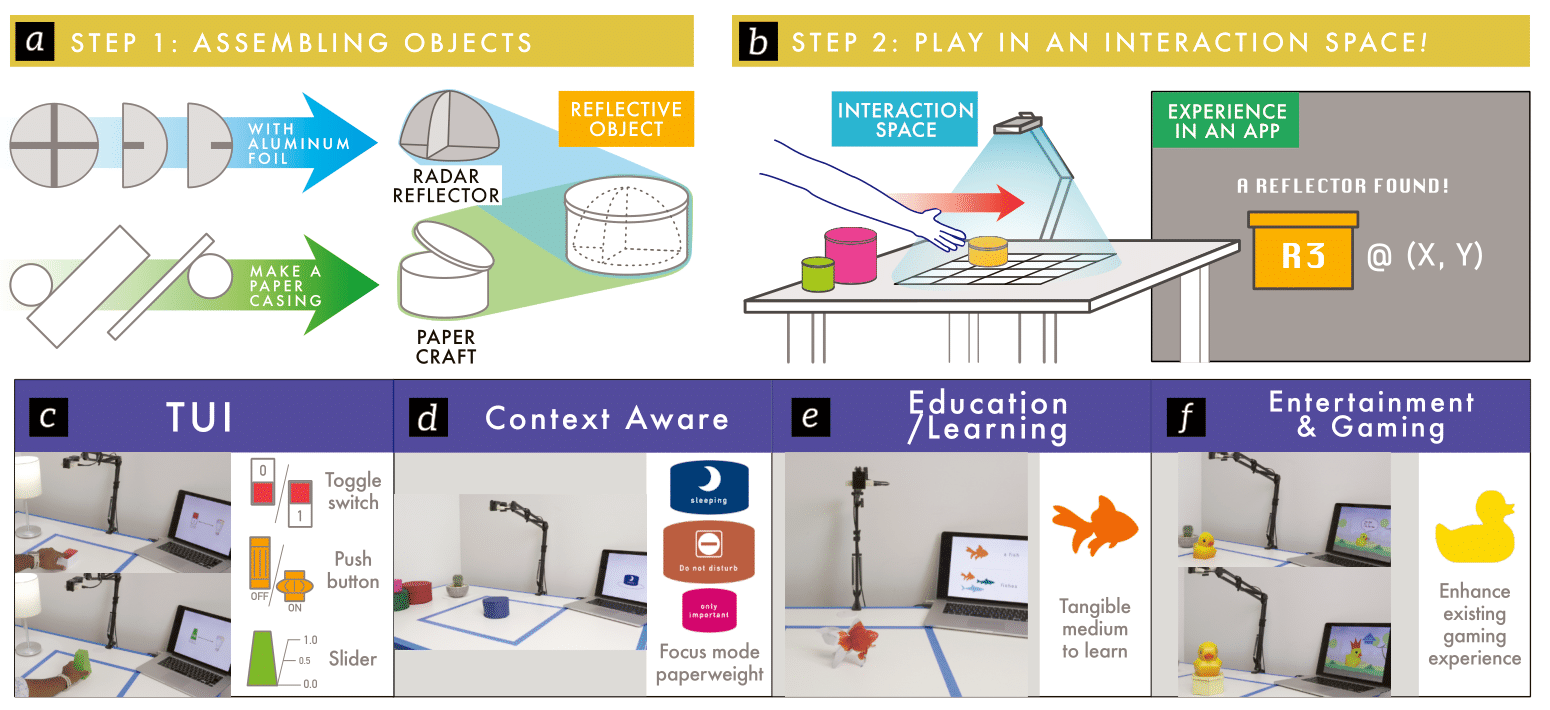

In simple terms: RaITIn uses radar (like the collision-detection sensors in your car) to identify objects on a table. Instead of cameras that watch you or barcodes you have to scan, we embed tiny, cheap radar reflectors into objects. The desk "sees" what you place on it and can respond: your phone could know you're about to take notes when you put your notebook down, or your music could change when you pick up your coffee mug.

🎯 Key Takeaways

- >95% accuracy identifying objects in real-time using low-cost radar

- Stackable IDs - combining objects creates new unique identifiers for more interaction possibilities

- Privacy-preserving - radar doesn't capture images, just shapes and reflections

- Cheap and scalable - radar reflectors cost pennies and require no power

- Part of Google ATAP collaboration - building on Project Soli miniature radar technology

Rethinking Radar for Everyday Objects

Radar is primarily used for large-scale applications such as tracking aircraft, ships, and weather systems. But what if we could use the same technology for something much more intimate?

What if radar could tell you which coffee mug is yours among identical-looking ones? What if it could detect not just that something is on your desk, but what that something is?

This question led to RaITIn, my first publication in radar-based interaction and the foundation for much of my later work.

How RaITIn Works

RaITIn uses Frequency Modulated Continuous Wave (FMCW) radar, the same technology found in car collision detection systems, combined with low-cost radar reflectors embedded in everyday objects.

The Key Components

1. Radar Reflectors

Small, passive metal elements that create unique radar signatures. Think of them as "radar barcodes" that:

- Cost just pennies each

- Require no power (completely passive)

- Can be hidden inside objects (invisible to users)

- Work through non-metallic materials

2. Signal Processing Pipeline

Custom algorithms distinguish objects based on their reflection patterns:

- Range profile (how far away?)

- Velocity information (is it moving?)

- Signal strength profile (how much energy comes back?)
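To make those three cues concrete, here is a minimal sketch of how a single radar frame could be turned into a feature vector. It is not the RaITIn pipeline itself: the frame layout (chirps by samples, complex-valued), the Hann window, and the function names `range_doppler_map` and `extract_features` are all assumptions chosen for illustration.

```python
import numpy as np


def range_doppler_map(frame: np.ndarray) -> np.ndarray:
    """Return the magnitude range-Doppler map of one frame.

    Assumed layout: frame has shape (num_chirps, samples_per_chirp).
    """
    window = np.hanning(frame.shape[1])
    # FFT along each chirp (fast time) separates reflections by range.
    range_fft = np.fft.fft(frame * window, axis=1)
    # FFT across chirps (slow time) separates reflections by velocity.
    doppler_fft = np.fft.fft(range_fft, axis=0)
    # Shift so that zero velocity sits in the middle of the Doppler axis.
    return np.abs(np.fft.fftshift(doppler_fft, axes=0))


def extract_features(frame: np.ndarray) -> np.ndarray:
    """Concatenate range profile, Doppler profile, and total energy."""
    rd = range_doppler_map(frame)
    range_profile = rd.sum(axis=0)      # how far away?
    doppler_profile = rd.sum(axis=1)    # is it moving?
    total_energy = float((rd ** 2).sum())  # how much energy comes back?
    return np.concatenate([range_profile, doppler_profile, [total_energy]])


# Synthetic frame: one reflector in range bin 10 and Doppler bin +3.
num_chirps, num_samples = 16, 64
chirp_idx = np.arange(num_chirps)[:, None]
sample_idx = np.arange(num_samples)[None, :]
frame = np.exp(2j * np.pi * (10 * sample_idx / num_samples
                             + 3 * chirp_idx / num_chirps))

features = extract_features(frame)
peak_range_bin = int(np.argmax(features[:num_samples]))
print("feature vector length:", features.shape[0])
print("strongest range bin:", peak_range_bin)
```

A real reflector would light up several bins at once, and it is that overall pattern, not a single peak, that a classifier would learn from.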

3. Machine Learning Classification

An SVM (Support Vector Machine) classifier learns to map radar signatures to object identities. Once trained, it can identify objects in real time with >95% accuracy.

The Stackable IDs Innovation

One of our most exciting contributions is Stackable IDs. When you stack or combine multiple objects, their radar signatures combine in predictable ways. This means:

- Stacking a book on a tablet creates a different signature than either alone

- Combining tools can trigger context-specific actions

- Layering objects exponentially increases interaction possibilities

A simple example: placing your coffee mug on your coaster triggers "relaxation mode." But placing your notebook on your tablet triggers "work mode." The combinations create a rich vocabulary from simple physical actions.
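On the software side, the mug-and-coaster example can be sketched as a lookup from the set of recognized objects to an action. This is only an illustration: it assumes the classifier hands back a collection of object labels, it ignores stacking order, and the `ACTIONS` table and function names are hypothetical.

```python
from typing import Iterable, Optional

# Hypothetical table: which combination of objects triggers which mode.
ACTIONS = {
    frozenset({"mug", "coaster"}): "relaxation mode",
    frozenset({"notebook", "tablet"}): "work mode",
    frozenset({"notebook"}): "open note-taking app",
}


def action_for(detected: Iterable[str]) -> Optional[str]:
    """Look up the action for the objects currently on the desk."""
    # frozenset makes the lookup independent of detection order.
    return ACTIONS.get(frozenset(detected))


def count_combinations(num_objects: int) -> int:
    """Upper bound on distinct non-empty stacks of num_objects objects."""
    return 2 ** num_objects - 1


print(action_for(["coaster", "mug"]))
print(count_combinations(4))
```

The count is an upper bound: it assumes every combination can physically be stacked and that each one produces a distinguishable signature.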

Why Not Just Use Cameras?

It's a fair question. Computer vision has made incredible advances, and cameras are cheap. Why use radar?

Privacy

Cameras capture identifiable images. A smart desk with cameras is always watching you. Radar doesn't capture images: it sees shapes and reflections, but not faces, text, or details. For a system that's always on, this matters.

Reliability

Cameras struggle with:

- Identical-looking objects (which mug is mine?)

- Poor lighting

- Objects that occlude each other

Radar identifies objects by their embedded reflectors, not their appearance. Two identical mugs can have different radar signatures.

No Line-of-Sight Required

Radar works through non-metallic materials. The reflectors can be embedded inside objects, completely invisible to users.

Real-World Applications

RaITIn enables scenarios that cameras struggle with:

Smart Workspaces

Your desk recognizes your tools and adapts:

- Place your notebook → your note-taking app opens

- Set down your tablet → relevant documents appear on screen

- Stack your books → your calendar clears for "deep work" mode

Accessible Interfaces

For users with visual impairments:

- Objects announce themselves when placed on the desk

- Audio feedback confirms which object you're holding

- No need to scan barcodes or interact with screens

Creative Tools

Physical objects become programmable inputs:

- Placing colored tokens adjusts parameters in creative software

- Moving tangible markers controls visualizations

- Physical prototypes communicate their identity to digital systems

Gaming and Play

Tangible game pieces with digital capabilities:

- Board game pieces that track their position automatically

- Card games where physical cards have digital effects

- Tabletop RPGs with automatic rule enforcement

The Technical Deep Dive

For those interested in the engineering:

FMCW Radar Basics

FMCW radar continuously transmits a signal that sweeps through frequencies (a "chirp"). When this signal reflects off objects, comparing the echo with the transmitted signal (its frequency offset, phase, and amplitude) tells us:

- Distance: How far away is the object?

- Velocity: Is it moving toward or away from us?

- Reflection strength: How much energy bounces back?
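For readers who like numbers, the standard textbook FMCW relations behind the first two bullets can be written in a few lines. The parameter values below (a 60 GHz carrier, 5 GHz of sweep bandwidth, 100 µs chirps) are illustrative assumptions, not the configuration we used, and the sign convention for velocity is a choice made for this sketch.

```python
import math

C = 299_792_458.0  # speed of light in m/s


def range_from_beat(beat_hz: float, bandwidth_hz: float, chirp_s: float) -> float:
    """Distance from the beat frequency between transmitted and received chirp."""
    slope = bandwidth_hz / chirp_s  # sweep rate in Hz per second
    return C * beat_hz / (2.0 * slope)


def range_resolution(bandwidth_hz: float) -> float:
    """Smallest separation in range that two reflections can have."""
    return C / (2.0 * bandwidth_hz)


def velocity_from_phase(delta_phase_rad: float, carrier_hz: float,
                        chirp_interval_s: float) -> float:
    """Radial velocity from the phase change between consecutive chirps."""
    wavelength = C / carrier_hz
    return wavelength * delta_phase_rad / (4.0 * math.pi * chirp_interval_s)


# Illustrative (assumed) parameters.
bandwidth, chirp_time, carrier = 5e9, 1e-4, 60e9

# With 5 GHz of bandwidth the range resolution is about 3 cm.
print(f"range resolution: {range_resolution(bandwidth) * 100:.1f} cm")

# Round trip: the beat frequency produced by an object 30 cm away.
beat = 2 * 0.3 * (bandwidth / chirp_time) / C
print(f"recovered range: {range_from_beat(beat, bandwidth, chirp_time):.3f} m")
```

Wider bandwidth gives finer range resolution, which is part of why millimeter-wave radar is a good fit for desk-scale sensing.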

Reflector Design

Our radar reflectors are carefully designed metal shapes that create distinctive reflection patterns. Key design considerations:

- Size: Large enough to produce strong reflections, small enough to embed in objects

- Shape: Different shapes create different signatures (like fingerprints)

- Orientation: Reflectors work across a range of angles

Classification Pipeline

- Data Collection: Record radar signals with objects at various positions

- Feature Extraction: Compute signal features (range, Doppler, intensity)

- Training: SVM classifier learns object signatures from labeled data

- Real-time Classification: New signals are classified in under 100 ms
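The steps above can be sketched with scikit-learn. Everything here is a stand-in: the feature vectors are synthetic Gaussian clusters rather than radar recordings, and the RBF kernel, feature scaling, and object labels are assumptions, since this post does not specify them. The accuracy printed says nothing about real radar data.

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

rng = np.random.default_rng(0)
labels = ["mug", "notebook", "tablet"]  # hypothetical object classes

# Data collection (simulated): 60 noisy 8-dimensional samples per object.
centers = np.eye(len(labels), 8) * 6.0
X = np.vstack([c + rng.normal(scale=0.5, size=(60, 8)) for c in centers])
y = np.repeat(labels, 60)

# Hold out a quarter of the labeled data for evaluation.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# Training: scale the features, then fit an SVM (kernel choice is assumed).
clf = make_pipeline(StandardScaler(), SVC(kernel="rbf", C=1.0, gamma="scale"))
clf.fit(X_train, y_train)

# Classification of unseen feature vectors.
accuracy = clf.score(X_test, y_test)
prediction = clf.predict(X_test[:1])[0]
print(f"held-out accuracy on synthetic data: {accuracy:.2f}")
print("first prediction:", prediction)
```

In a live system, the `predict` call would run on each incoming frame's features, and that step is what has to fit within the latency budget.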

This Was Just the Beginning

RaITIn was my first publication at CHI (the premier HCI conference) and my introduction to radar-based interaction. More importantly, it laid the foundation for everything that followed.

The work directly led to:

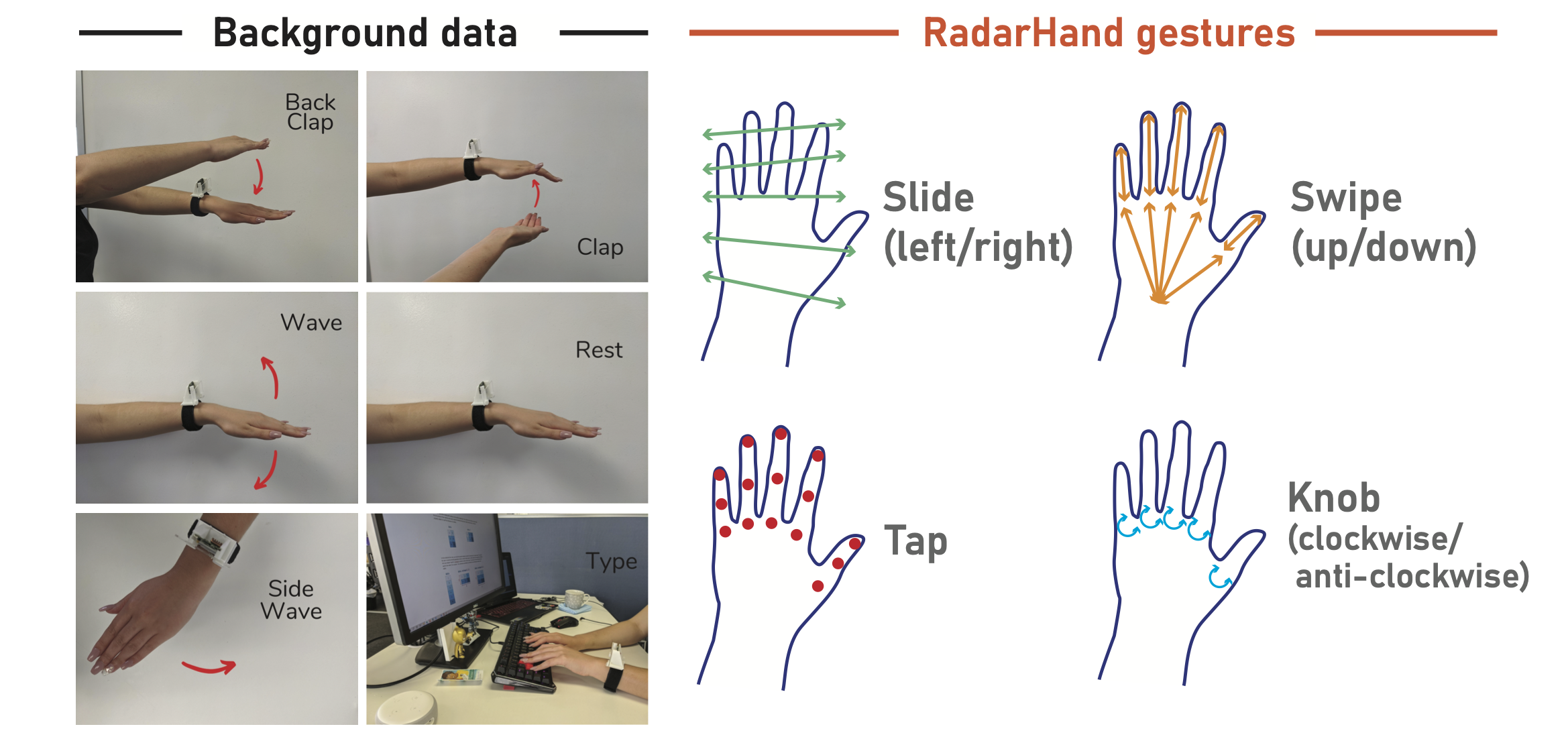

- RadarHand: Applying similar techniques to gesture recognition on the body

- VRTwitch: Using radar to detect micro-gestures in VR

- Fitts' Law study: Understanding how body awareness affects radar-detected gestures

The core insight, that radar can enable new interaction modalities while preserving privacy, continues to drive my research.

📚 Personal Reflections: What I Learned

Technology Should Be Invisible

RaITIn works best when you forget it's there. The magic isn't in the radar; it's in the seamless connection between physical objects and digital responses. This taught me that the best technology disappears into the experience.

Simple Ideas Can Be Powerful

The core idea of RaITIn is simple: put radar reflectors in objects, use radar to identify them. But simple ideas, executed well, can enable entirely new ways of interacting with computers.

Industry Collaboration Accelerates Research

Working with Google ATAP gave us access to state-of-the-art miniature radar hardware (from Project Soli) and engineering expertise. Academic research combined with industry resources can achieve things neither could alone.

Foundations Matter

RaITIn wasn't my most cited or most impactful paper. But it was foundational. The technical skills, research intuitions, and collaboration networks I developed here enabled everything that came after.

Sometimes the most important projects aren't the flashiest; they're the ones that prepare you for what's next.

The Legacy

RaITIn introduced me to a research direction that has defined my career. Radar-based interaction. Privacy-preserving sensing. Tangible interfaces. Natural interaction modalities.

The questions I first asked in this project (How can sensing work with human behavior? How can we preserve privacy while enabling rich interaction? How can physical and digital worlds seamlessly connect?) are questions I'm still pursuing.

And it all started with asking: what if we made radar smaller?