C-A2Meet: The CHI Poster That Asked Why Meeting Interfaces Stay So Rigid

By Tamil Selvan Gunasekaran

C-A2Meet came out of a very specific irritation I had with the current wave of AI meeting tools. Platforms kept adding assistants, summaries, recaps, and suggestions, but the interface itself still felt rigid, managerial, and strangely indifferent to how meetings actually unfold.

That contradiction stayed with me.

People do not stay in one role during a meeting. They join late. They get distracted. They suddenly become the facilitator. They want private support without announcing to everyone that they are lost. But most tools still assume a fixed layout, a fixed flow, and a fixed way of receiving help.

This CHI 2026 poster grew out of that discomfort more than anything else.

The Thought I Kept Coming Back To

I kept asking a question that became embarrassingly obvious once I said it aloud:

Why does the meeting keep changing while the interface stays still?

That question reframed the whole project for me.

Most AI meeting tools are built like add-ons:

- a sidebar for summaries

- a panel for action items

- a transcript tab

- a little assistant icon somewhere in the corner

Useful, sometimes. But still rigid, and sometimes socially clumsy.

What I wanted instead was a system where AI-generated components behaved more like living interface material. Something you could pull closer when you need it, hide when you do not, and reshape based on the role you are performing right now, not the role a product manager assumed before the meeting started.

That is really the heart of the project: malleability. Not endless customization buried in settings menus, but interfaces that can be reshaped directly at the point of use.

What C-A2Meet Proposes

C-A2Meet is a malleable, role-aware AI meeting interface.

That means the AI does not just produce content. It produces interface surfaces that can adapt to context and can still be manipulated by the user.

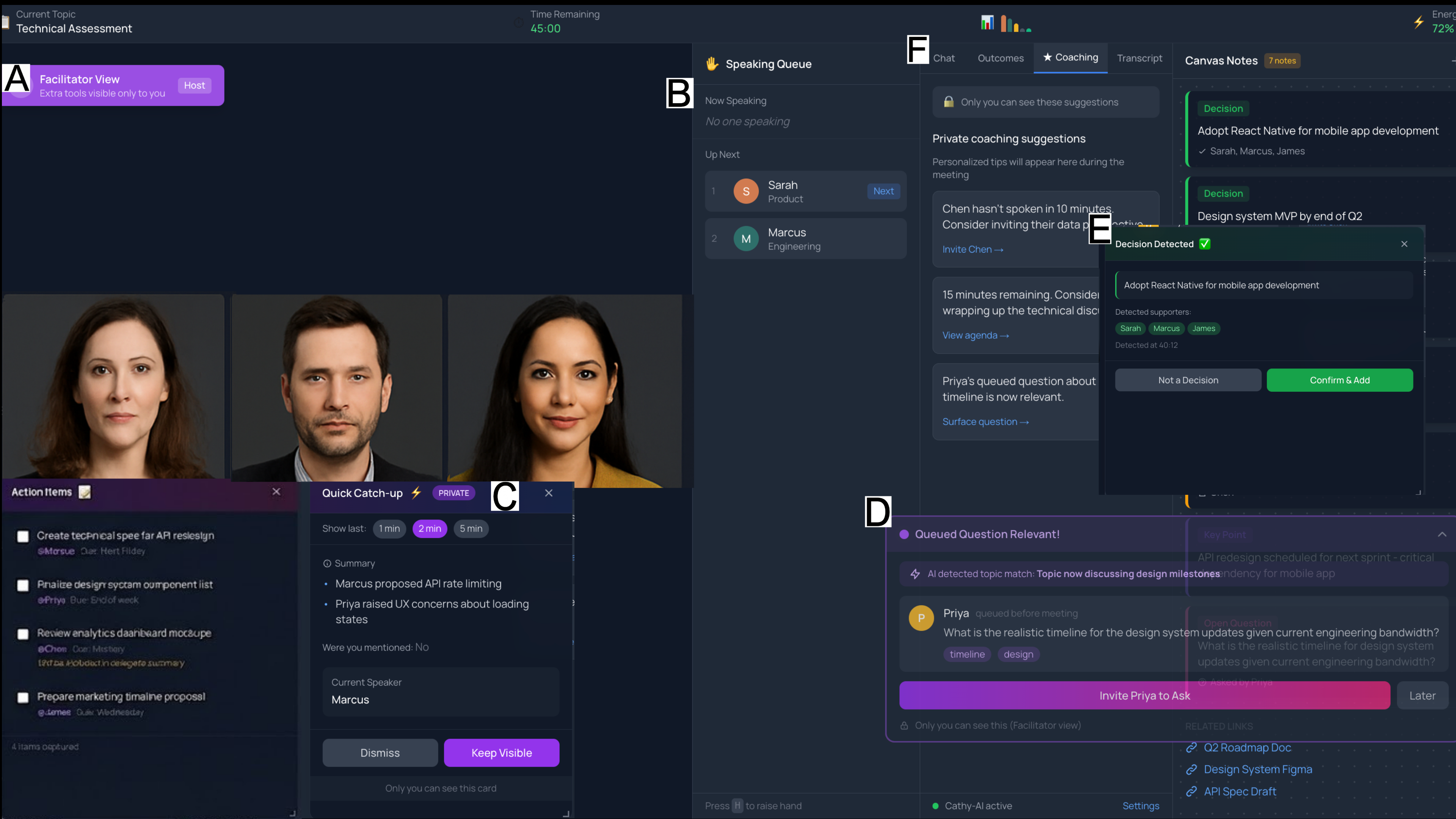

The core pieces in the prototype are:

- Focus Anchors for glanceable awareness like time remaining, topic, and speaking queue

- Catch-Up Cards for private, low-friction context recovery

- Async Contribution Channels so ideas raised outside the live moment can surface when they become relevant

- Role-aware views so facilitators, participants, and presenters are not forced into the same interface

What I like about this framing is that it treats AI as part of the interface grammar, not just a service layer sitting behind a chatbot window. That felt like a more honest way of designing for meetings.

In the actual prototype, these surfaces could be dragged, rearranged, and persisted. Some were private to the user. Some were facilitator-only. Some were shared. That distinction was essential because not every helpful intervention should be public.
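To make the private / facilitator-only / shared distinction concrete, here is a minimal sketch of how such surfaces could be modeled. It is not the prototype's code: the names `Surface`, `Visibility`, `Role`, `visible_surfaces`, `save_layout`, and `load_layout`, and the JSON layout format, are all assumptions made for illustration.

```python
import json
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Role(Enum):
    FACILITATOR = "facilitator"
    PARTICIPANT = "participant"
    PRESENTER = "presenter"


class Visibility(Enum):
    PRIVATE = "private"                    # only the owning user sees it
    FACILITATOR_ONLY = "facilitator_only"  # only whoever is facilitating now
    SHARED = "shared"                      # everyone in the meeting


@dataclass
class Surface:
    """Hypothetical AI-generated interface surface with a screen position."""
    surface_id: str
    kind: str                     # e.g. "focus_anchor", "catch_up_card"
    visibility: Visibility
    owner: Optional[str] = None   # set for private surfaces
    x: int = 0
    y: int = 0


def visible_surfaces(surfaces, user, role):
    """Return the surfaces this user may see in their current role."""
    result = []
    for s in surfaces:
        if s.visibility is Visibility.SHARED:
            result.append(s)
        elif s.visibility is Visibility.FACILITATOR_ONLY and role is Role.FACILITATOR:
            result.append(s)
        elif s.visibility is Visibility.PRIVATE and s.owner == user:
            result.append(s)
    return result


def save_layout(surfaces):
    """Persist positions so a rearranged layout survives a reload."""
    return json.dumps({s.surface_id: [s.x, s.y] for s in surfaces}, sort_keys=True)


def load_layout(surfaces, blob):
    """Restore saved positions onto matching surfaces."""
    positions = json.loads(blob)
    for s in surfaces:
        if s.surface_id in positions:
            s.x, s.y = positions[s.surface_id]


surfaces = [
    Surface("timer", "focus_anchor", Visibility.SHARED),
    Surface("queue-controls", "speaking_queue", Visibility.FACILITATOR_ONLY),
    Surface("catchup-ana", "catch_up_card", Visibility.PRIVATE, owner="ana"),
]

# The same user sees a different set of surfaces when their role shifts.
as_participant = [s.surface_id for s in visible_surfaces(surfaces, "ana", Role.PARTICIPANT)]
as_facilitator = [s.surface_id for s in visible_surfaces(surfaces, "ana", Role.FACILITATOR)]
```

In this sketch the role is passed in at lookup time rather than stored on the user, so a mid-meeting role change needs no migration of state. Another participant never sees `catchup-ana`, whatever their role.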

The Human Problem Underneath It

The poster is about interface design, but the actual problem underneath it is emotional.

There is a quiet social cost to being lost in a meeting, and I do not think product design takes that seriously enough.

People often do not ask:

- "What did I miss?"

- "Can you repeat the last two minutes?"

- "I zoned out, can someone rewind the discussion?"

Not because they do not need help, but because asking publicly can feel awkward, incompetent, or disruptive.

That is why privacy became central in this work. I did not want AI meeting support to become another way of exposing vulnerability. I wanted it to create stigma-free recovery paths.

The catch-up card idea came from that instinct. If the system can privately help someone recover context, then the tool is not just efficient. It is humane. That distinction matters to me.

That instinct was strongly reinforced by the formative study. We worked with 14 professionals across facilitation, coaching, and UX-related roles, and a lot of what they described was not about flashy AI features. It was about awkwardness, attention drift, privacy, and the invisible work of staying oriented in meetings.

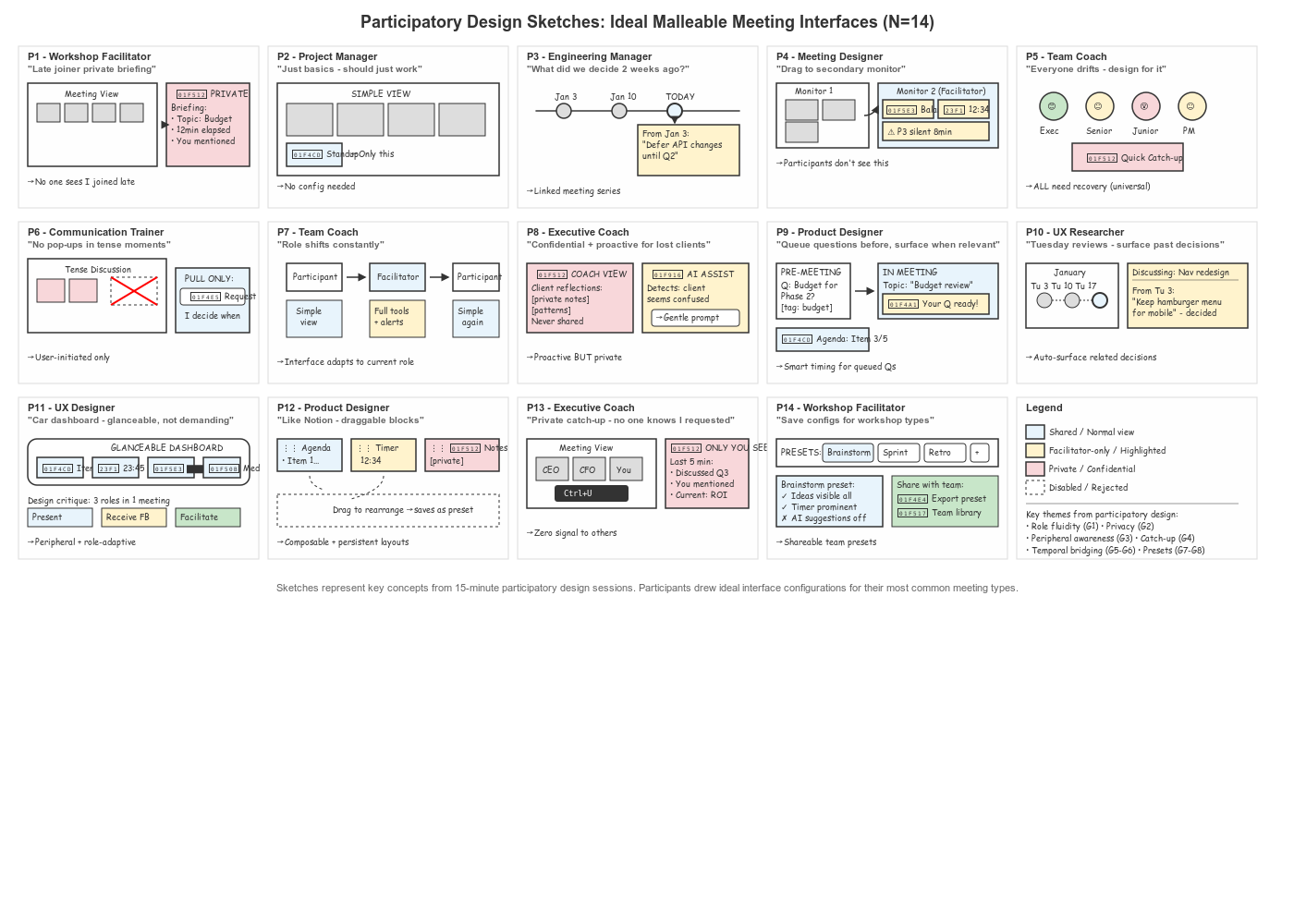

The Participatory Sketches Were a Big Clue

One of the most revealing parts of this project was seeing how differently people imagined their ideal meeting support.

Some wanted a quiet dashboard. Some wanted stronger facilitator controls. Some wanted pull-only help. Others wanted AI to surface relevant questions or past decisions at exactly the right moment.

That finding pushed me further away from the idea that there is a single best AI meeting interface.

Instead, the more honest design stance seemed to be:

let the system generate useful components, but let people decide how those components live on the screen.

This is where the project started to feel less like a feature list and more like a broader interaction design argument about agency.

Some of the concrete findings were especially striking:

- 50% described mid-meeting role transitions, which led to the concept of role fluidity

- 86% emphasized the importance of privacy boundaries

- 64% described needing in-meeting context recovery after attention gaps

- 43% described needing continuity across recurring meeting series, which became the idea of cross-meeting memory

Those numbers helped clarify that the problem was not just meeting assistance. It was the mismatch between static interfaces and fluid collaborative reality.
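For readers who prefer counts, the percentages can be translated back into participant numbers. This is a back-of-the-envelope check, assuming each percentage is a share of all 14 formative-study participants (the post does not state the denominator for each finding); the labels and the helper `implied_counts` are illustrative.

```python
PARTICIPANTS = 14  # size of the formative study

# Percentages as reported in the list above.
reported = {
    "role transitions": 50,
    "privacy boundaries": 86,
    "context recovery": 64,
    "cross-meeting continuity": 43,
}


def implied_counts(percent, n=PARTICIPANTS):
    """Return every whole-number count out of n that rounds to the given percent."""
    return [k for k in range(n + 1) if round(100 * k / n) == percent]


implied = {label: implied_counts(p) for label, p in reported.items()}
for label, counts in implied.items():
    print(f"{label}: {counts} of {PARTICIPANTS}")
```

Under that assumption, each percentage matches exactly one count: 7, 12, 9, and 6 of 14 respectively.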

What the Poster Contributes

Because this is a CHI poster, the contribution is intentionally formative. It is not pretending to be the final answer, and I actually like that about it.

The poster contributes three things that feel important to me:

- A vocabulary for role fluidity

People are not permanently "host" or "participant" in any meaningful cognitive sense. Their role shifts throughout the meeting.

- A stronger framing for private support

Some of the most valuable AI help in meetings should be invisible to everyone except the person who needs it.

- A case for malleability

If AI can generate interface surfaces, the interface should remain shapeable at the point of use rather than frozen into a static design.

The project also distilled these observations into design guidelines, which I see as an opening for future systems rather than a closed recipe.

At a higher level, the eight guidelines in the paper push toward a few core commitments:

- interfaces should adapt when roles shift

- private recovery should be available without stigma

- meeting support should span sessions rather than treating every meeting as isolated

- AI help should support both push and pull interaction modes

- users should be able to recompose the interface rather than passively accept one layout
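The push and pull commitment is the easiest of these to illustrate in code. The sketch below is one possible reading, not the paper's design: a hypothetical `DeliveryPolicy` lets each user choose, per component, whether AI suggestions appear on their own (push) or wait quietly until requested (pull). All names here are assumptions.

```python
from dataclasses import dataclass


@dataclass
class Suggestion:
    component: str  # e.g. "catch_up_card"
    text: str


class DeliveryPolicy:
    """Hypothetical per-user policy deciding when AI suggestions appear."""

    def __init__(self, default_mode="pull"):
        self.default_mode = default_mode
        self.modes = {}    # component -> "push" or "pull"
        self.pending = {}  # component -> suggestions waiting to be pulled

    def set_mode(self, component, mode):
        if mode not in ("push", "pull"):
            raise ValueError(f"unknown mode: {mode}")
        self.modes[component] = mode

    def offer(self, suggestion):
        """Return the suggestion if it should be shown now, else hold it and return None."""
        mode = self.modes.get(suggestion.component, self.default_mode)
        if mode == "push":
            return suggestion
        self.pending.setdefault(suggestion.component, []).append(suggestion)
        return None

    def pull(self, component):
        """Hand over everything held for a component and clear the queue."""
        return self.pending.pop(component, [])


policy = DeliveryPolicy()
policy.set_mode("focus_anchor", "push")

shown = policy.offer(Suggestion("focus_anchor", "5 minutes left on this topic"))
held = policy.offer(Suggestion("catch_up_card", "Decision: ship the beta on Friday"))
caught_up = policy.pull("catch_up_card")
```

Defaulting to pull is a deliberate choice in this sketch: nothing interrupts the user unless they have opted in for that component.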

What I Personally Like About This Work

This poster captures a research instinct I trust.

I am skeptical of AI systems that act as if every collaborative problem can be solved by better summarization or more automation. Meetings are messy because people are messy. Good support often comes from tiny things:

- a reminder placed in the periphery

- a private catch-up moment

- a surfaced question at the right time

- a layout that changes with the rhythm of the room

Those details are easy to dismiss as interface polish, but I think they are where a lot of meaningful human-AI design lives.

What I am proud of here is not that the system looks futuristic. It is that it takes something ordinary and under-designed, the meeting interface itself, and asks whether it deserves to be more responsive to human reality.

Why This CHI Acceptance Matters to Me

I am proud of this acceptance because it gives shape to a design space I genuinely want to keep exploring: AI-generated interfaces that stay under human control while adapting to real social context.

It also reflects something personal about how I want to work as a researcher. I do not want to build AI systems that only look impressive in demos. I want to build systems that notice the fragile parts of everyday collaboration and treat them with care.

That is why I am happy this poster got into CHI. Not because it is one more acceptance to collect, but because it points toward the kind of research voice I want to keep developing.

Selected Academic References

Gunasekaran, T. S., Feng, Y., Bai, H., Pai, Y. S., & Billinghurst, M. (2026). C-A2Meet: Malleable, role-aware AI interfaces for video conferencing. In Extended Abstracts of the 2026 CHI Conference on Human Factors in Computing Systems. Association for Computing Machinery. https://doi.org/10.1145/3772363.3798532

Cao, Y., Jiang, P., & Xia, H. (2025). Generative and malleable user interfaces with generative and evolving task-driven data model. In Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems. Association for Computing Machinery. https://doi.org/10.1145/3706598.3713285

Mackay, W. E. (1990). Users and customizable software: A co-adaptive phenomenon. In Proceedings of the 1990 ACM Conference on Computer-Supported Cooperative Work (pp. 217-228). Association for Computing Machinery. https://doi.org/10.1145/99332.99353

Amershi, S., Weld, D., Vorvoreanu, M., Fourney, A., Nushi, B., Collisson, P., Suh, J., Iqbal, S., Bennett, P. N., Inkpen, K., Teevan, J., Kikin-Gil, R., & Horvitz, E. (2019). Guidelines for human-AI interaction. In Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems. Association for Computing Machinery. https://doi.org/10.1145/3290605.3300233

Houtti, M., Zhou, M., Terveen, L., & Chancellor, S. (2025). Observe, ask, intervene: Designing AI agents for more inclusive meetings. In Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems. Association for Computing Machinery. https://doi.org/10.1145/3706598.3713838

Lee, G., Yang, Y., Healey, J., & Manocha, D. (2025). Since U Been Gone: Augmenting context-aware transcriptions for re-engaging in immersive VR meetings. In Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems. Association for Computing Machinery. https://doi.org/10.1145/3706598.3714078

Chen, X., Yap, N., Lu, X., Gunal, A., & Wang, X. (2025). MeetMap: Real-time collaborative dialogue mapping with LLMs in online meetings. Proceedings of the ACM on Human-Computer Interaction, 9, 1-35. https://doi.org/10.1145/3711030