Building VR That Actually Responds to Your Emotions

In simple terms: CAEVR is a VR system that reads your body's signals (heart rate, skin conductance, brain activity) to estimate how you're feeling, then changes the experience in real time to match. If you're getting too stressed, it eases up. If you're engaged, it deepens the experience. It's VR that truly listens to you.

🎯 Key Takeaways

- 78% accuracy in detecting emotional states from biosignals in real time

- Significantly increased positive emotions compared to non-adaptive VR experiences

- Higher empathy scores - users reported feeling more connected to virtual characters

- Real-world applications - particularly promising for healthcare training and therapy

The Insight That Changed Everything

Virtual Reality has achieved remarkable visual fidelity. Modern headsets can render photorealistic environments at smooth frame rates. But I kept noticing something was missing.

Two people could have the exact same VR experience, seeing the same visuals and hearing the same audio, yet come away with completely different emotional responses. One person might be deeply moved; another might be bored or overwhelmed.

The problem? VR treats everyone the same. It doesn't know, or care, how you're actually feeling.

Working with Kunal Gupta and our research team, we asked a simple question: What if VR could adapt to your emotional state in real time?

That question became CAEVR.

How CAEVR Works

CAEVR (Context-Aware Empathy in VR) continuously monitors several physiological signals:

What We Measure

- Heart rate and heart rate variability (HRV) - indicators of emotional arousal and stress

- Skin conductance (EDA/GSR) - reflects engagement and emotional intensity

- EEG signals - 14 channels capturing cognitive state and emotional processing

- Movement patterns - body language in virtual space
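To make the HRV bullet concrete, here is a minimal sketch of three standard time-domain HRV metrics (mean heart rate, SDNN, RMSSD) computed from beat-to-beat RR intervals. The function name `hrv_features` and the sample values are illustrative assumptions, not CAEVR's actual code or data.

```python
import math
from statistics import mean, stdev


def hrv_features(rr_ms):
    """Basic time-domain HRV features from RR intervals given in milliseconds."""
    if len(rr_ms) < 3:
        raise ValueError("need at least three RR intervals")
    # Differences between successive beats drive RMSSD.
    diffs = [b - a for a, b in zip(rr_ms, rr_ms[1:])]
    return {
        "mean_hr_bpm": 60000.0 / mean(rr_ms),               # beats per minute
        "sdnn_ms": stdev(rr_ms),                            # overall variability
        "rmssd_ms": math.sqrt(mean(d * d for d in diffs)),  # short-term variability
    }


# Hypothetical RR intervals, for illustration only.
features = hrv_features([800, 810, 790, 805, 795])
print(features)
```

Lower RMSSD over a window is commonly read as a sign of higher stress, though how CAEVR weights these metrics is not specified here.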

How We Process It

Our emotion recognition pipeline runs in real time:

- Signal Acquisition: Sensors capture raw physiological data

- Feature Extraction: We compute time-domain and frequency-domain features

- Emotion Classification: A deep learning model maps features to the valence-arousal emotional space

- Adaptive Response: The VR environment changes based on detected emotional state
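The feature extraction step can be sketched as follows. This is a simplified illustration, assuming a single-channel window of samples and a naive DFT; the band edges, the function names (`band_power`, `extract_features`), and the synthetic test signal are assumptions for the example. A production pipeline would more likely use an FFT library and windowed spectral estimates.

```python
import cmath
import math
from statistics import mean, pstdev


def band_power(samples, fs, low_hz, high_hz):
    """Mean spectral power of bins with low_hz <= f < high_hz (naive O(n^2) DFT)."""
    n = len(samples)
    offset = mean(samples)
    centered = [s - offset for s in samples]  # remove the DC component
    powers = []
    for k in range(1, n // 2 + 1):
        freq = k * fs / n
        if low_hz <= freq < high_hz:
            coeff = sum(x * cmath.exp(-2j * math.pi * k * t / n)
                        for t, x in enumerate(centered))
            powers.append(abs(coeff) ** 2 / n ** 2)
    return mean(powers) if powers else 0.0


def extract_features(window, fs):
    """Time-domain and frequency-domain features for one signal window."""
    return {
        "mean": mean(window),
        "std": pstdev(window),
        "alpha_power": band_power(window, fs, 8.0, 13.0),   # approximate alpha band
        "beta_power": band_power(window, fs, 13.0, 30.0),   # approximate beta band
    }


# Synthetic one-second window: a pure 10 Hz sine sampled at 128 Hz.
fs = 128
window = [math.sin(2 * math.pi * 10 * t / fs) for t in range(fs)]
features = extract_features(window, fs)
print(features)
```

For the synthetic 10 Hz signal, nearly all of the power lands in the alpha band, which is the kind of contrast a downstream classifier can use.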

What Changes in the Experience

When the system detects different emotional states, it can adjust:

- Lighting and atmosphere - warmer tones for calm, cooler for tension

- Pacing - slowing down when you're overwhelmed, maintaining engagement when you're in flow

- Narrative elements - adjusting how virtual characters respond to you

- Audio design - music, ambient sounds, and silence

The key is subtlety. You shouldn't notice the adaptation; you should just feel like the experience fits you.
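One common way to keep changes subtle is to move each environment parameter toward its target gradually rather than jumping to it. The sketch below is an assumption about how that could be done, not a description of CAEVR's implementation; `smooth_step`, `alpha`, and `max_delta` are hypothetical names and values.

```python
def smooth_step(current, target, alpha=0.1, max_delta=0.02):
    """Move `current` a small step toward `target`.

    alpha     -- fraction of the remaining gap to close per update
    max_delta -- hard cap on the change per update, so no single frame jumps
    """
    step = alpha * (target - current)
    step = max(-max_delta, min(max_delta, step))
    return current + step


# Example: lighting warmth drifting from 0.0 toward 1.0 over many updates.
warmth = 0.0
for _ in range(200):
    warmth = smooth_step(warmth, 1.0)
print(round(warmth, 3))
```

The cap limits how fast anything can change per update, while the proportional term makes the value settle smoothly instead of overshooting.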

Why This Matters for Empathy

Here's where it gets interesting. CAEVR isn't just about making VR more comfortable. It's about making it more transformative.

Empathy training is crucial in fields like healthcare, social work, and education. We need doctors who can understand patient fear. Social workers who can connect across difference. Teachers who can sense when students are struggling.

Traditional empathy training is hit-or-miss. You can't force someone to feel empathy. But you can create conditions where empathy emerges naturally.

CAEVR does this by:

- Meeting you where you are - adapting to your current emotional state rather than demanding you reach some ideal state

- Creating resonance - when the experience responds to you, you become more open to it

- Preventing overwhelm - emotional overload shuts down empathy; CAEVR keeps you in the zone where growth happens

Imagine training medical students with VR patient scenarios that intensify or ease based on their stress levels. A student who's getting overwhelmed gets a gentler experience. A student who's disengaged gets more emotional intensity. Each person gets the training that works for them.

The Technical Deep Dive

For those interested in the engineering:

Emotion Recognition Model

We trained a deep neural network on valence-arousal classification:

- Input: Multi-modal feature vectors (EEG power bands, HRV metrics, EDA features)

- Architecture: LSTM layers to capture temporal dynamics, followed by dense classification layers

- Output: Continuous valence and arousal predictions

- Performance: 78% accuracy on held-out test data

The model runs on-device with under 50 ms of latency, enabling truly real-time adaptation.
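To illustrate how continuous valence and arousal predictions can be turned into discrete states, here is a minimal sketch that splits the valence-arousal plane into four quadrants. The quadrant scheme, the label names, and the `quadrant` function are assumptions for illustration; the discretization actually used in CAEVR is not described in this post.

```python
def quadrant(valence, arousal, midpoint=0.0):
    """Map a (valence, arousal) pair to one of four coarse emotional states.

    Assumes both values are centred on `midpoint` (e.g. scaled to -1..1).
    Labels are illustrative, not the classes used in the study.
    """
    if arousal >= midpoint:
        return "excited" if valence >= midpoint else "stressed"
    return "calm" if valence >= midpoint else "bored"


print(quadrant(0.6, 0.7))    # pleasant and activated
print(quadrant(-0.5, 0.8))   # unpleasant and activated
print(quadrant(0.4, -0.6))   # pleasant and relaxed
print(quadrant(-0.3, -0.7))  # unpleasant and deactivated
```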

Adaptation Engine

The adaptation engine maps emotional states to environment parameters:

- Rule-based layer: Basic mappings (high stress → softer lighting)

- Learning layer: Personalized adjustments based on individual responses

- Context layer: Different adaptation strategies for different narrative moments

We found that purely algorithmic adaptation felt artificial. Adding context-awareness (knowing what was happening in the story, not just how you were reacting) made the adaptation feel intuitive.
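The three layers can be pictured as a small function pipeline. The sketch below is a hypothetical illustration under simple assumptions: valence and arousal are scaled to -1..1, "stress" is approximated as high arousal combined with negative valence, the learning layer is reduced to a single per-user gain, and the scene names and scale factors in `CONTEXT_SCALE` are invented for the example.

```python
from dataclasses import dataclass


@dataclass
class EmotionEstimate:
    valence: float  # -1 (negative) .. 1 (positive)
    arousal: float  # -1 (calm) .. 1 (activated)


# Context layer: how strongly adaptation may act in each narrative moment.
# Scene names and values are hypothetical.
CONTEXT_SCALE = {"calm_scene": 1.0, "climax": 0.3}


def rule_layer(estimate):
    """Rule-based mapping: high stress -> softer lighting and slower pacing."""
    stress = max(0.0, estimate.arousal) * max(0.0, -estimate.valence)
    return {"light_softness": stress, "pace_slowdown": stress}


def adapt(estimate, scene, user_gain=1.0):
    """Combine the rule output with a per-user gain and a per-scene scale."""
    scale = CONTEXT_SCALE.get(scene, 0.5) * user_gain
    return {name: min(1.0, value * scale)
            for name, value in rule_layer(estimate).items()}


stressed = EmotionEstimate(valence=-0.8, arousal=0.9)
print(adapt(stressed, "calm_scene"))
print(adapt(stressed, "climax"))
```

In this sketch the same stressed reading produces a strong adjustment during a calm scene and a much weaker one during a climax, which is the kind of behavior the context layer is meant to allow.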

What We Found in User Studies

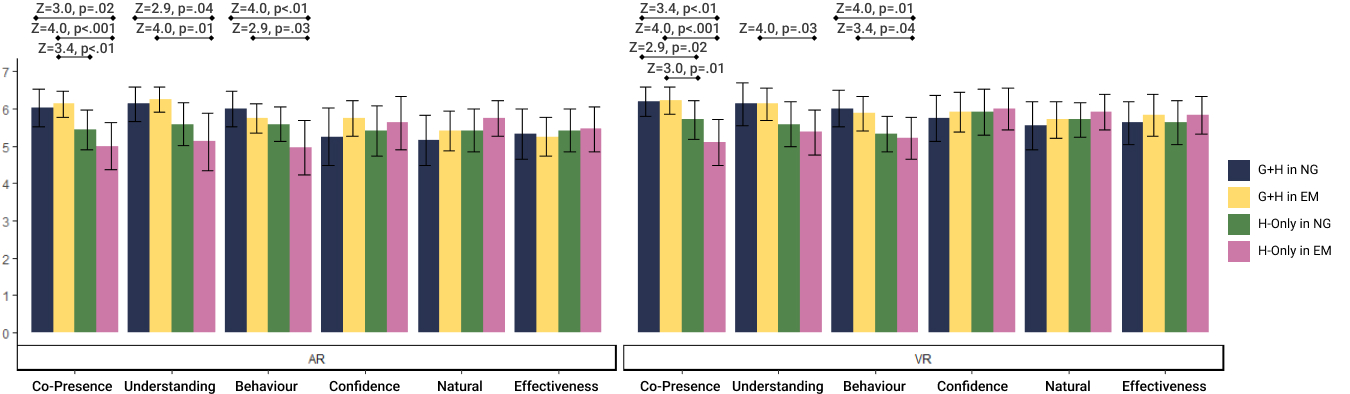

We conducted a controlled study comparing CAEVR to non-adaptive VR. The results were striking:

Emotional Response

- Significant increase in positive emotions in the CAEVR condition

- Reduced negative emotional responses, particularly anxiety and frustration

- More consistent engagement across the experience (less "checking out")

Empathy Outcomes

- Higher cognitive engagement with virtual characters

- Increased self-reported empathy toward the virtual character

- Better recall of emotional aspects of the experience

User Preferences

- When debriefed, most participants couldn't articulate what was different about CAEVR

- But they consistently rated it as feeling more "natural" and "personal"

- This suggests successful adaptation: the technology became invisible

The Bigger Picture

CAEVR is part of a broader vision: technology that understands and responds to human emotional states. This isn't about manipulation. It's about responsiveness.

Think about how a good friend or therapist responds to you. They pick up on subtle cues. They adjust their approach based on how you're doing. They meet you where you are.

What if our technology could do the same?

📚 Personal Reflections: What I Learned

Building CAEVR taught me several things that continue to shape my research:

True Immersion Is About Resonance

Before this project, I thought immersion was about sensory fidelity: higher resolution, wider field of view, more realistic graphics. I was wrong.

True immersion happens when an experience resonates with your inner state. When technology responds to how you actually feel, it stops feeling like technology. The boundary between self and experience softens.

The Best Technology Disappears

Our best user study results came when participants couldn't explain why they preferred CAEVR. The adaptation was working, but it was invisible.

This taught me that the goal isn't impressive technology. It's technology that serves the human experience so well that you forget it's there.

Empathy Can Be Designed

I used to think empathy was purely an individual trait: you either have it or you don't. CAEVR showed me that empathy can be cultivated through well-designed experiences.

This has profound implications. If we can create environments that reliably foster empathy, we have a powerful tool for addressing some of society's deepest challenges.

Biosignals Are Windows, Not Mirrors

Physiological signals don't tell you exactly what someone is feeling; they provide clues. The same heart rate pattern might indicate excitement or anxiety depending on context.

Building CAEVR taught me humility about what technology can and can't know about human experience. The goal isn't perfect emotional recognition. It's responsive, adaptive systems that gracefully handle uncertainty.

Impact and What's Next

CAEVR was published in IEEE TVCG (Transactions on Visualization and Computer Graphics) and presented at IEEE VR 2024, one of the top venues for VR research.

Since publication, we've seen interest from:

- Healthcare training programs exploring empathy-based medical education

- Therapy researchers investigating adaptive VR for anxiety and trauma

- Game designers interested in emotionally responsive narratives

I'm now exploring how CAEVR's principles can extend beyond VR into everyday computing. What if your phone knew when you were stressed and adjusted its notifications? What if your work applications understood your cognitive load and helped you manage it?

The future isn't about smarter devices. It's about devices that understand us.