Turning Your Hand Into a Touch Controller

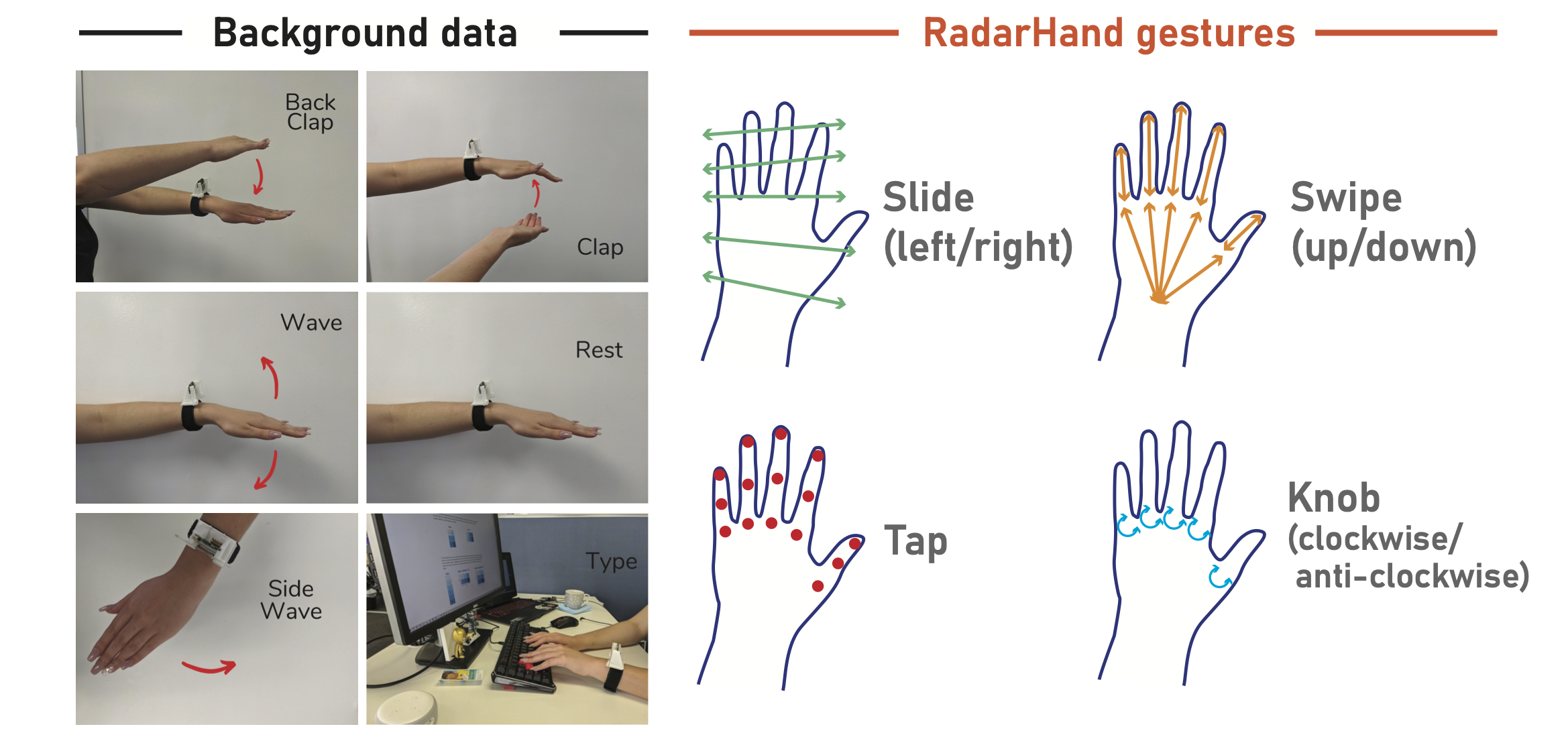

In simple terms: RadarHand is a smartwatch that uses radar (the same technology used in car collision detection) to sense when and where you touch your own hand. Touch your thumb to your index finger for one action, touch your wrist for another. Your hand becomes a controller that's always with you, no extra devices needed.

🎯 Key Takeaways

- 92% accuracy detecting 7 different touch gestures on the hand

- Privacy-preserving - radar doesn't capture images, unlike cameras

- Works through clothing and in any lighting conditions

- Leverages proprioception - your body's natural sense of where your hands are

- Part of a Google ATAP collaboration - building on Project Soli radar technology

The Journey to This Paper

RadarHand represents the culmination of years of work on radar-based interaction, starting from my collaboration with Google ATAP (Advanced Technology and Projects) and extending through my Master's thesis.

This paper, published in ACM TOCHI (one of the most prestigious HCI journals), took our earlier CHI work on proprioceptive gestures and transformed it into a practical wearable system. The journey from "cool demo" to "usable device" taught me more about HCI than any textbook could.

Why Radar? (And Why Not Cameras?)

When I tell people we use radar for gesture recognition, the first question is usually: "Why not just use a camera?"

Cameras seem simpler, and computer vision has made incredible advances. But for on-body interaction, radar has several crucial advantages:

1. Privacy

Radar doesn't capture identifiable images. It sees shapes and motion, but not faces or details. For a wearable that you'd use throughout your day, this matters enormously.

2. Robustness

Cameras struggle with:

- Low light or bright sunlight

- Occlusion (when something blocks the view)

- Fast motion (blur)

Radar works consistently in any lighting, through thin clothing, and can track motion at millisecond precision.

3. Power Efficiency

Camera systems require significant processing power for real-time image analysis. Our radar approach uses a fraction of the energy, essential for battery-powered wearables.

4. Precision

FMCW (Frequency Modulated Continuous Wave) radar can detect sub-millimeter movements. We can tell the difference between a light tap and a firm press, between touching your thumb and touching your wrist.
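A quick back-of-the-envelope sketch of where that precision comes from, using standard FMCW relations rather than anything specific to RadarHand. The carrier frequency and sweep bandwidth below are illustrative assumptions, not the device's actual configuration. Range bins alone are centimeters wide, but tracking the phase of a reflection within one bin resolves motion far below a millimeter.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # m/s

def range_resolution(bandwidth_hz: float) -> float:
    """Smallest separation (m) at which two reflectors fall into distinct range bins."""
    return SPEED_OF_LIGHT / (2.0 * bandwidth_hz)

def displacement_from_phase(delta_phase_rad: float, carrier_hz: float) -> float:
    """Radial displacement (m) implied by a phase change within one range bin.

    The round-trip path changes by 2*d, so delta_phi = 4*pi*d / wavelength.
    """
    wavelength = SPEED_OF_LIGHT / carrier_hz
    return wavelength * delta_phase_rad / (4.0 * math.pi)

# Illustrative values only (assumed, not RadarHand's real settings):
CARRIER_HZ = 60e9   # 60 GHz band
BANDWIDTH_HZ = 4e9  # 4 GHz chirp sweep

res_cm = range_resolution(BANDWIDTH_HZ) * 100
disp_mm = displacement_from_phase(0.1, CARRIER_HZ) * 1000
print(f"range resolution: {res_cm:.2f} cm")
print(f"0.1 rad phase shift ~ {disp_mm:.3f} mm of motion")
```

With these assumed numbers the range bins come out at a few centimeters, while a 0.1 rad phase shift corresponds to roughly four hundredths of a millimeter, which is why phase tracking is the usual route to fine-grained motion sensing.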

The Proprioception Insight

Here's what makes RadarHand truly unique: we designed the system around proprioception, your body's sense of its own position in space.

Close your eyes and touch your thumb to your pinky. You don't need to look; you know where your fingers are. This is proprioception, and it's incredibly precise for certain regions of your body.

RadarHand leverages this existing human ability. Instead of learning complex gestures, you use touch points your body already knows. The gesture vocabulary feels natural because it's built on how your body already works.

What We Discovered About Proprioceptive Accuracy

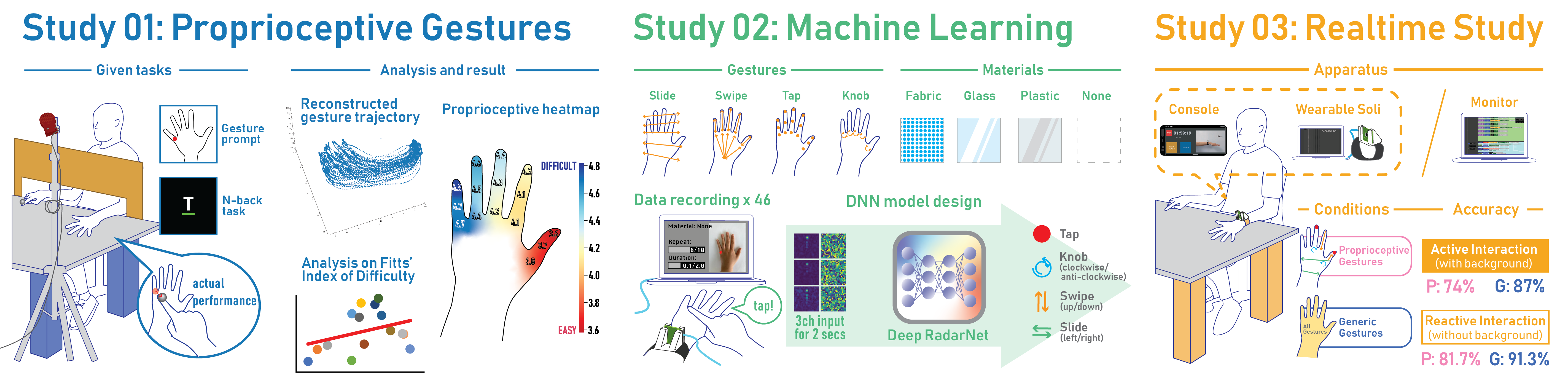

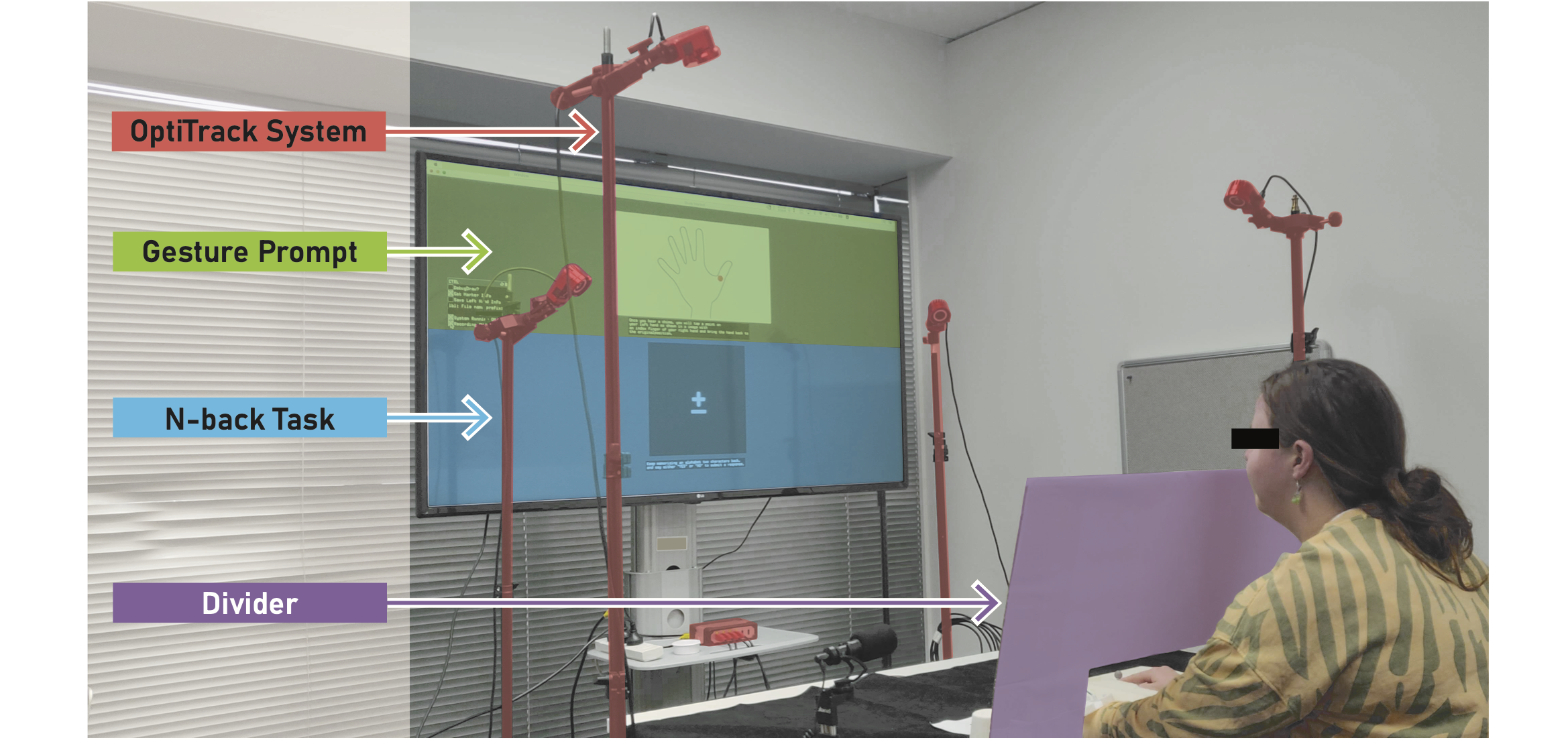

We conducted a comprehensive study with 24 participants to map proprioceptive accuracy across the back of the hand:

| Region | Relative Proprioceptive Accuracy |
|---|---|
| Thumb area | Highest (most accurate) |
| Index finger | Second most accurate |
| Middle finger | Moderate |
| Ring finger | Lower accuracy |
| Pinky area | Lowest (least accurate) |

This directly informed our gesture design. Critical actions (like answering a call) use thumb-based gestures. Less critical actions can use regions with lower proprioceptive accuracy.
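One way to express this rule, sketched as a simple greedy assignment: sort actions by how critical they are and hand them the regions in the accuracy order from the table above. The action names and the integer criticality scale are hypothetical, chosen only for illustration; this is not the mapping procedure used in the paper.

```python
from dataclasses import dataclass

# Regions ordered by the study's ranking, most to least accurate.
REGIONS_BY_ACCURACY = ["thumb", "index", "middle", "ring", "pinky"]

@dataclass
class Action:
    name: str
    criticality: int  # hypothetical scale: higher = more important to trigger correctly

def assign_regions(actions: list[Action]) -> dict[str, str]:
    """Greedily give the most critical actions the most accurate regions."""
    if len(actions) > len(REGIONS_BY_ACCURACY):
        raise ValueError("more actions than available regions")
    ranked = sorted(actions, key=lambda a: a.criticality, reverse=True)
    return {a.name: region for a, region in zip(ranked, REGIONS_BY_ACCURACY)}

# Hypothetical actions for illustration.
mapping = assign_regions([
    Action("next_track", 1),
    Action("answer_call", 3),
    Action("volume_up", 2),
])
print(mapping)
```

Here "answer_call" lands on the thumb and the least important action falls to a less accurate region, mirroring the design rule in the paragraph above.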

The Technical Challenge: Human Variability

The hardest part of RadarHand wasn't the radar signal processing; it was human variability.

Every person's hand:

- Moves differently (speed, trajectory, pressure)

- Has different proportions (finger length, hand size)

- Exhibits different gesture habits (how they tap, where exactly they touch)

Building a system that works reliably across this variability required:

- Extensive user studies - understanding the full range of human variation

- Adaptive algorithms - models that generalize across individuals

- Thoughtful gesture design - avoiding gestures that work for some people but not others
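A common way to check that a model generalizes across individuals is leave-one-participant-out evaluation: train on everyone except one person, test on that person, and repeat for each participant. The sketch below generates those splits from per-sample participant IDs. It illustrates the general technique and is not necessarily the exact protocol used for RadarHand; the participant IDs are made up.

```python
from collections import defaultdict
from typing import Iterator

def leave_one_participant_out(
    participant_ids: list[str],
) -> Iterator[tuple[str, list[int], list[int]]]:
    """Yield (held_out_participant, train_indices, test_indices) per participant.

    Every sample from the held-out person goes into the test set, so the model
    is always scored on a hand it never saw during training.
    """
    by_person = defaultdict(list)
    for idx, pid in enumerate(participant_ids):
        by_person[pid].append(idx)
    for held_out in sorted(by_person):
        test_idx = by_person[held_out]
        train_idx = [i for pid, idxs in by_person.items() if pid != held_out for i in idxs]
        yield held_out, sorted(train_idx), test_idx

# One participant ID per recorded sample (hypothetical data).
samples = ["p1", "p1", "p2", "p3", "p2"]
for person, train, test in leave_one_participant_out(samples):
    print(person, train, test)
```

In practice scikit-learn's `LeaveOneGroupOut` produces the same kind of split when you pass participant IDs as `groups`.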

Our deep learning model achieves:

- 92% accuracy for 7 generic gestures

- 93% accuracy for 8 discrete gestures

- 87% real-time accuracy in active interaction conditions (while walking, etc.)

These numbers required thousands of hours of data collection and countless iterations on the model architecture.

Design Guidelines We Discovered

From our three user studies, we derived guidelines that any wearable gesture system should consider:

1. Design Around Proprioception

Proprioceptive accuracy decreases from thumb to pinky. Place critical functions in regions where users can reliably target without looking.

2. Prefer Discrete Over Continuous Gestures

Gestures with clear start and end points (touch-and-release) perform better than continuous gestures (sliding). Users are more consistent, and the system can more reliably detect completion.
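To see why clear start and end points help, here is a minimal sketch of touch-and-release segmentation with hysteresis. It assumes a hypothetical per-frame "touch likelihood" score; a gesture is emitted only once the score rises above an on-threshold and later drops below a lower off-threshold. The thresholds and minimum duration are illustrative assumptions, not RadarHand's pipeline.

```python
def detect_taps(signal: list[float], on_threshold: float, off_threshold: float,
                min_frames: int = 2) -> list[tuple[int, int]]:
    """Return (start_frame, end_frame) pairs for completed touch-and-release events.

    Two thresholds (hysteresis) keep noise near a single cutoff from splitting
    one touch into several events.
    """
    if off_threshold > on_threshold:
        raise ValueError("off_threshold must not exceed on_threshold")
    events = []
    start = None
    for i, value in enumerate(signal):
        if start is None and value >= on_threshold:
            start = i  # touch begins
        elif start is not None and value < off_threshold:
            if i - start >= min_frames:
                events.append((start, i))  # release seen: gesture is complete
            start = None  # too-short blips are discarded
    return events

# Hypothetical per-frame touch scores: one real tap, then a brief blip.
scores = [0.1, 0.9, 0.8, 0.55, 0.7, 0.2, 0.1, 0.95, 0.3, 0.1]
print(detect_taps(scores, on_threshold=0.6, off_threshold=0.4))
```

A discrete gesture gives the detector an unambiguous "done" moment at release. A continuous slide has no such moment, so the system must guess when the user has finished.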

3. Provide Tactile Feedback

Users strongly prefer some form of confirmation when a gesture is recognized. Haptic feedback (vibration) works well, but even audio feedback helps.
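A minimal sketch of how recognition and confirmation might be wired together, assuming the recognizer outputs a probability per gesture. The `on_gesture` and `vibrate` callbacks, the gesture names, and the 40 ms pulse length are all hypothetical stand-ins for whatever the watch platform actually provides. The idea is to confirm only confident predictions, so the buzz reliably means "I understood you".

```python
from typing import Callable, Optional

def handle_prediction(probs: dict[str, float], threshold: float,
                      on_gesture: Callable[[str], None],
                      vibrate: Callable[[int], None]) -> Optional[str]:
    """Act on the top gesture only if it is confident enough, then buzz to confirm."""
    gesture, confidence = max(probs.items(), key=lambda kv: kv[1])
    if confidence < threshold:
        return None  # stay silent rather than confirm a guess
    on_gesture(gesture)
    vibrate(40)  # hypothetical 40 ms haptic pulse
    return gesture

# Usage with stand-in callbacks that just record what happened.
fired, buzzes = [], []
handle_prediction({"tap_thumb": 0.9, "tap_wrist": 0.1}, 0.8, fired.append, buzzes.append)
print(fired, buzzes)
```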

4. Reserve Best Regions for Critical Functions

Emergency functions (like calling for help) should use the most proprioceptively accurate regions (thumb area). Less critical functions can use less accurate regions.

What This Means for the Future

RadarHand points toward a future where wearables understand not just what you do, but how your body moves.

Current smartwatches mostly detect discrete button presses or simple gestures like wrist rotations. RadarHand expands this to a rich vocabulary of touch-based interactions, all sensed by a device already on your wrist.

Imagine:

- Silently controlling music by tapping your thumb during a meeting

- Answering calls with a gesture while your phone is in your pocket

- Accessibility applications for users who can't easily interact with touchscreens

- VR/AR input using your hand as an always-available controller

The Google ATAP Connection

I'm proud that this research emerged from collaboration with Google ATAP's Project Soli team. Their pioneering work on miniature radar for gesture sensing opened up possibilities that didn't exist a few years ago.

Working with industry partners while maintaining academic rigor taught me how to bridge the gap between research and real-world application. The techniques we developed are now informing next-generation wearable designs.

📚 Personal Reflections: What I Learned

Technology Should Amplify Human Abilities

RadarHand works with the body, not against it. We didn't ask users to learn arbitrary gestures; we designed around how bodies already work.

This principle now guides all my research: the best technology amplifies existing human abilities rather than demanding we develop new ones.

The Gap Between "Working" and "Reliable" Is Vast

I had a working radar gesture detector early in the project. Getting it to work reliably across different people, lighting conditions, and activities took years.

This gap is where most research projects die. Crossing it requires patience, rigor, and willingness to collect far more data than seems necessary.

User Studies Reveal Surprises

I thought I understood how people use their hands. The user studies proved me wrong constantly. People touch in ways I hadn't predicted. They have difficulty with gestures I thought were easy. They excel at gestures I thought were hard.

Never assume you understand users. Always test.

Cross-Disciplinary Work Is Hard but Essential

RadarHand required expertise in radar signal processing, machine learning, human factors, wearable design, and product thinking. No single person has all these skills.

Learning to collaborate across disciplines, speaking enough of each other's language to communicate effectively, was one of the most valuable skills I developed.

The Legacy

This work continues to influence my research. Its core principle, using technology that works with human abilities rather than requiring new ones, guides how I think about AI augmentation, affective computing, and collaborative systems.

When I design a system now, I always ask: What existing human capacities can we leverage? What can the body already do that technology could enhance?

RadarHand showed me that the most powerful technology isn't the most complex; it's the technology that fits seamlessly into how humans already live.