Paper2Rap: Building a Research-to-Rap Studio at 35,000 Feet

I built Paper2Rap because I kept running into the same tension: research papers hold incredible ideas, but the format is still too dense, too static, and too easy to ignore unless you already live inside academia.

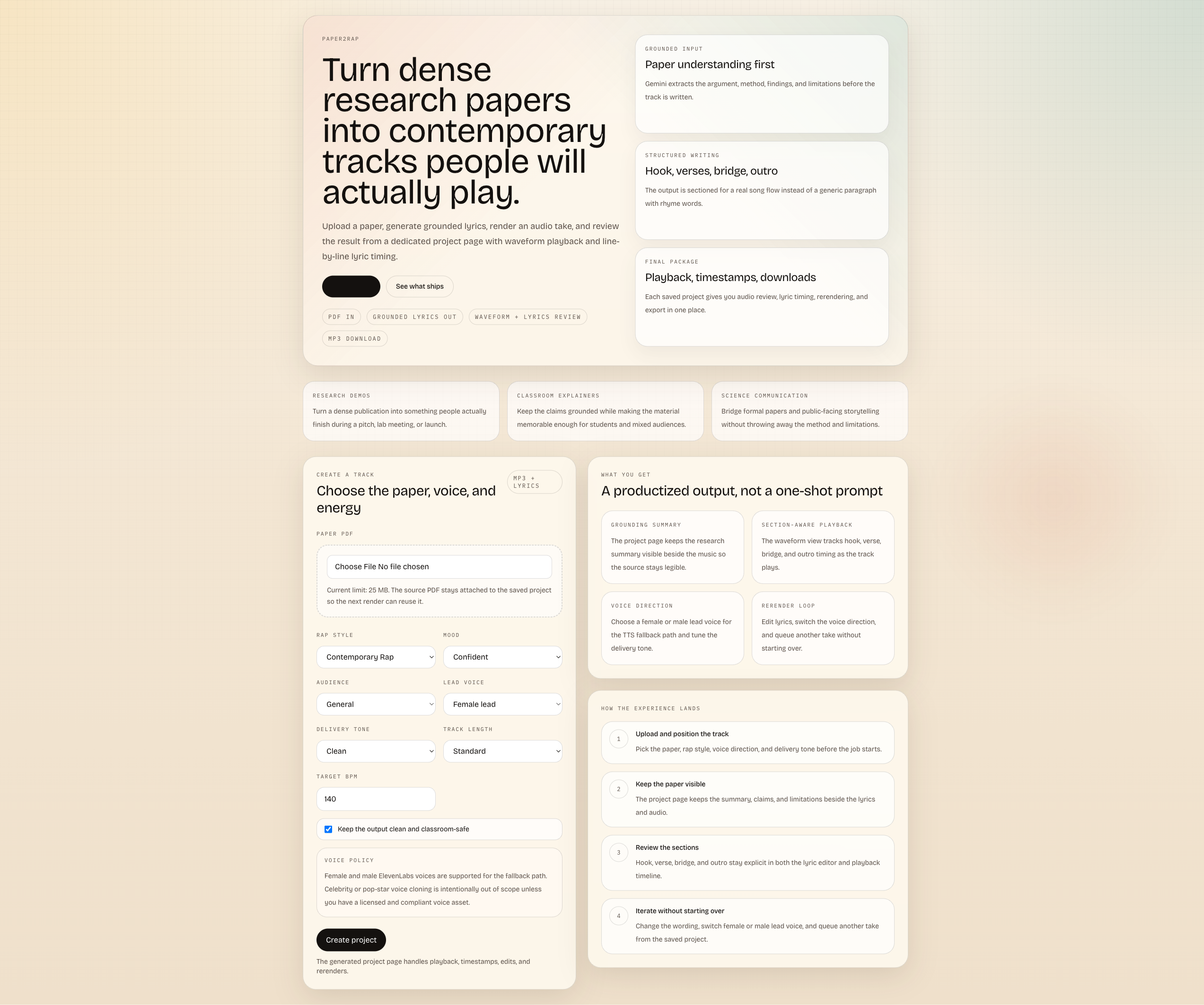

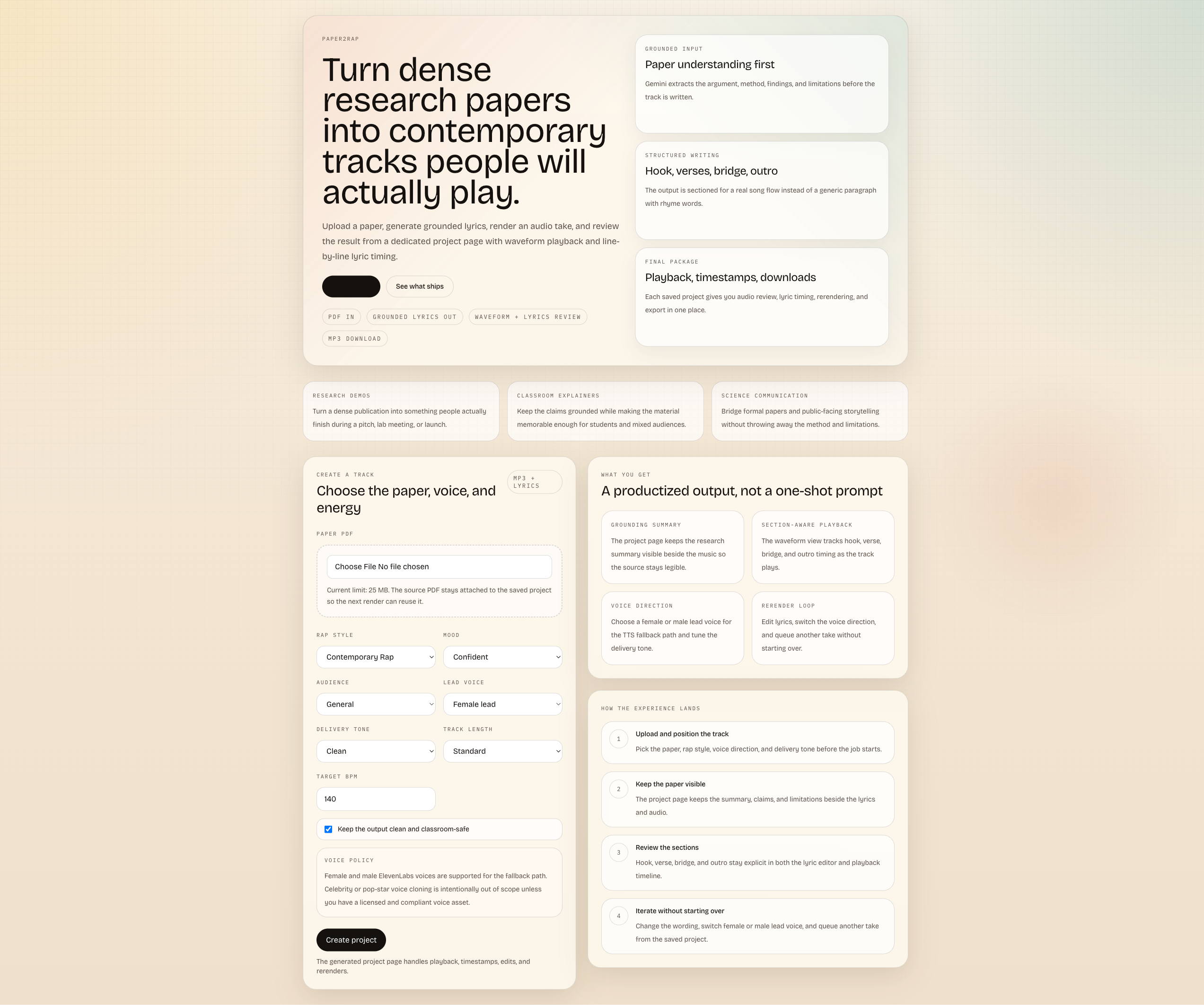

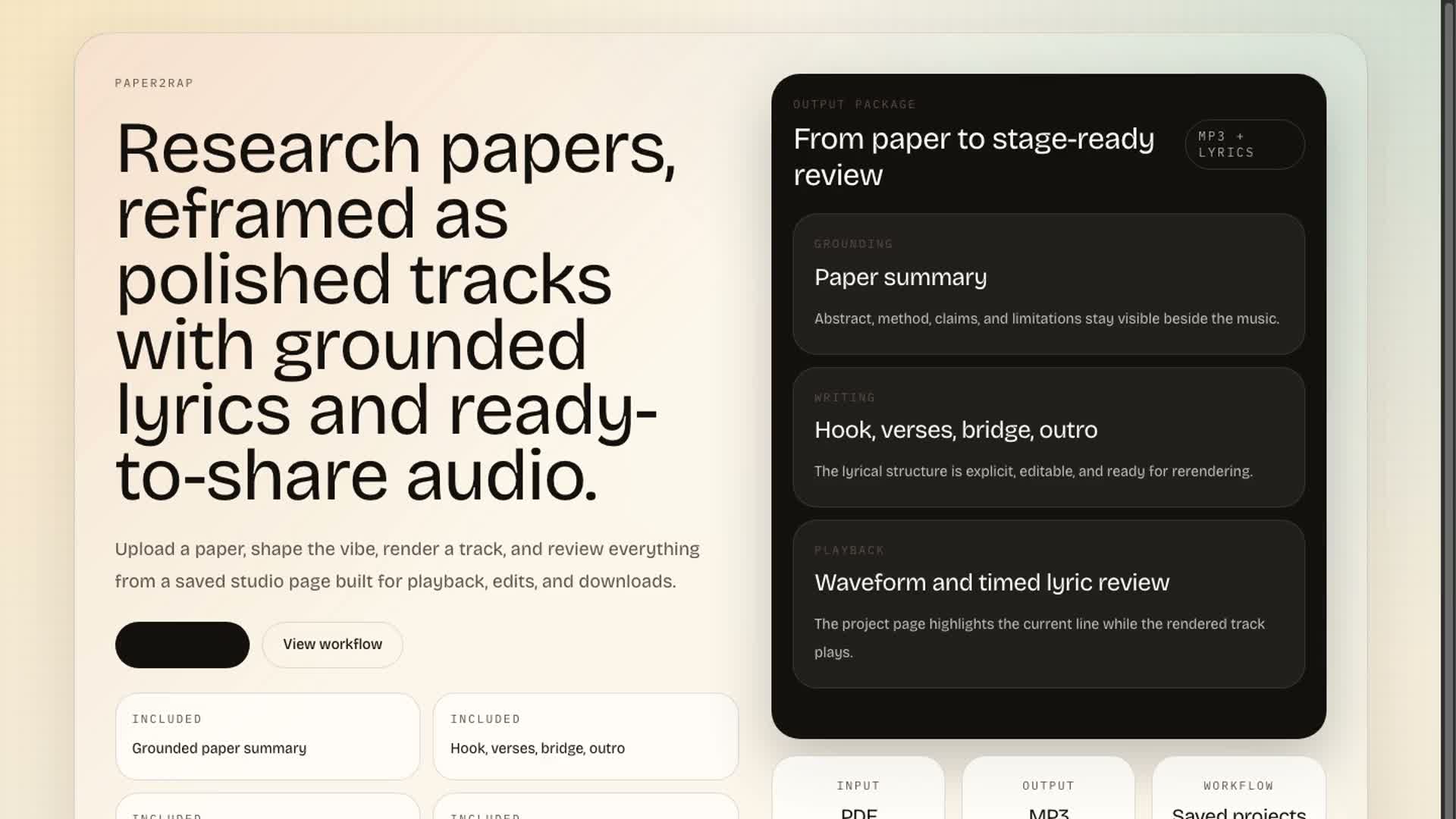

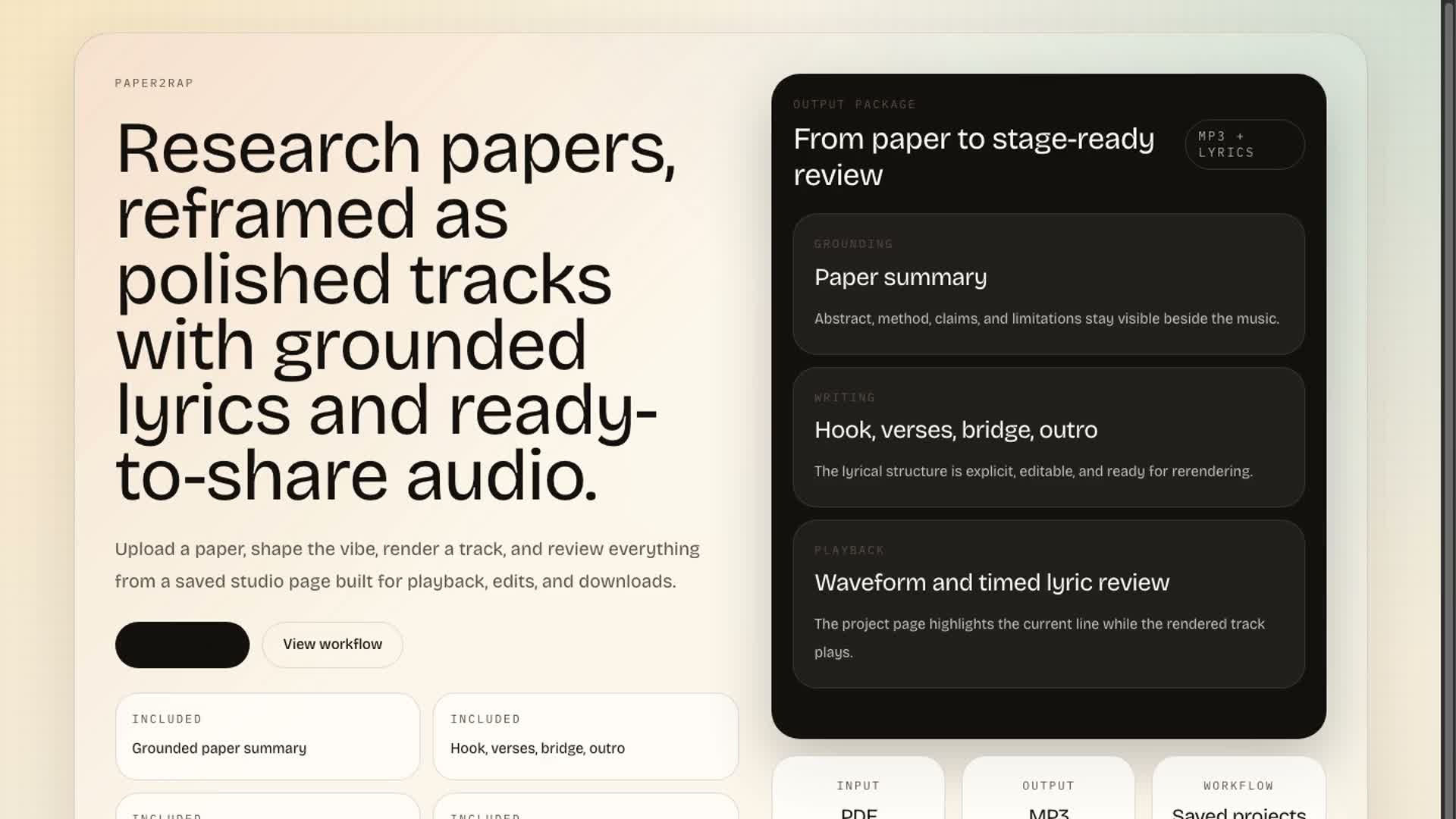

So I asked a strange but serious question: what if a paper could become a track? Not a joke summary. Not random rhymes. An actual workflow that reads a PDF, extracts grounded claims, writes sectioned lyrics, renders audio, and gives you a saved project page where the paper, the lyrics, and the song all stay tied together.

The first real version came together during a flight. That constraint shaped the whole product. I did not want a fragile demo built out of five cloud services and a prayer. I wanted one app, one durable flow, and one result page that still made sense after landing.

You can explore the code in the Paper2Rap GitHub repository.

The Core Idea

Paper2Rap turns a research paper into a creative artifact people can actually replay.

The current app flow is simple:

- Upload a PDF.

- Extract the paper into structured claims, methods, findings, and limitations.

- Draft lyrics that stay grounded in the source.

- Render a track.

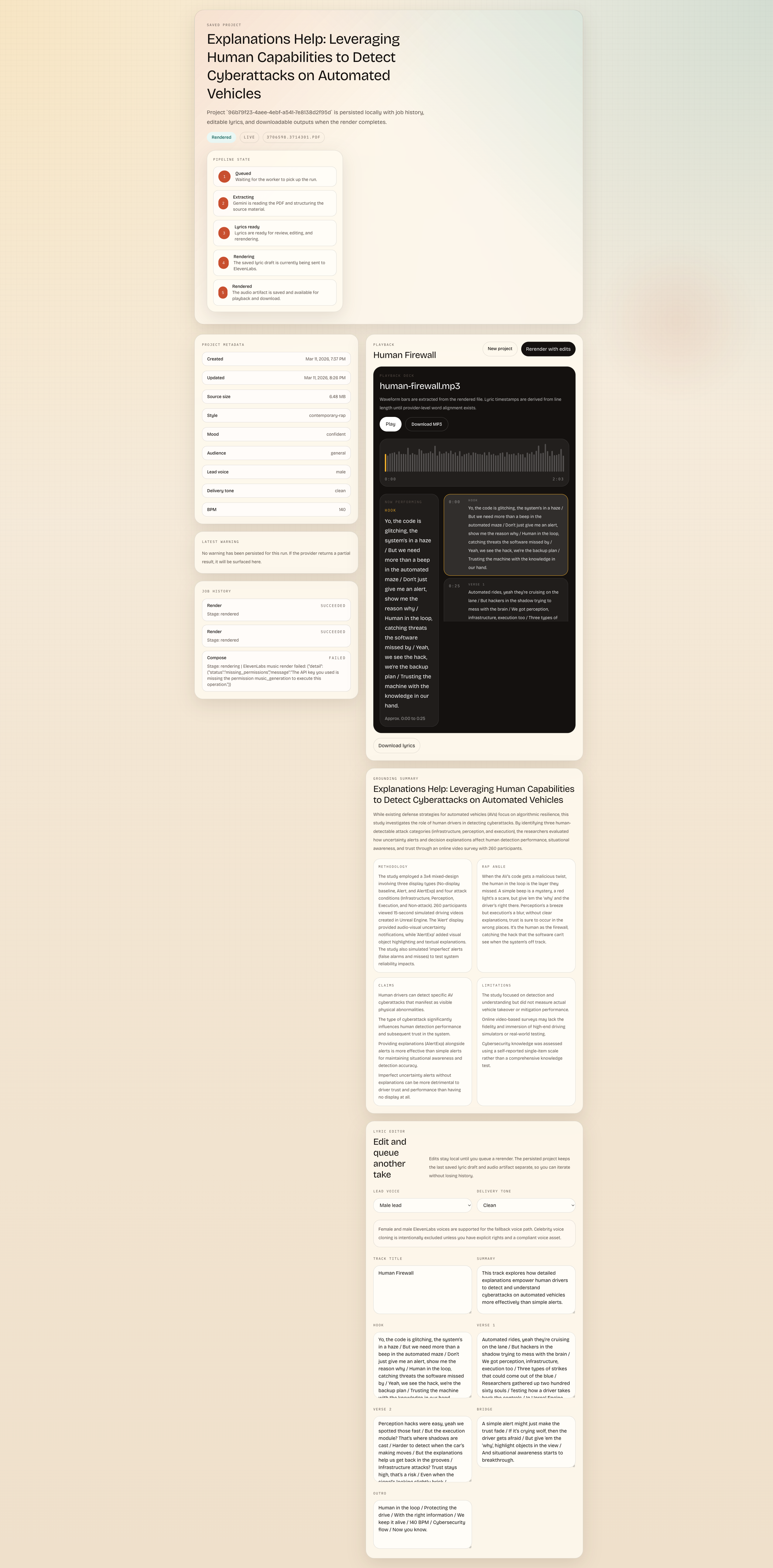

- Review the result in a saved project page with waveform playback, lyric timing, editing, rerendering, and downloads.

That product spine matters more than the gimmick. I was never trying to make a joke app. I wanted research communication to become something people might actually replay.

Demo

The embedded intro below comes from the Paper2Rap marketing build in the repo. The longer workflow video uses a render based on my CLARA paper, which you can read about here.

If you want the full walkthrough, here is the longer workflow demo.

The Shipped Stack

The version currently checked into the repo is the online/cloud-backed path:

| Layer | Current implementation | Why it worked |
|---|---|---|
| App shell | Next.js App Router | One codebase for UI and API routes |
| Persistence | Local node:sqlite + local artifact storage | Durable enough for a serious MVP without infra overhead |
| Extraction + lyric drafting | Gemini document understanding + structured outputs | Good PDF understanding, predictable JSON, and fast iteration |
| Music render | ElevenLabs Music first | Best path when the API key has music access |
| Audio fallback | ElevenLabs TTS + FFmpeg beat bed | Keeps the product usable even when music generation is unavailable |
| Job execution | In-process worker | Fastest way to make project pages durable without a separate queue service |

That last point is important. I did not begin by optimizing for perfect architecture. I optimized for a complete vertical slice:

- upload the paper

- save the project immediately

- keep the source file and metadata

- survive provider failures

- come back to a project later and rerender it
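The "save first, render later" part of that slice can be sketched as a tiny in-process runner. This is a minimal sketch, not the repo's worker: a `Map` stands in for the SQLite projects table, and `ProjectRecord`, `InProcessWorker`, and `enqueue` are hypothetical names. The project record exists before any model call runs, so a provider failure leaves a saved project with an error message instead of nothing.

```typescript
// Hypothetical status values for a saved project.
type ProjectStatus = "queued" | "running" | "ready" | "failed";

type ProjectRecord = {
  id: string;
  sourceFile: string; // path to the uploaded PDF, kept so the project can be rerendered later
  status: ProjectStatus;
  error?: string;
};

// Minimal in-process worker: jobs run one at a time inside the web server process.
// A Map stands in for the SQLite table used by the real app (assumption).
class InProcessWorker {
  readonly projects = new Map<string, ProjectRecord>();
  private chain: Promise<void> = Promise.resolve();

  // Saves the project immediately, then schedules its job behind any job already running.
  enqueue(id: string, sourceFile: string, job: () => Promise<void>): Promise<void> {
    this.projects.set(id, { id, sourceFile, status: "queued" });
    this.chain = this.chain.then(() => this.run(id, job));
    return this.chain;
  }

  private async run(id: string, job: () => Promise<void>): Promise<void> {
    const record = this.projects.get(id)!;
    record.status = "running";
    try {
      await job();
      record.status = "ready";
    } catch (err) {
      // A failed job marks the project as failed but keeps the record and its source file.
      record.status = "failed";
      record.error = err instanceof Error ? err.message : String(err);
    }
  }
}
```

Because the chain lives in process memory, a server restart drops any unfinished job; that is the price of skipping a separate queue service.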

That is why the repo still uses a single-service deployment today. I would rather have one version that holds together than a fake "scale-ready" architecture that collapses in demos.

While researching the current tooling for this post, I found something useful: the ecosystem has moved since I first built the app.

Google's current Gemini docs explicitly support document understanding for PDFs and JSON-schema-based structured outputs, which is almost exactly the pattern Paper2Rap needs for grounded paper extraction. On the music side, ElevenLabs now has a public POST /v1/music compose endpoint in the API reference, and ElevenLabs announced on March 6, 2026 that Eleven Music is available in the API. Some overview pages still lag behind and say API access is "coming soon," so this is one of those cases where the implementation docs are ahead of the marketing copy.

That matters because it means the product direction is no longer speculative. The cloud path is now even more straightforward to harden than it was when I started.

I Built Both Paths

One thing I care about deeply is not locking the idea to a single online stack.

By this point, I have already implemented both ways of thinking about the product:

| Mode | Status | Model path |
|---|---|---|
| Online mode | Working, and still the best overall | Gemini for extraction and lyric drafting, ElevenLabs for music or voice render |
| Local mode | Also implemented, and working nicely | Ollama running Qwen3-VL for multimodal paper understanding and Qwen3 for rewriting and lyric shaping, plus local MusicGen for offline music generation |

I did not want to jam both modes into one confused system prompt. I wanted the product surface to stay the same while the provider layer swapped underneath:

- same project page

- same saved artifacts

- same lyric review flow

- same rerender loop

- different inference backend depending on whether I need speed, cost control, privacy, or offline resilience
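One way to express "same product, different backend" is a single interface that both modes implement, picked per project. The sketch is illustrative: `InferenceBackend`, `BackendPreference`, and `pickBackend` are hypothetical names, the types are trimmed to a couple of fields, and the real adapters would wrap Gemini/ElevenLabs or Ollama/MusicGen behind these methods.

```typescript
// Trimmed app-owned types; the full versions carry more fields.
type PaperInsights = { title: string; keyClaims: string[] };
type LyricsDraft = { trackTitle: string; hook: string[] };

// The contract both modes implement; the UI only ever sees these app-owned types.
interface InferenceBackend {
  readonly name: "online" | "local";
  extract(pdf: Uint8Array): Promise<PaperInsights>;
  draftLyrics(insights: PaperInsights): Promise<LyricsDraft>;
  render(lyrics: LyricsDraft): Promise<Uint8Array>; // encoded audio bytes
}

// Hypothetical per-project preferences that decide which engine runs.
type BackendPreference = { offline: boolean; privateDraft: boolean };

// Local when offline or when the paper should not leave the machine; online otherwise.
function pickBackend(
  pref: BackendPreference,
  online: InferenceBackend,
  local: InferenceBackend,
): InferenceBackend {
  return pref.offline || pref.privateDraft ? local : online;
}
```

Project pages, artifacts, and the rerender loop depend only on `InferenceBackend`, so swapping engines never touches the review surface.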

That design choice came directly from building under travel constraints. If your product only works when every cloud dependency behaves perfectly, it is not durable enough.

After building both, the conclusion was pretty straightforward:

- the local version works

- the online version is still better

- the user experience should stay the same either way

The local path gives me privacy, control, and resilience. I like it a lot. It is satisfying to see the whole thing run through Ollama, Qwen, MusicGen, and local audio tooling without depending on external providers.

But if I am being honest about output quality, the online stack is the best version right now. Gemini is still stronger for paper extraction and grounded structuring, and ElevenLabs still gives me the cleaner, more production-ready render path.

That is the version I trust most when I want the sharpest demo, the cleanest results, and the most convincing end-to-end experience.

Why I Still Built the Local Version

I still wanted the local version for real reasons:

- private paper processing for drafts I do not want to send to the cloud

- lower marginal cost once the local stack is set up

- more resilience when connectivity is weak or unavailable

- tighter experimentation with prompts, lyric constraints, and render retries

The architecture I implemented looks like this:

- Ollama hosts qwen3-vl for multimodal paper understanding and qwen3 for lyric drafting and rewriting.

- A local adapter converts the extracted structure into the same app-owned schema the Gemini path already uses.

- MusicGen handles beat or music generation locally.

- The existing project page stays the main review surface for lyrics, playback, downloads, and rerendering.

That means the real product value is not any single model. It is the workflow contract:

- the paper goes in

- structure is extracted

- lyrics are grounded

- audio is rendered

- the project remains durable

If that contract is strong, models can change without the product losing its identity.

How I Implemented It

Going back through the current docs, I realized my implementation choices lined up with the ecosystem even better than I expected. Here is how I actually built Paper2Rap.

Cloud Path

For the online mode, I kept the flow simple:

- Upload the paper to Gemini's file API.

- Ask Gemini to extract the paper into a strict JSON schema.

- Turn that structured paper representation into a lyric package.

- Render music with ElevenLabs using either a direct prompt or a composition plan.

- Store the result and keep the project page durable.
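Those five steps can be sketched as a small orchestrator that remembers which stage a project reached. The step functions are injected and hypothetical (no Gemini or ElevenLabs calls are shown); the point is that each stage hands an app-owned value to the next, and a failure is reported with its stage name instead of disappearing.

```typescript
type Stage = "extract" | "draft" | "render" | "store";

// Hypothetical step functions; real ones would call the providers and the database.
type PipelineSteps<I, L, A> = {
  extract: (pdf: Uint8Array) => Promise<I>;
  draft: (insights: I) => Promise<L>;
  render: (lyrics: L) => Promise<A>;
  store: (result: { insights: I; lyrics: L; audio: A }) => Promise<void>;
};

type PipelineOutcome = { ok: true } | { ok: false; failedStage: Stage; message: string };

// Runs the stages in order and reports the first stage that throws.
async function runPipeline<I, L, A>(pdf: Uint8Array, steps: PipelineSteps<I, L, A>): Promise<PipelineOutcome> {
  let stage: Stage = "extract";
  try {
    const insights = await steps.extract(pdf);
    stage = "draft";
    const lyrics = await steps.draft(insights);
    stage = "render";
    const audio = await steps.render(lyrics);
    stage = "store";
    await steps.store({ insights, lyrics, audio });
    return { ok: true };
  } catch (err) {
    return { ok: false, failedStage: stage, message: err instanceof Error ? err.message : String(err) };
  }
}
```

Recording the failed stage is also what makes per-stage failure telemetry cheap to add later.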

The reason this path works so well is that the tooling aligns cleanly with the product:

- Gemini's PDF/document understanding flow already supports file upload plus model calls over the processed document.

- Gemini's structured outputs support JSON schema, which is exactly what I want for paper metadata, claims, findings, limitations, and lyric-plan scaffolding.

- ElevenLabs now exposes composition plans and music composition, which maps well to a sectioned song made from a paper.
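Because the lyric draft is already sectioned, mapping it onto a sectioned render request is mostly bookkeeping. The sketch below builds a generic, hypothetical section list; it is not the ElevenLabs composition-plan schema, whose exact field names should come from the API reference. The song form (hook, verse, hook, verse, bridge, hook, outro) and the per-line duration are assumptions chosen for illustration.

```typescript
type LyricsDraft = {
  trackTitle: string;
  hook: string[];
  verse1: string[];
  verse2: string[];
  bridge?: string[];
  outro?: string[];
};

type PlannedSection = { name: string; lines: string[]; durationMs: number };

// Assumed pacing for one rap line; tune per style.
const MS_PER_LINE = 2500;

// Lays out sections in an assumed song form, skipping missing or empty optional parts.
function toSectionPlan(draft: LyricsDraft): PlannedSection[] {
  const order: Array<[string, string[] | undefined]> = [
    ["Hook", draft.hook],
    ["Verse 1", draft.verse1],
    ["Hook", draft.hook],
    ["Verse 2", draft.verse2],
    ["Bridge", draft.bridge],
    ["Hook", draft.hook],
    ["Outro", draft.outro],
  ];
  return order
    .filter((entry): entry is [string, string[]] => Array.isArray(entry[1]) && entry[1].length > 0)
    .map(([name, lines]) => ({ name, lines, durationMs: lines.length * MS_PER_LINE }));
}
```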

At a high level, the TypeScript shape I wanted to preserve across both modes looks like this:

```typescript
// App-owned shape for what either provider extracts from a paper.
type PaperInsights = {
  title: string;
  abstractSummary: string;
  methodology: string;
  keyClaims: string[];
  findings: string[];
  limitations: string[];
  rapAngle: string;
};

// App-owned shape for a sectioned lyric draft; bridge and outro are optional.
type LyricsDraft = {
  trackTitle: string;
  hook: string[];
  verse1: string[];
  verse2: string[];
  bridge?: string[];
  outro?: string[];
};
```

The important engineering move is not just calling models. It is forcing both providers to return app-owned shapes instead of letting provider-specific responses leak through the whole codebase.
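A runtime check at the provider boundary is what keeps that promise, since a model can still return malformed JSON even when asked for a schema. This is a hand-rolled sketch (a schema library such as Zod would do the same job); `parsePaperInsights` is a hypothetical name, and the type is repeated so the block stands alone.

```typescript
type PaperInsights = {
  title: string;
  abstractSummary: string;
  methodology: string;
  keyClaims: string[];
  findings: string[];
  limitations: string[];
  rapAngle: string;
};

const isStringArray = (v: unknown): v is string[] =>
  Array.isArray(v) && v.every((x) => typeof x === "string");

// Accepts raw model output from either provider and returns the app-owned shape, or throws.
function parsePaperInsights(raw: string): PaperInsights {
  const data: unknown = JSON.parse(raw);
  if (typeof data !== "object" || data === null) throw new Error("insights: expected a JSON object");
  const o = data as Record<string, unknown>;
  for (const key of ["title", "abstractSummary", "methodology", "rapAngle"]) {
    if (typeof o[key] !== "string") throw new Error(`insights: ${key} must be a string`);
  }
  for (const key of ["keyClaims", "findings", "limitations"]) {
    if (!isStringArray(o[key])) throw new Error(`insights: ${key} must be a string array`);
  }
  // Copy only known fields so provider-specific extras are dropped.
  return {
    title: o.title as string,
    abstractSummary: o.abstractSummary as string,
    methodology: o.methodology as string,
    keyClaims: o.keyClaims as string[],
    findings: o.findings as string[],
    limitations: o.limitations as string[],
    rapAngle: o.rapAngle as string,
  };
}
```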

Local Path

The local path is where the project became more personal for me.

For PDF-heavy papers, I did not want to rely on a text-only local model. I leaned toward Qwen3-VL on Ollama, because the current model family is explicitly positioned for text-plus-image work, long context, OCR, and technical documents. Then I used the idea behind structured outputs in Ollama to constrain the extraction result to the same schema as the Gemini path.

In other words:

- qwen3-vl for reading the paper like a multimodal document

- qwen3 for lyric drafting, rewriting, and style transforms

- MusicGen via AudioCraft for offline music

- ffmpeg for packaging, normalization, and final MP3 assembly
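On the Ollama side, a structured extraction request is a non-streaming `/api/chat` call whose `format` field carries a JSON schema and whose message carries page images as base64 strings. The builder below only assembles that request body, so it runs without a server. The model tag, prompt wording, and `temperature: 0` are assumptions, and the page images are assumed to be rendered elsewhere.

```typescript
// JSON schema mirroring the app-owned PaperInsights type.
const paperInsightsSchema = {
  type: "object",
  properties: {
    title: { type: "string" },
    abstractSummary: { type: "string" },
    methodology: { type: "string" },
    keyClaims: { type: "array", items: { type: "string" } },
    findings: { type: "array", items: { type: "string" } },
    limitations: { type: "array", items: { type: "string" } },
    rapAngle: { type: "string" },
  },
  required: ["title", "abstractSummary", "methodology", "keyClaims", "findings", "limitations", "rapAngle"],
} as const;

type OllamaChatRequest = {
  model: string;
  messages: { role: "user"; content: string; images?: string[] }[];
  format: object;
  stream: false;
  options?: { temperature?: number };
};

// Builds a /api/chat body asking the vision model for schema-shaped JSON over the given pages.
function buildExtractionRequest(pageImagesBase64: string[], model = "qwen3-vl"): OllamaChatRequest {
  return {
    model,
    messages: [
      {
        role: "user",
        content: "Extract this research paper into JSON matching the schema. Use only claims stated in the pages.",
        images: pageImagesBase64,
      },
    ],
    format: paperInsightsSchema,
    stream: false,
    options: { temperature: 0 },
  };
}
```

The body would be POSTed to `http://localhost:11434/api/chat`, and the JSON text in the response's `message.content` is what gets validated into the app-owned type.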

That local pipeline works like this:

```typescript
// 1. Convert PDF pages to images or extract structured page assets.
// 2. Send those pages to qwen3-vl through Ollama with a JSON schema.
// 3. Ask qwen3 to turn the extracted paper insights into sectioned lyrics.
// 4. Feed a beat prompt into MusicGen.
// 5. Mix vocals and beat with ffmpeg.
// 6. Save the result into the same Paper2Rap project model.
```

The user sees one product. Under the hood, there are two different engines. That was always the goal.
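Step 5 of that local pipeline, mixing vocals and beat, is essentially one ffmpeg call. The helper below only builds the argument list so it can be inspected without running ffmpeg; the file names, the 0.35 beat gain, and the use of `loudnorm` for normalization are assumptions rather than the repo's exact settings. The array could be handed to Node's `child_process.spawn("ffmpeg", args)`.

```typescript
// Builds ffmpeg arguments that lower the beat's volume, mix it under the vocal,
// normalize loudness, and encode an MP3.
function buildMixArgs(vocalPath: string, beatPath: string, outPath: string, beatGain = 0.35): string[] {
  const filter =
    `[1:a]volume=${beatGain}[bed];` +
    // duration=first ends the mix when the vocal (input 0) ends.
    `[0:a][bed]amix=inputs=2:duration=first:dropout_transition=0[mix];` +
    // amix scales its inputs down by default, so normalize the result afterwards.
    `[mix]loudnorm[out]`;
  return [
    "-y",
    "-i", vocalPath,
    "-i", beatPath,
    "-filter_complex", filter,
    "-map", "[out]",
    "-ar", "44100",
    "-codec:a", "libmp3lame",
    "-q:a", "2",
    outPath,
  ];
}
```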

Why I Like This Split

The cloud path gives me:

- stronger out-of-the-box PDF handling

- lower local hardware requirements

- faster setup for demos and collaborators

The local path gives me:

- privacy for sensitive papers

- resilience when the internet is unstable

- better control over experimentation

- a version of the product that can travel with me

That last point matters more than it sounds. I built the spirit of this app while travelling. A tool that only works under ideal networked conditions would betray the original reason I started it.

Still, if someone asks me which version I would open in front of people right now, I would pick the online one. It is simply stronger. The extraction is better. The render is cleaner. The whole flow feels more polished.

Why This Matters to Me

I do not want to build AI products that only work in ideal demo conditions.

I want tools that survive messy reality:

- unstable internet

- incomplete API permissions

- long travel days

- limited budgets

- papers that need privacy

Paper2Rap started from a playful premise, but the deeper engineering question is serious: can a creative AI system stay useful when its dependencies change?

So for me, the online/local split is not just implementation detail. It is part of what the product is trying to be.

What Got Stuck

Every interesting build gets blocked somewhere. Paper2Rap was no exception.

1. Music generation permissions were not guaranteed

The clean path was: extract the paper, write the lyrics, send them to ElevenLabs Music, get back a proper track.

Reality was messier. If the API key does not have music_generation access, the render path fails. So I added a fallback chain:

- Try ElevenLabs Music.

- If that is unavailable, switch to ElevenLabs TTS.

- If TTS succeeds, use ffmpeg to mix a beat bed under the vocal so the user still gets an MP3.

That decision was not glamorous, but it made the app robust.
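The fallback order can be written as a loop over render strategies. In this sketch the strategies and their names are hypothetical; a real music strategy would throw when the key lacks `music_generation` access, the loop would move on to TTS plus a beat bed, and only if every option fails does it throw, with each failure reason in the message.

```typescript
type RenderResult = { audio: Uint8Array; mode: string };
type RenderStrategy = { mode: string; render: (lyrics: string[]) => Promise<Uint8Array> };

// Tries each strategy in order and returns the first success.
async function renderWithFallback(lyrics: string[], strategies: RenderStrategy[]): Promise<RenderResult> {
  const errors: string[] = [];
  for (const s of strategies) {
    try {
      return { audio: await s.render(lyrics), mode: s.mode };
    } catch (err) {
      errors.push(`${s.mode}: ${err instanceof Error ? err.message : String(err)}`);
    }
  }
  throw new Error(`all render paths failed (${errors.join("; ")})`);
}
```

Storing `mode` on the project also lets the page tell the user whether they got a full music render or the fallback.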

2. Lyric timing is still heuristic

Provider-level word alignment metadata is not available in the current path, so the lyric sync uses audio duration plus relative line length. That is good enough for review and demos, but not for sample-accurate captioning.

This is one of those product details that looks small until you build it. A raw audio element is not enough. Once you promise a playable song, the user expects timing, structure, jumping, highlighting, and confidence in what they are hearing.
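The heuristic is simple enough to show whole: split the track duration across lines in proportion to their length, then highlight whichever line spans the current playback time. This is a sketch of the idea as described, not the repo's code; `timeLyrics` and `activeLine` are hypothetical names, and counting characters (rather than syllables) is an assumption.

```typescript
type TimedLine = { text: string; startSec: number; endSec: number };

// Allocates the track duration to lines in proportion to their non-space character count.
function timeLyrics(lines: string[], durationSec: number): TimedLine[] {
  const weights = lines.map((l) => Math.max(1, l.replace(/\s+/g, "").length));
  const total = weights.reduce((a, b) => a + b, 0);
  let cursor = 0;
  return lines.map((text, i) => {
    const startSec = cursor;
    cursor += (weights[i] / total) * durationSec;
    // Pin the last line to the exact duration to avoid floating-point drift.
    const endSec = i === lines.length - 1 ? durationSec : cursor;
    return { text, startSec, endSec };
  });
}

// Index of the line to highlight at playback time t, or -1 if none matches.
function activeLine(timed: TimedLine[], t: number): number {
  return timed.findIndex((l) => t >= l.startSec && t < l.endSec);
}
```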

3. The MVP architecture is intentionally not the final architecture

The in-process worker and local storage are the reason the app exists in a stable form today, but they are also the first scaling ceiling:

- restarting the server interrupts active jobs

- SQLite and local artifacts are tied to one machine

- there is no real distributed queue yet

I am fine with that tradeoff for this stage. It let me get the core experience right first.

4. Research fidelity versus musicality is a real tension

If you push too hard toward academic fidelity, the lyrics become stiff.

If you push too hard toward musicality, the paper disappears under style.

Paper2Rap lives in that tension. The best output is not the most literal one. It is the one that keeps the paper's core claims intact while becoming memorable enough to replay.

Why Building It on a Flight Actually Helped

Building on a flight forced clarity.

No endless tool-switching. No architecture astronautics. No pretending I had weeks to design the ideal cloud platform. I had to make sharp decisions:

- keep the stack narrow

- make projects durable immediately

- isolate provider logic

- preserve the source paper

- make failure states survivable

That pressure produced a better MVP. It turned Paper2Rap into a product with a backbone instead of a flashy one-click demo.

In a weird way, the travel constraint pushed me toward the part of engineering I trust most: finish the end-to-end path first, then harden the seams.

There is also something psychologically useful about building in transit. A flight gives you a temporary world with fewer inputs. No errands. No meetings. No infinite browser wandering. Just the problem, the notebook in your head, and the version you can finish before landing.

Paper2Rap carries that feeling. It is a project shaped by movement, constraint, and urgency. Maybe that is why I like the idea of the local version so much too. It matches the original spirit of the build: make something that can travel with you.

The Journey Behind the Build

This project sits at the intersection of several things I care about:

- HCI and how people actually engage with information

- creative AI systems that do more than summarize

- research communication beyond the PDF

- products that can shift between cloud power and local control

That is why Paper2Rap still matters to me. It is playful, yes, but I do not think it is shallow. It is really a question about whether formal knowledge can become performable, memorable, and shareable without being distorted on the way.

Part of my journey as a builder has been learning that the best projects usually begin as slightly unreasonable questions. Paper2Rap definitely belongs in that category. On paper, it sounds absurd: upload a research PDF and get a rap track back. But when I kept pulling at the thread, the idea became more serious, not less.

Research communication is still trapped in formats that reward specialists and filter out everyone else. I do not think every paper should become a song. But I do think building something like Paper2Rap forces a useful design question:

What is the most memorable form a piece of knowledge can take without becoming dishonest?

That question is bigger than this app. It touches education, accessibility, public scholarship, science communication, and how we design AI systems that transform meaning instead of merely compressing text.

I also like that it exposed the uncomfortable engineering questions early:

- What counts as a faithful transformation of a paper?

- When should the system refuse to over-dramatize?

- How much grounding is enough before a creative artifact becomes misleading?

- When should the local path be preferred over the cloud path?

Those are the right questions for this kind of product.

And maybe that is what I enjoy most here: Paper2Rap is not finished, but it already reveals the right problems. That is usually the sign of a project worth continuing.

What I Would Build Next

The repo already points toward the next engineering steps, and I agree with that direction:

- Move job execution into a dedicated queue and worker service.

- Replace local-only storage with Postgres plus object storage.

- Persist lyric revision history instead of only the latest version.

- Add better telemetry for provider latency, failure stage, and cost.

- Fold the local-compatible path (Ollama + Qwen3 + MusicGen) into the main repo so the same product can run with far less cloud dependency.
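For the revision-history item, an append-only list is probably enough to start. This is a hypothetical shape, not something in the repo today: each edit adds a revision pointing at its parent, "latest" is simply the last entry, and a rerender can record exactly which lyric revision it used.

```typescript
type LyricRevision = {
  id: number;
  parentId: number | null;
  lines: string[];
  createdAt: string; // ISO timestamp
  note?: string;
};

class LyricHistory {
  private revisions: LyricRevision[] = [];

  // Appends a new revision; earlier versions are never overwritten.
  commit(lines: string[], note?: string): LyricRevision {
    const parent = this.latest();
    const rev: LyricRevision = {
      id: this.revisions.length + 1,
      parentId: parent ? parent.id : null,
      lines: [...lines],
      createdAt: new Date().toISOString(),
      note,
    };
    this.revisions.push(rev);
    return rev;
  }

  latest(): LyricRevision | undefined {
    return this.revisions[this.revisions.length - 1];
  }

  get(id: number): LyricRevision | undefined {
    return this.revisions.find((r) => r.id === id);
  }
}
```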

The important thing is that the core experience is already there. The app can take a PDF, keep the project durable, and return a grounded audio artifact with a review surface that feels like a real product.

That is enough of a spine to keep building on.

Closing

Paper2Rap started as a question I could not shake: can research become something people feel, replay, and remember?

The current answer is a working system built around Gemini, ElevenLabs, SQLite, local artifacts, and a stubborn refusal to let provider failures kill the user experience. I also built a local version around Ollama, Qwen, and MusicGen, and it works nicely. But if I am honest, the online version is still the best one today.

Either way, the mission stays the same: make academic ideas performable without flattening what made them worth reading in the first place.

For now, that idea lives in a repo, a product demo, a few rendered tracks, and one long memory of building on a flight.

That is enough to keep going.